Mark Billinghurst

Director

Prof. Mark Billinghurst has a wealth of knowledge and expertise in human-computer interface technology, particularly in the area of Augmented Reality (the overlay of three-dimensional images on the real world).

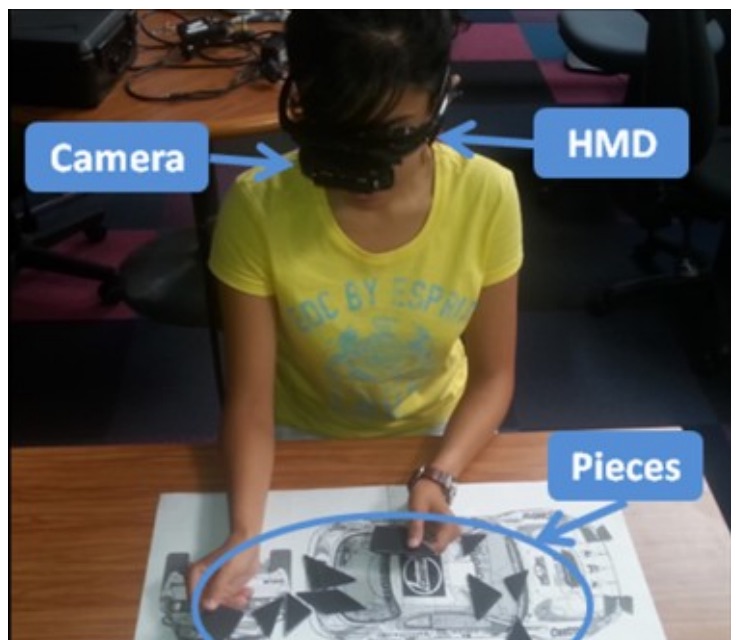

In 2002, the former HIT Lab US Research Associate completed his PhD in Electrical Engineering at the University of Washington, under the supervision of Professor Thomas Furness III and Professor Linda Shapiro. As part of the research for his thesis, titled Shared Space: Exploration in Collaborative Augmented Reality, Dr Billinghurst invented the Magic Book, an animated children’s book that comes to life when viewed through a lightweight head-mounted display (HMD).

Not surprisingly, Dr Billinghurst has received several accolades in recent years for his contribution to Human Interface Technology research. He was awarded a Discover Magazine Award for Entertainment in 2001 for creating the Magic Book technology. He was selected as one of eight leading New Zealand innovators and entrepreneurs to be showcased at the Carter Holt Harvey New Zealand Innovation Pavilion at the America’s Cup Village from November 2002 until March 2003. In 2004 he was nominated for a prestigious World Technology Network (WTN) World Technology Award in the education category, and in 2005 he was appointed to the New Zealand Government’s Growth and Innovation Advisory Board.

Originally educated in New Zealand, Dr Billinghurst is a two-time graduate of Waikato University, where he completed a BCMS (Bachelor of Computing and Mathematical Science) with first class honours in 1990 and a Master of Philosophy (Applied Mathematics & Physics) in 1992.

Research interests: Dr. Billinghurst’s research focuses primarily on advanced 3D user interfaces such as:

- Wearable Computing – Spatial and collaborative interfaces for small wearable computers. These interfaces address the idea of what is possible when you merge ubiquitous computing and communications on the body.

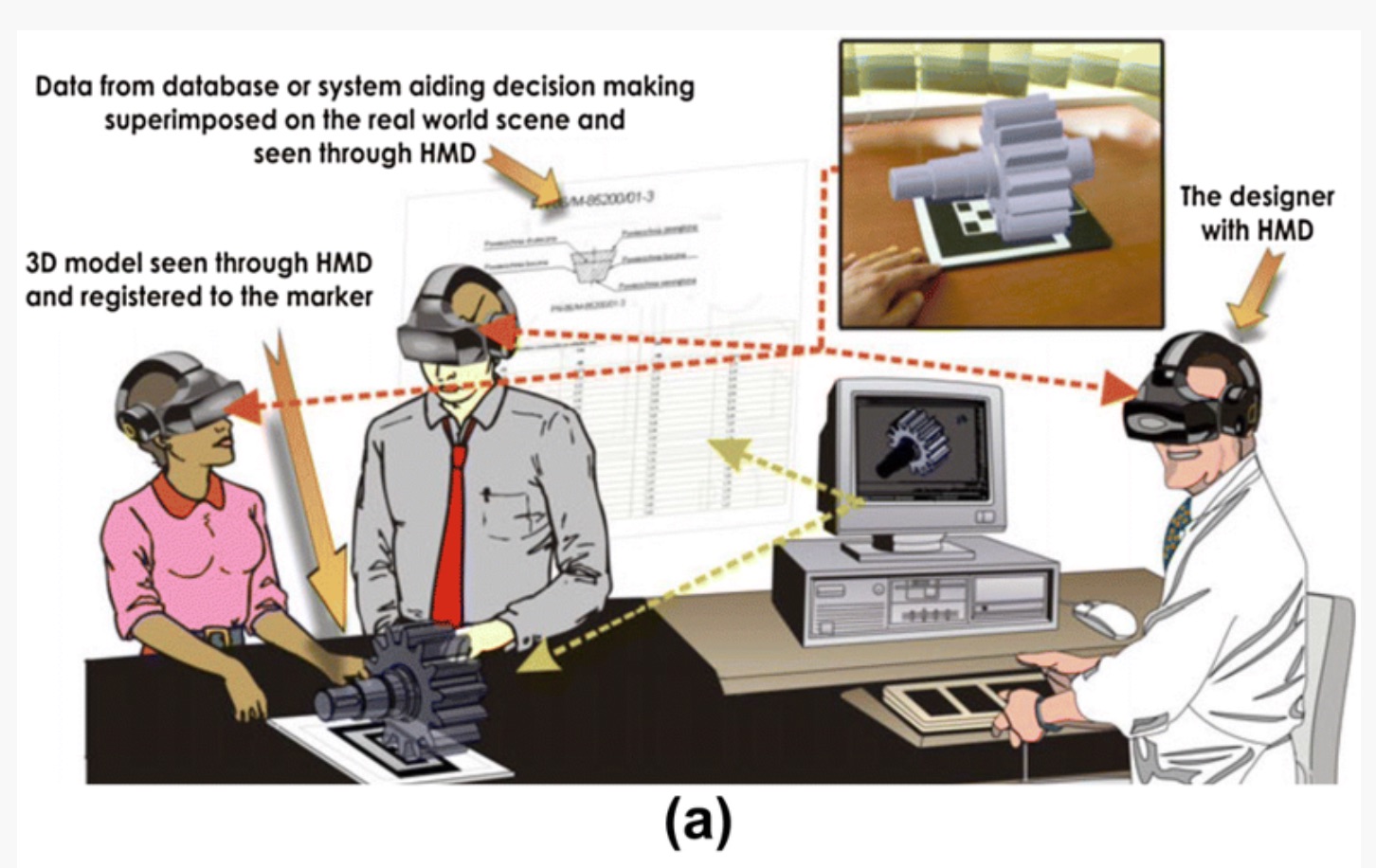

- Shared Space – An interface that demonstrates how augmented reality, the overlaying of virtual objects on the real world, can radically enhance face-to-face and remote collaboration.

- Multimodal Input – Combining natural language and artificial intelligence techniques to allow human-computer interaction with an intuitive mix of voice, gesture, speech, gaze and body motion.

Projects

-

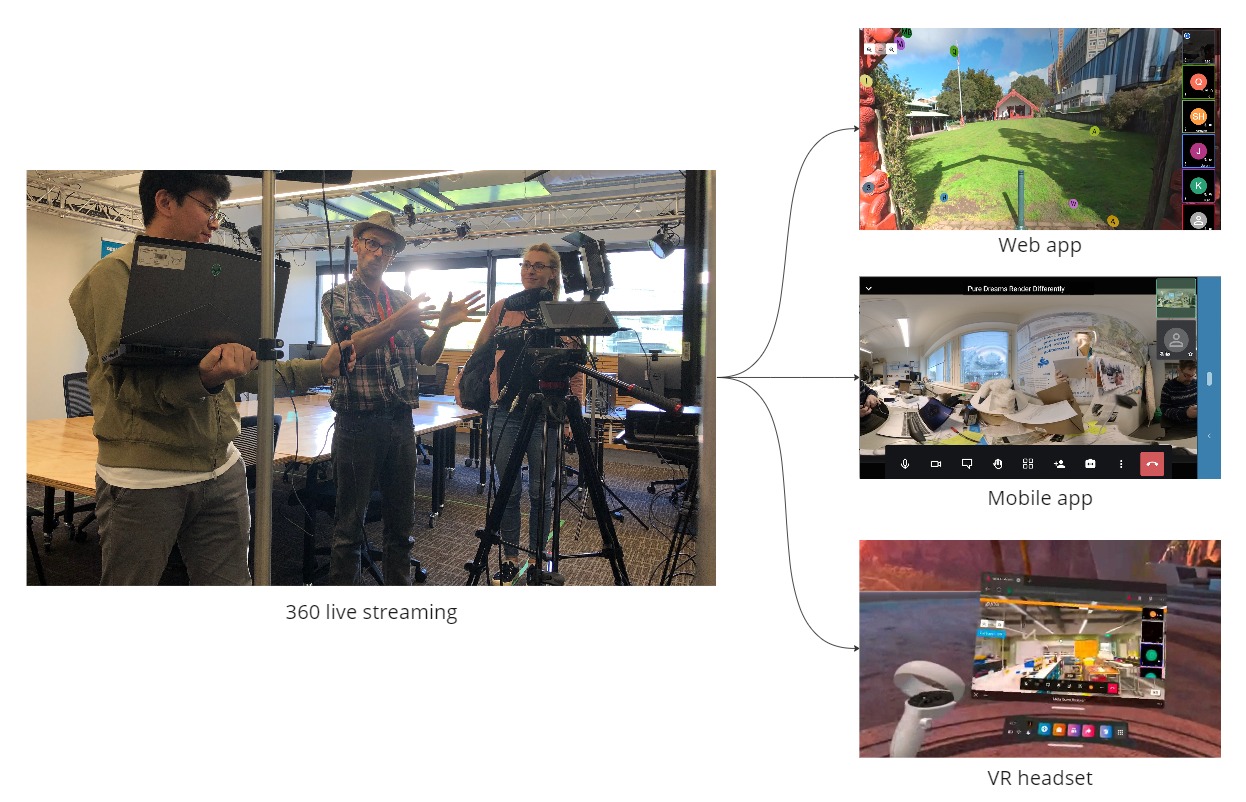

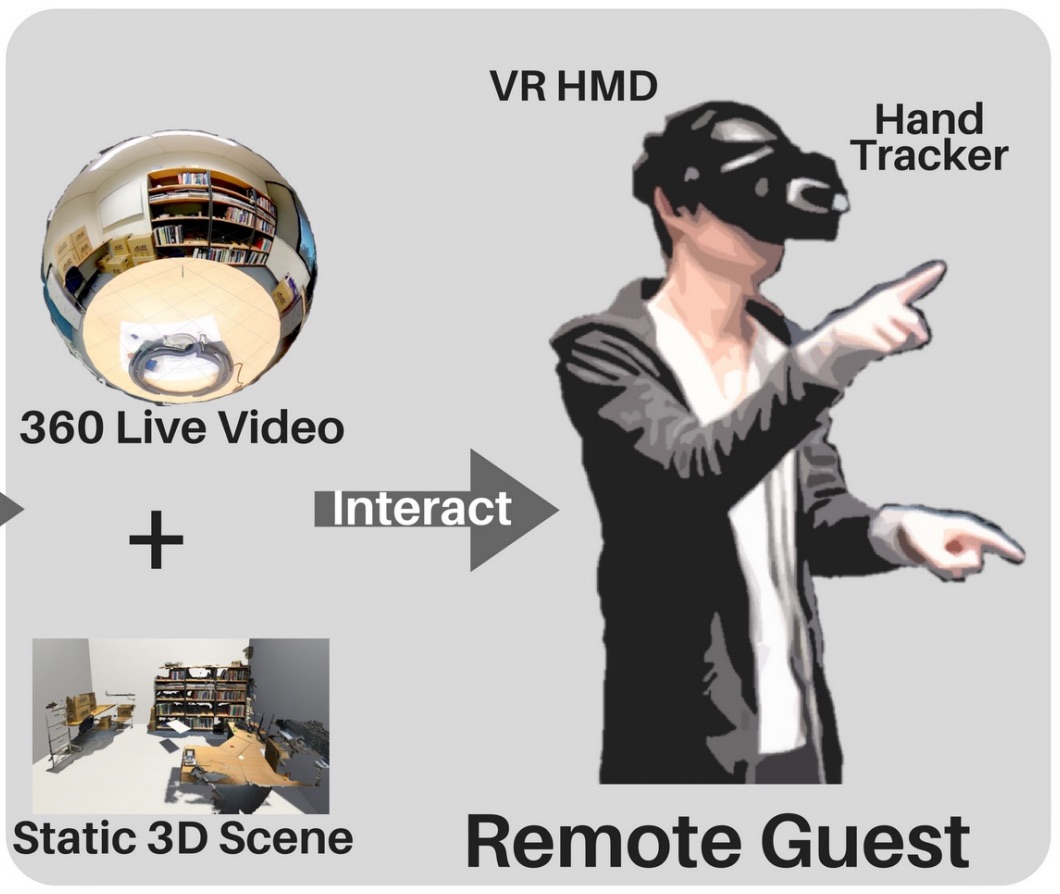

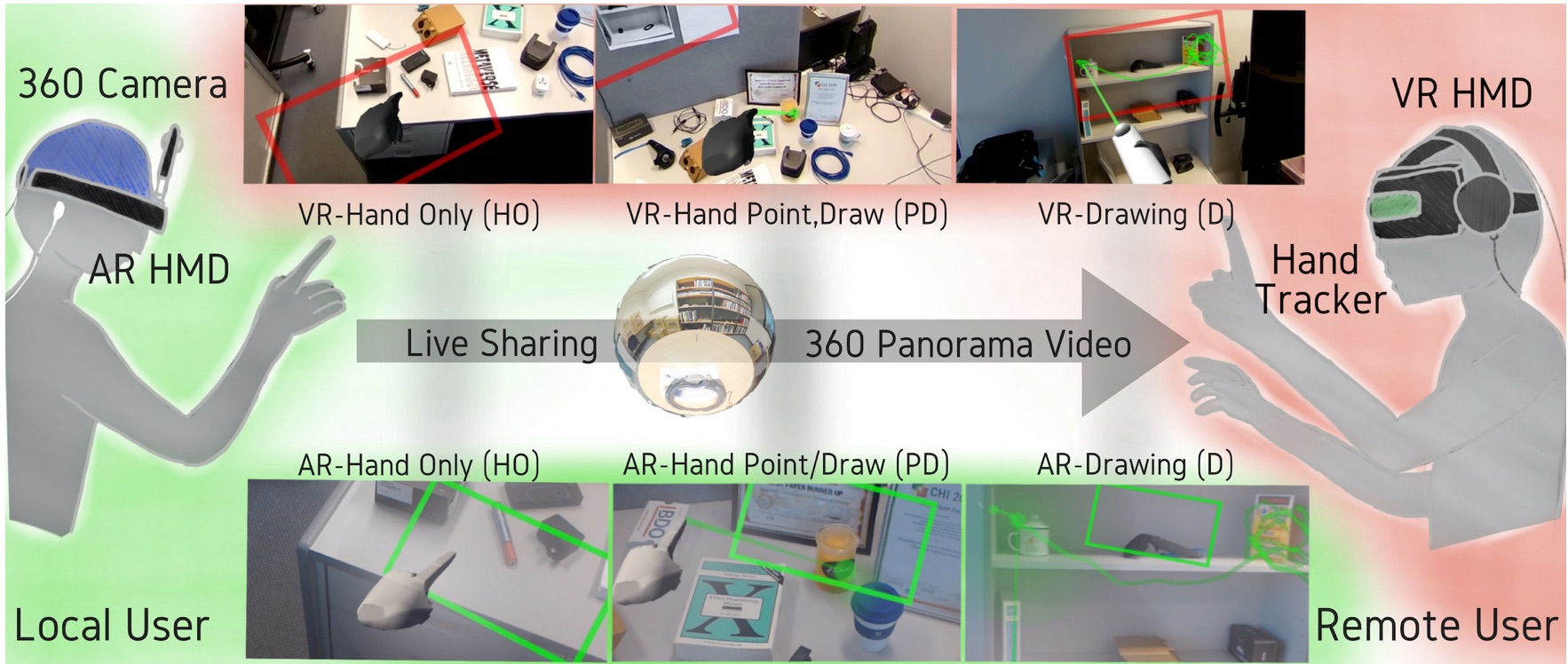

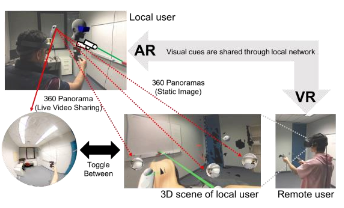

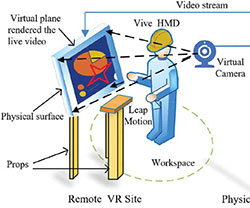

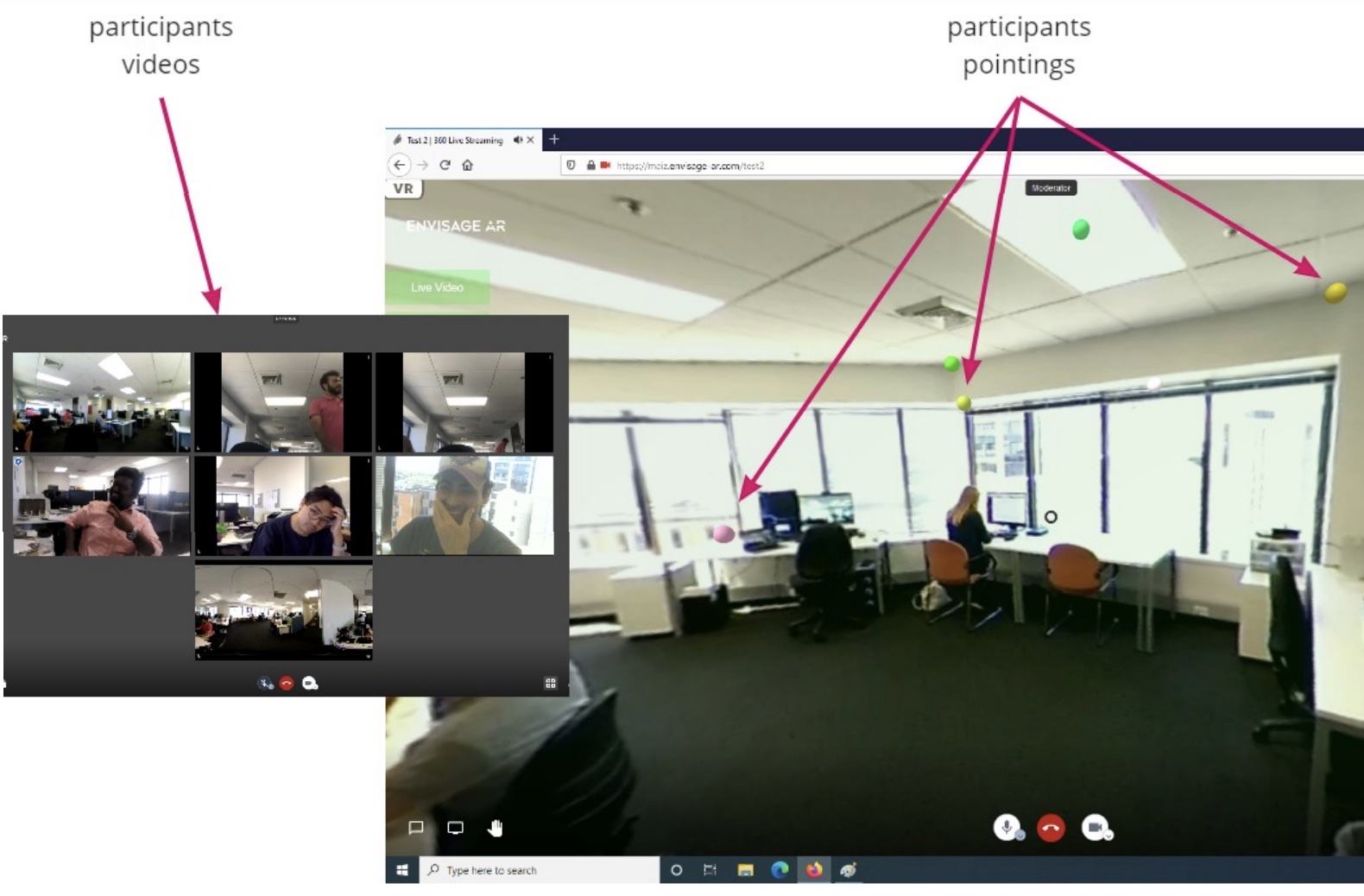

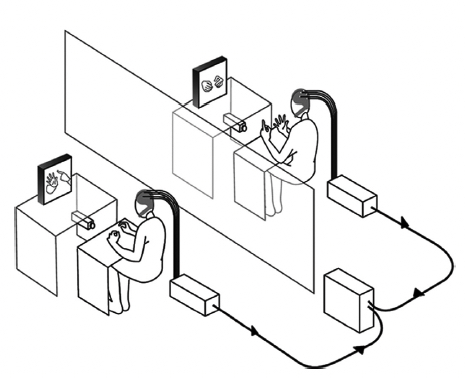

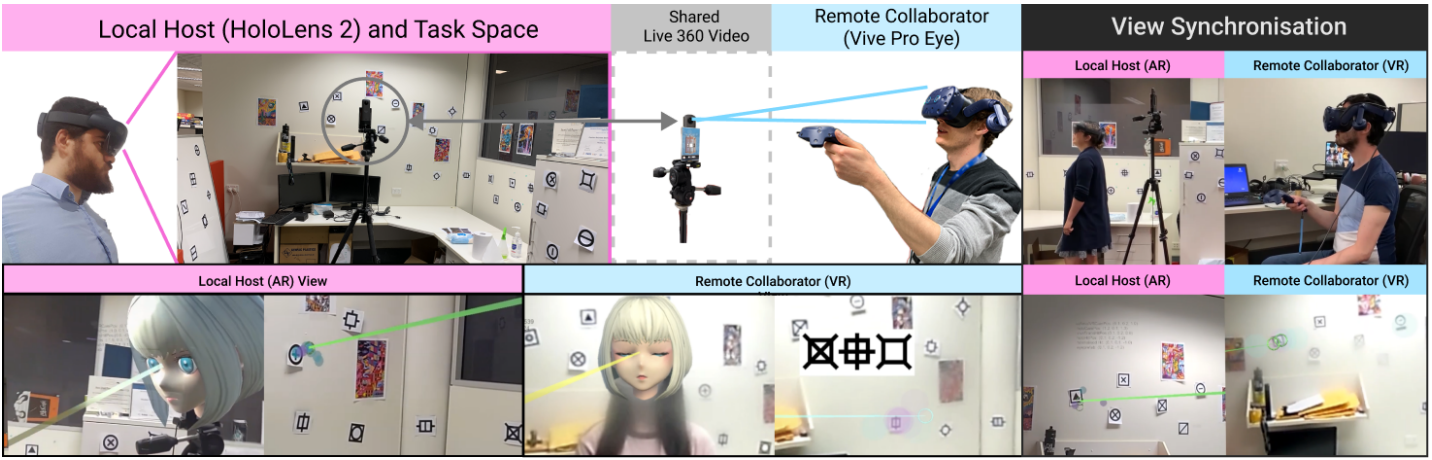

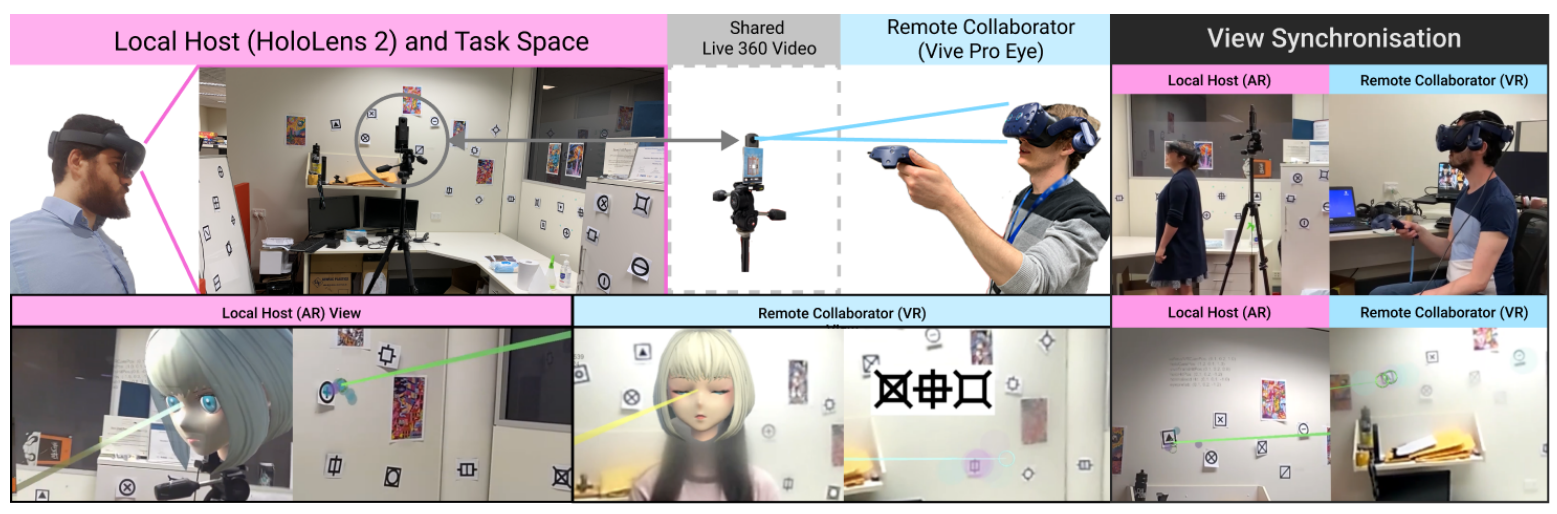

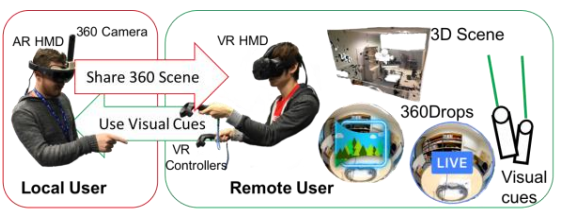

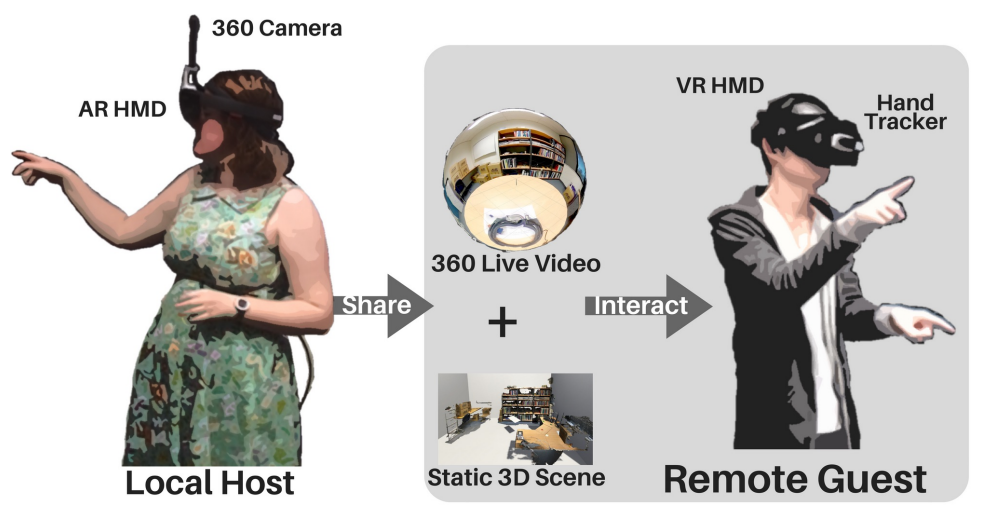

SharedSphere

SharedSphere is a Mixed Reality based remote collaboration system which not only allows sharing a live captured immersive 360 panorama, but also supports enriched two-way communication and collaboration through sharing non-verbal communication cues, such as view awareness cues, drawn annotation, and hand gestures.

-

Augmented Mirrors

Mirrors are physical displays that show our real world in reflection. While physical mirrors simply show what is in the real world scene, with the help of digital technology we can also alter the reality reflected in the mirror. The Augmented Mirrors project explores visualisation and interaction techniques for using mirrors as Augmented Reality (AR) displays. The project focuses especially on using user interface agents to guide user interaction with Augmented Mirrors.

-

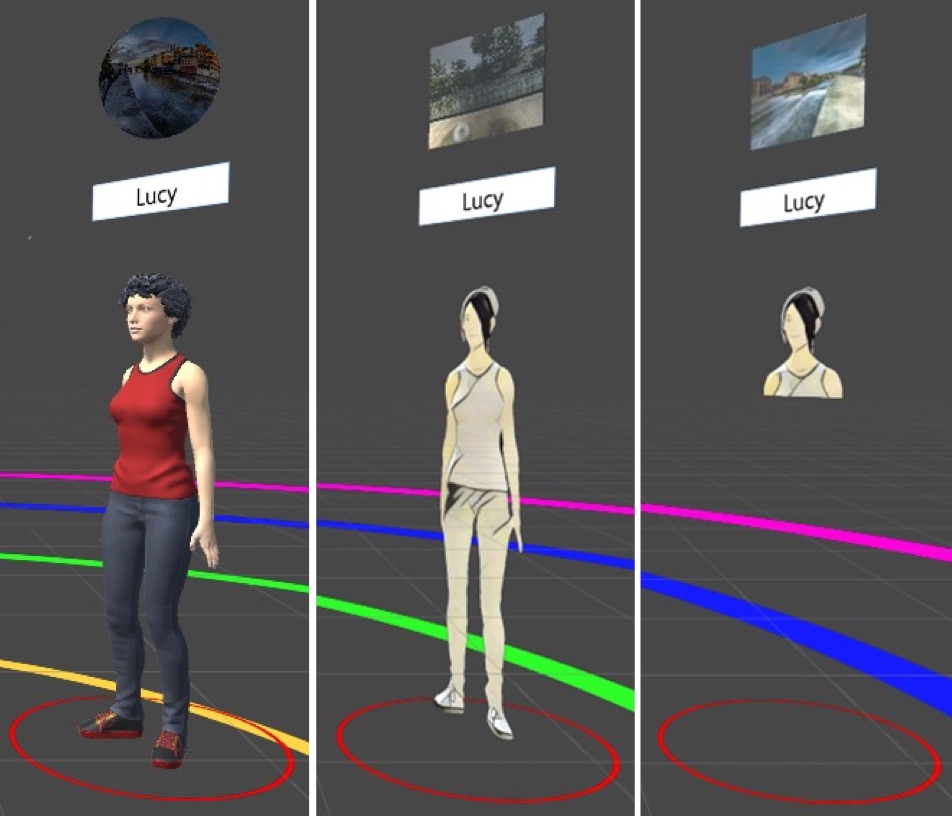

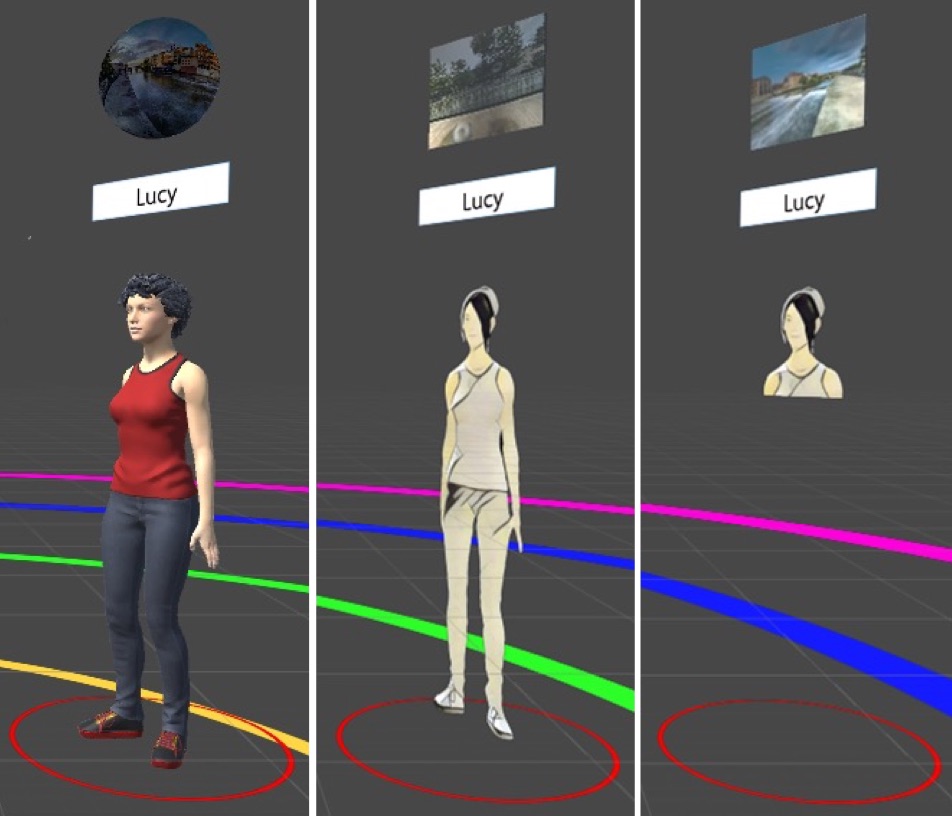

Mini-Me

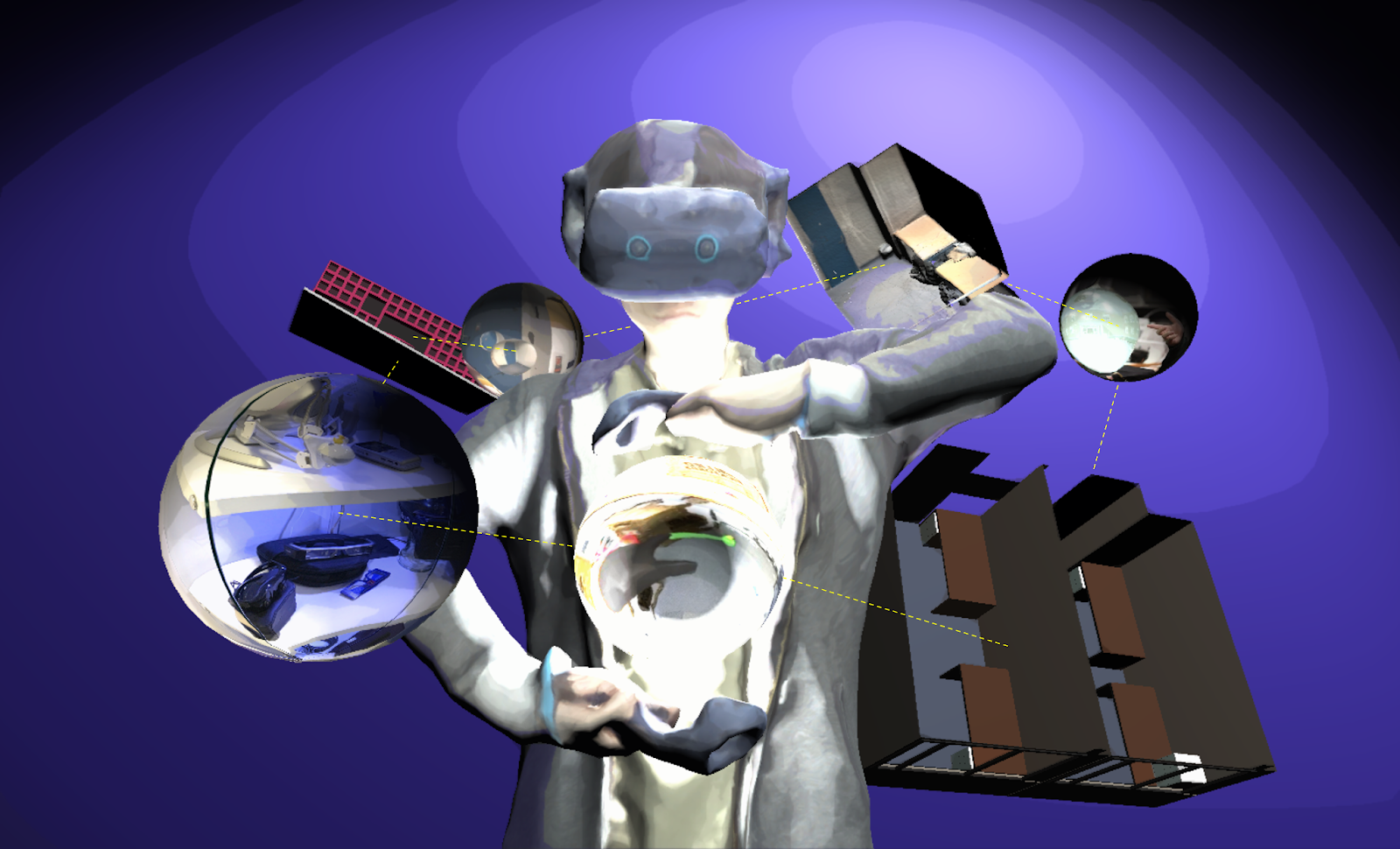

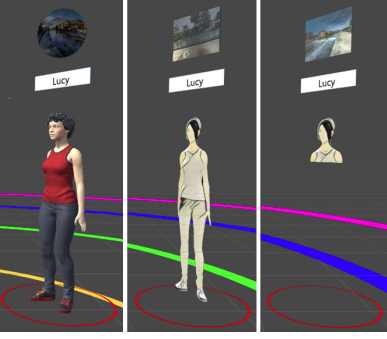

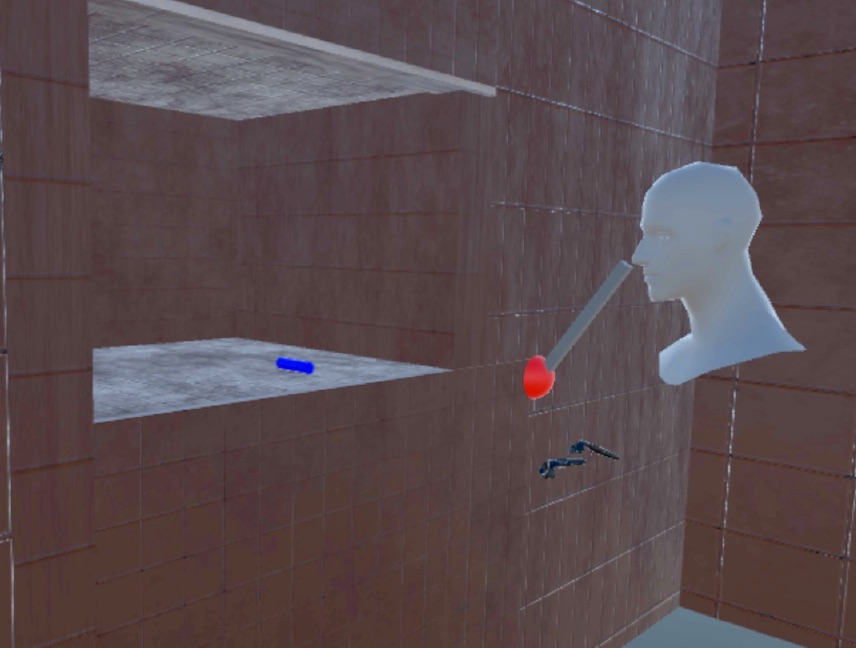

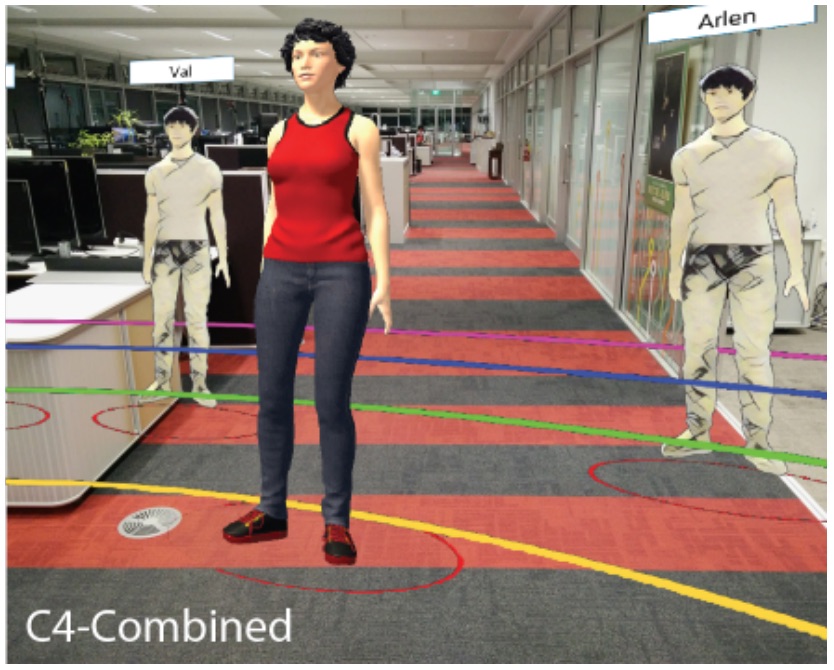

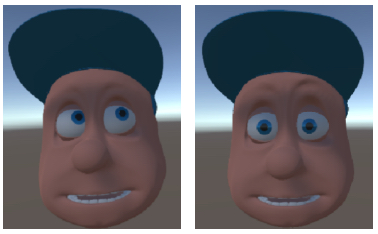

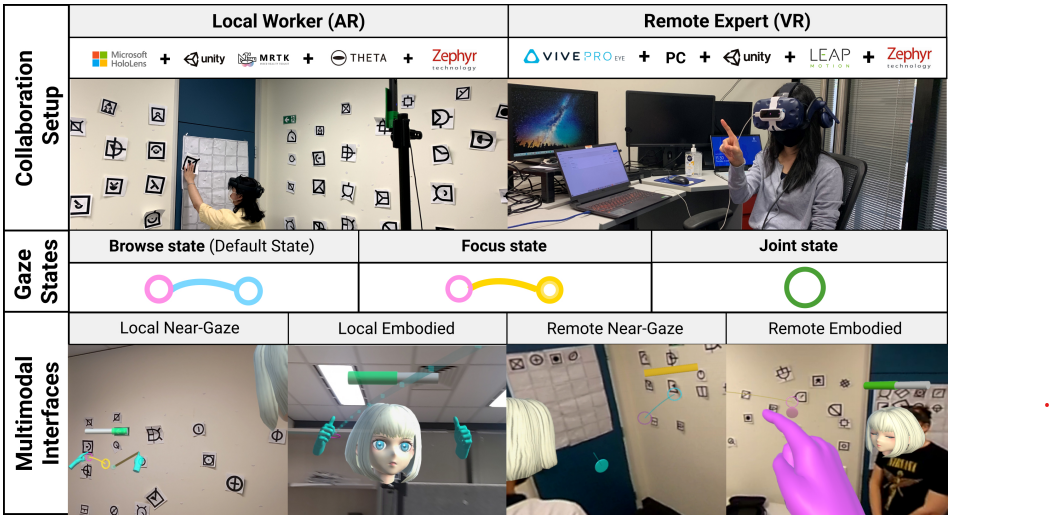

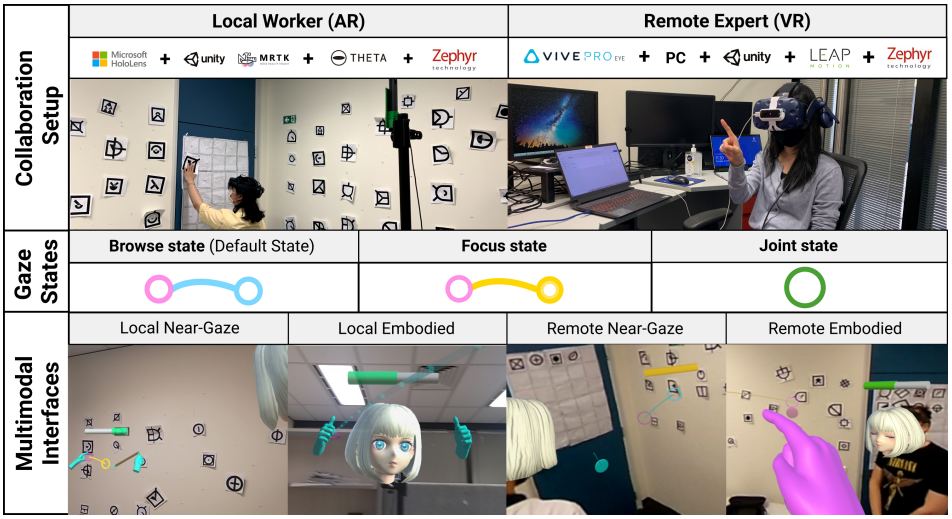

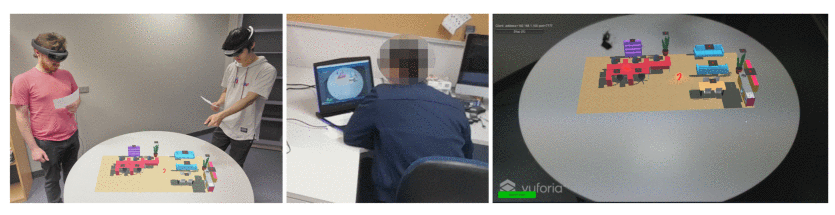

Mini-Me is an adaptive avatar for enhancing Mixed Reality (MR) remote collaboration between a local Augmented Reality (AR) user and a remote Virtual Reality (VR) user. The Mini-Me avatar represents the VR user’s gaze direction and body gestures while it transforms in size and orientation to stay within the AR user’s field of view. We tested Mini-Me in two collaborative scenarios: an asymmetric remote expert in VR assisting a local worker in AR, and a symmetric collaboration in urban planning. We found that the presence of the Mini-Me significantly improved Social Presence and the overall experience of MR collaboration.

-

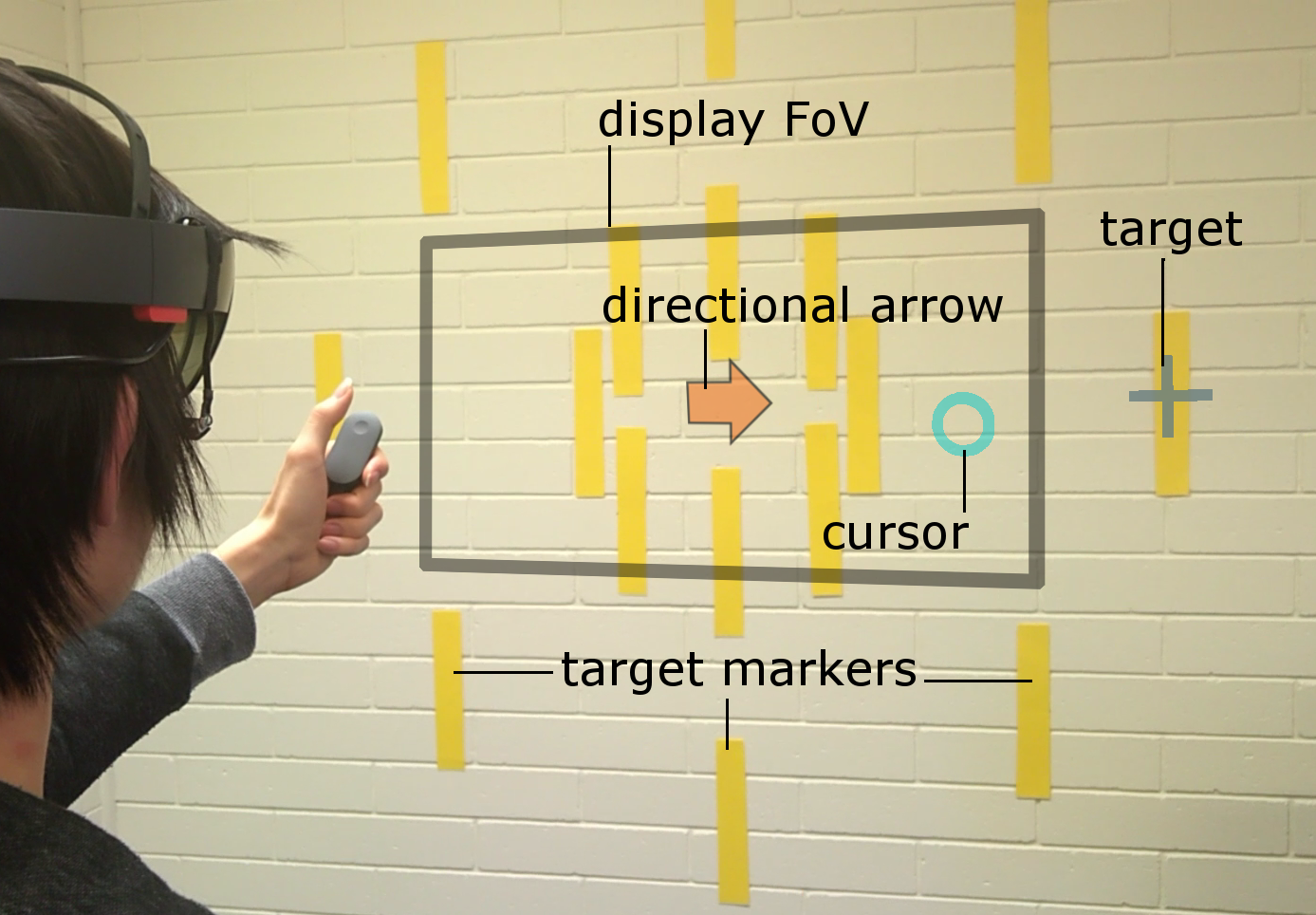

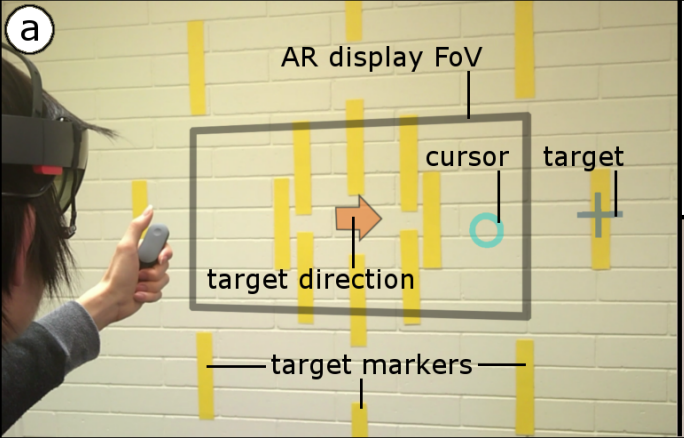

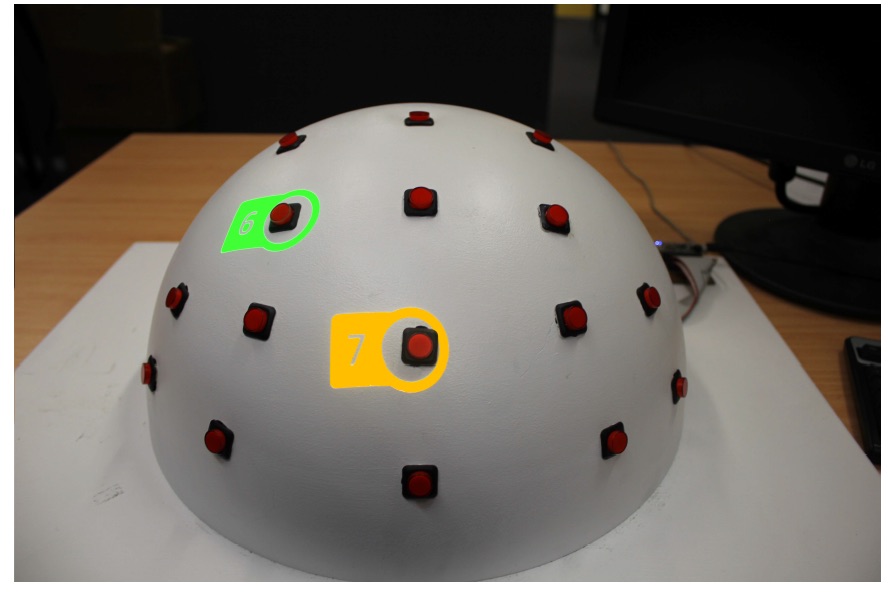

Pinpointing

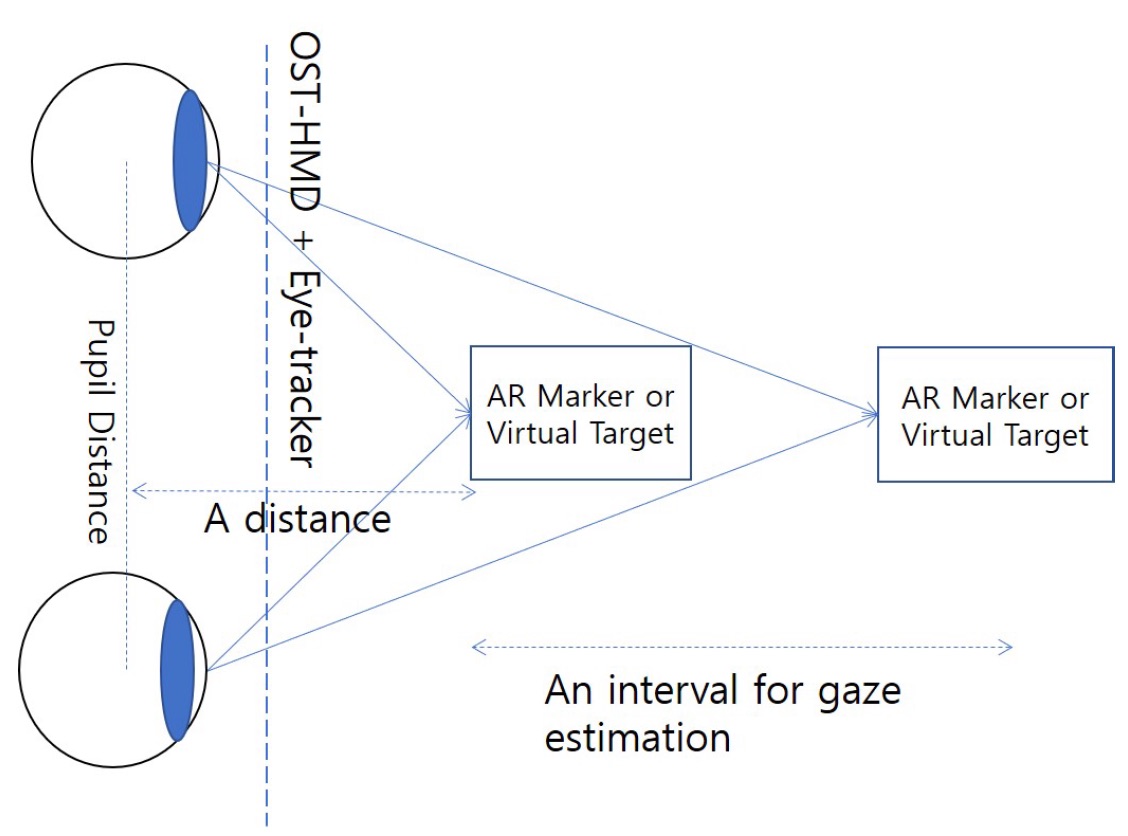

Head and eye movement can be leveraged to improve the user’s interaction repertoire for wearable displays. Head movements are deliberate and accurate, and provide the current state-of-the-art pointing technique. Eye gaze can potentially be faster and more ergonomic, but suffers from low accuracy due to calibration errors and drift of wearable eye-tracking sensors. This work investigates precise, multimodal selection techniques using head motion and eye gaze. A comparison of speed and pointing accuracy reveals the relative merits of each method, including the achievable target size for robust selection. We demonstrate and discuss example applications for augmented reality, including compact menus with deep structure, and a proof-of-concept method for on-line correction of calibration drift.

-

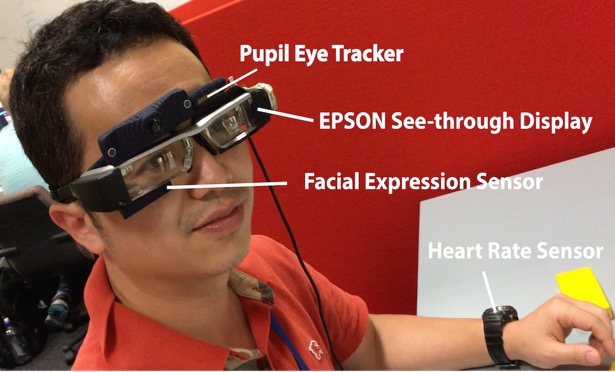

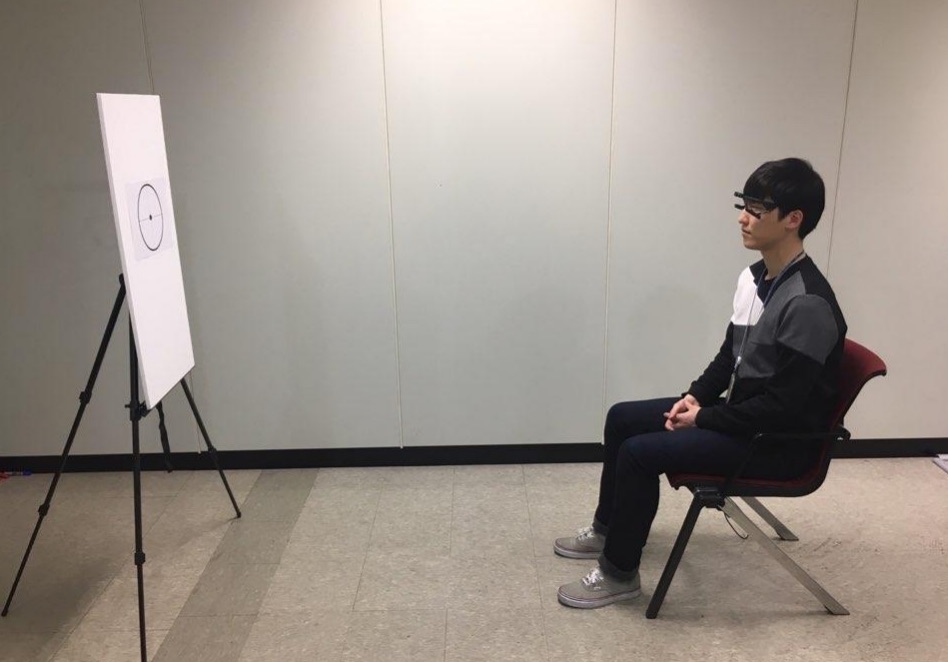

Empathy Glasses

We have been developing a remote collaboration system with Empathy Glasses, a head-worn display designed to create a stronger feeling of empathy between remote collaborators. To do this, we combined a head-mounted see-through display with a facial expression recognition system, a heart rate sensor, and an eye tracker. The goal is to enable a remote person to see and hear from another person's perspective and to understand how they are feeling. In this way, the system shares non-verbal cues that could help increase empathy between remote collaborators.

-

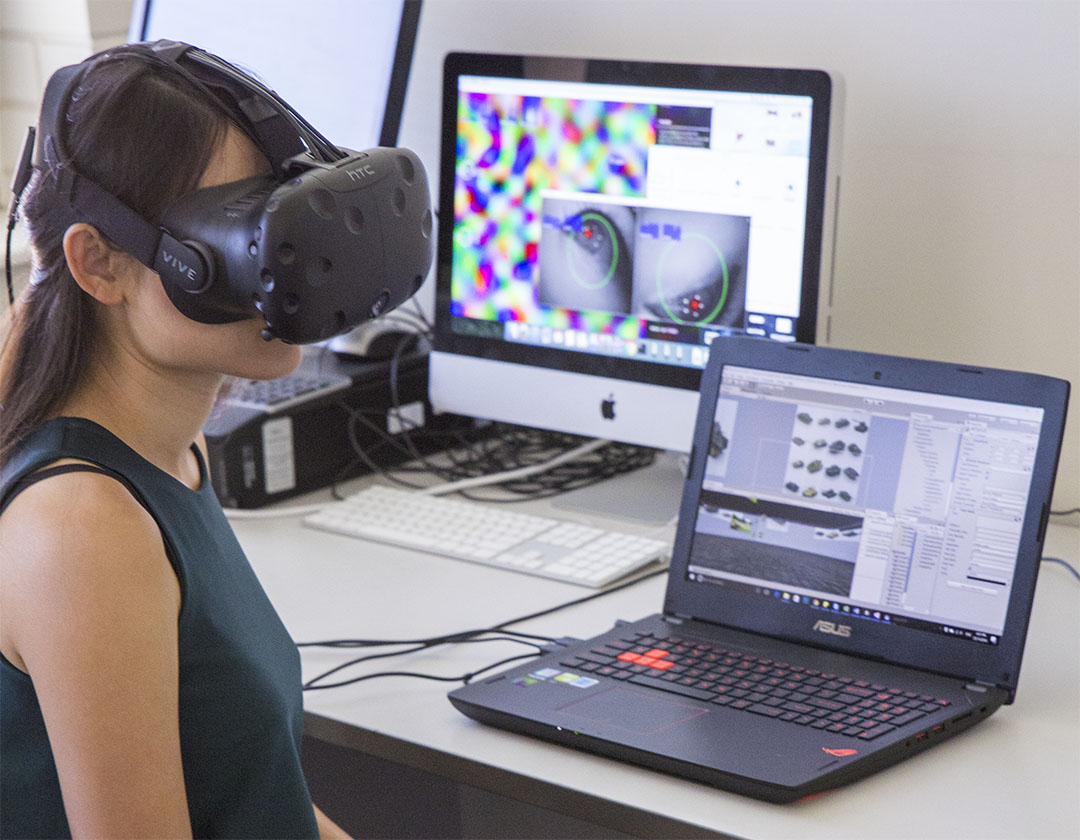

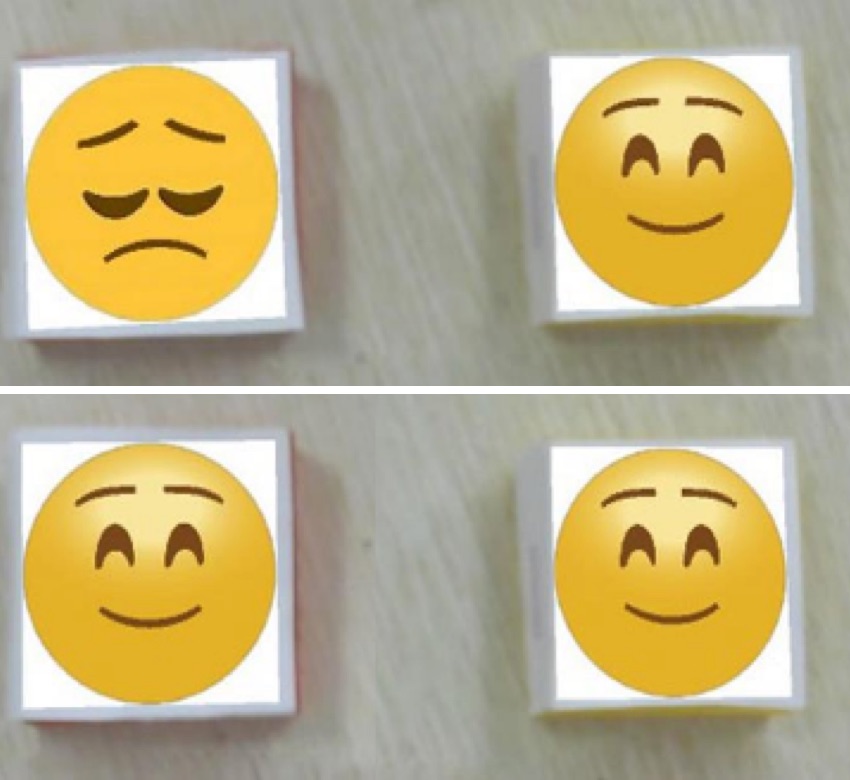

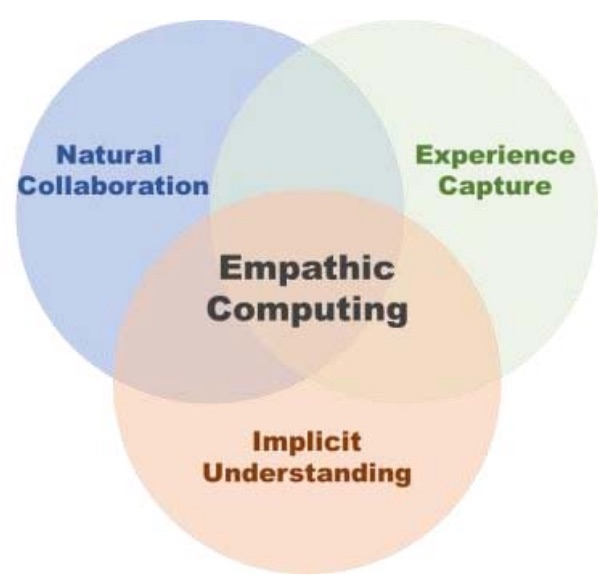

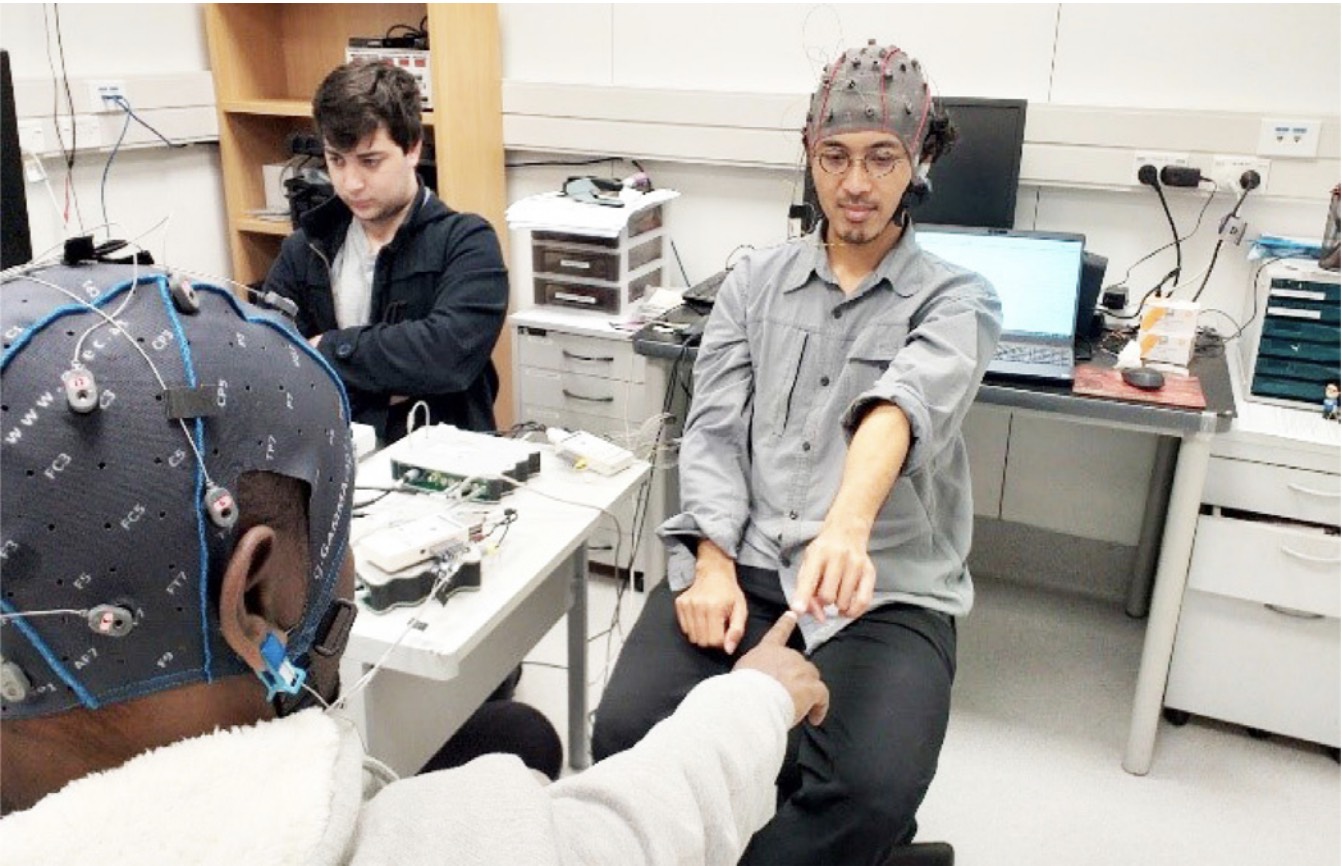

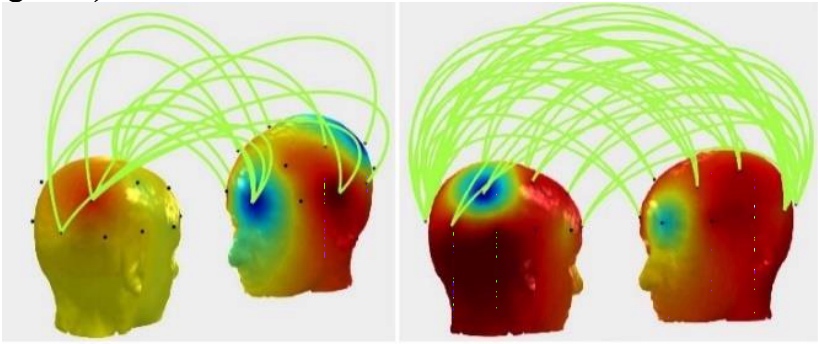

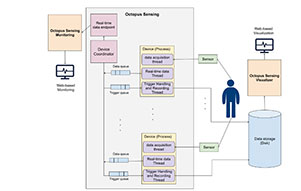

Empathy in Virtual Reality

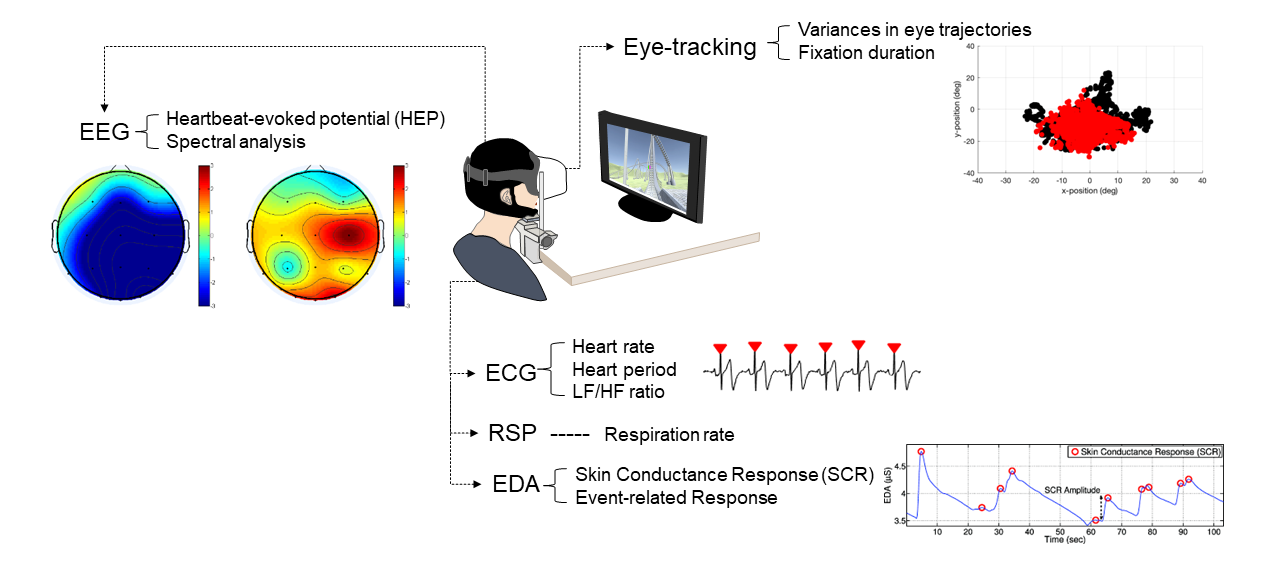

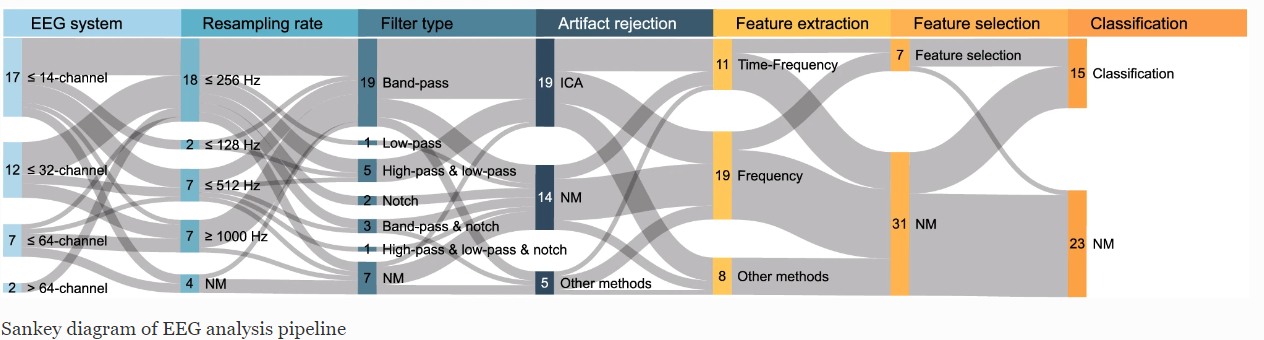

Virtual reality (VR) interfaces are an influential medium for triggering emotional changes in humans. However, there is little research on making users of VR interfaces aware of their own emotional state or, in collaborative interfaces, of one another's. In this project, through a series of system developments and user evaluations, we are investigating how physiological data such as heart rate, galvanic skin response, pupil dilation, and EEG can be used as a medium to communicate emotional states either to oneself (single-user interfaces) or to a collaborator (collaborative interfaces). The overarching goal is to make VR environments more empathetic and collaborators more aware of each other's emotional state.

-

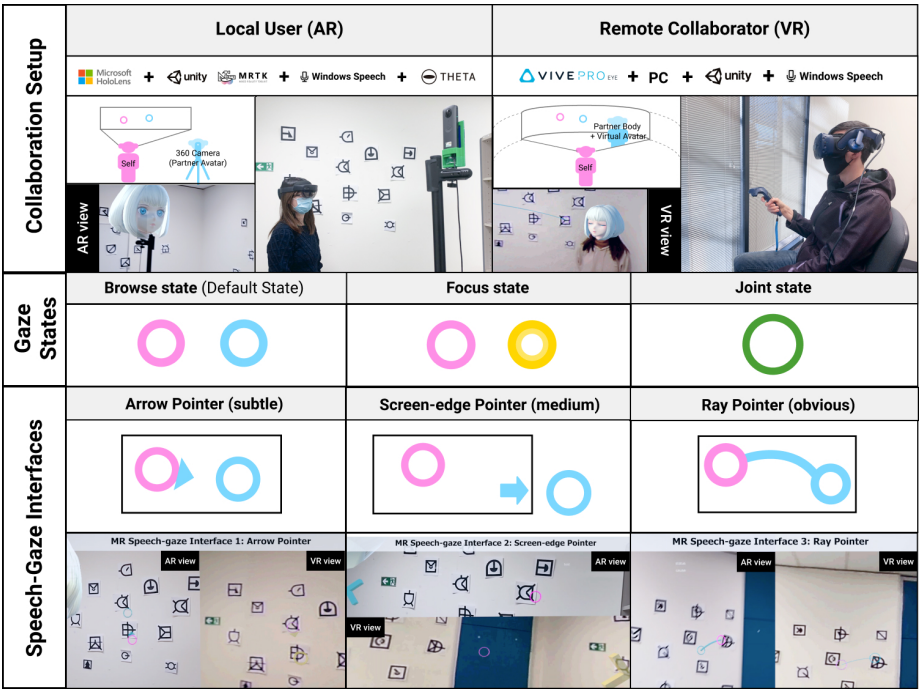

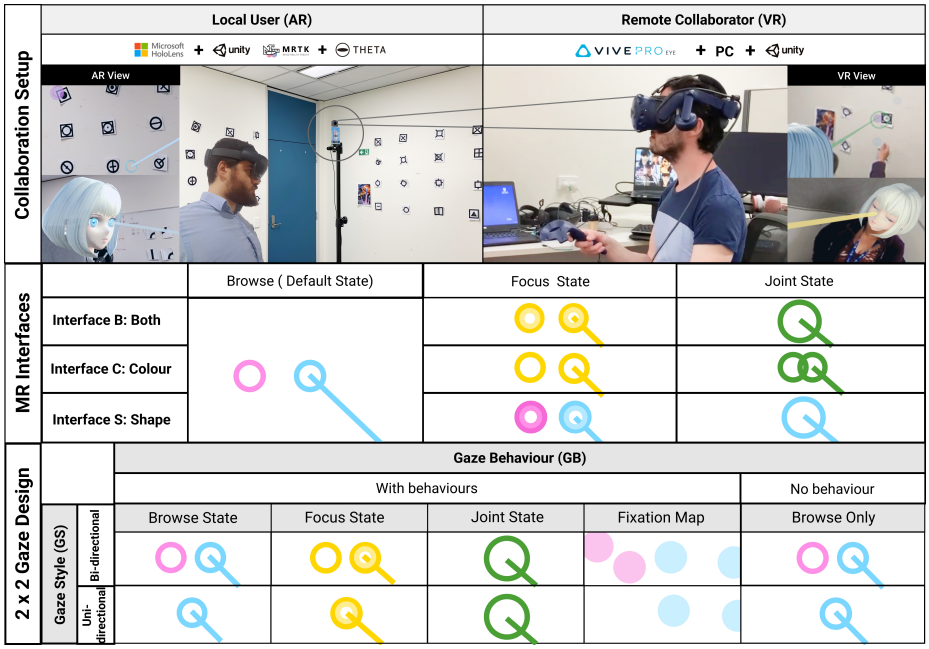

Sharing Gesture and Gaze Cues for Enhancing MR Collaboration

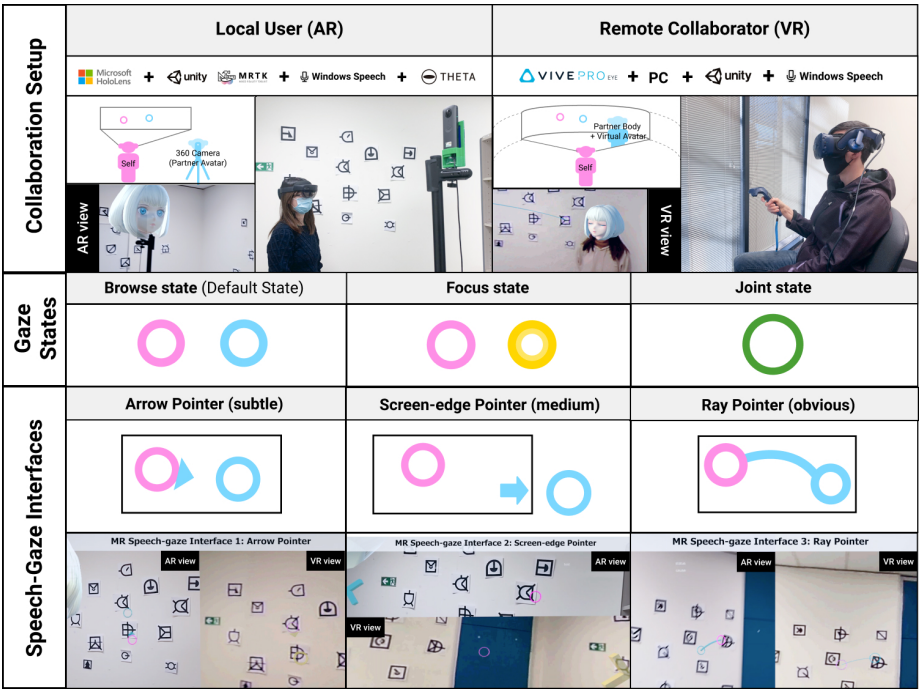

This research focuses on visualizing shared gaze cues, designing interfaces for collaborative experience, and incorporating multimodal interaction techniques and physiological cues to support empathic Mixed Reality (MR) remote collaboration using HoloLens 2, Vive Pro Eye, Meta Pro, HP Omnicept, Theta V 360 camera, Windows Speech Recognition, Leap Motion hand tracking, and Zephyr/Shimmer Sensing technologies.

-

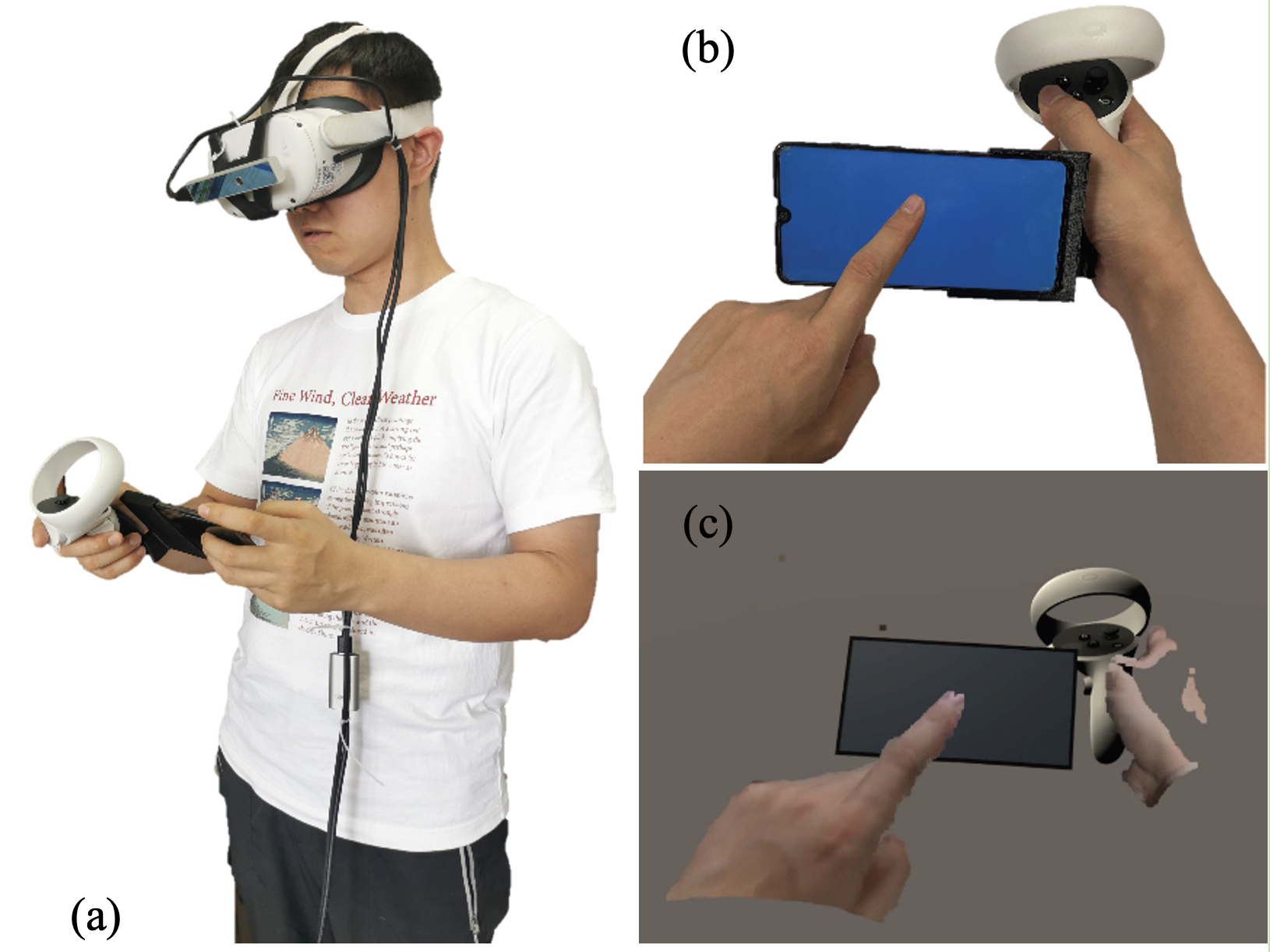

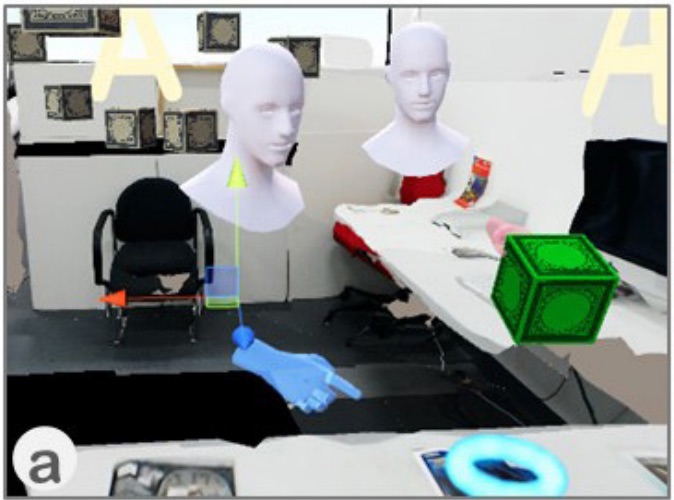

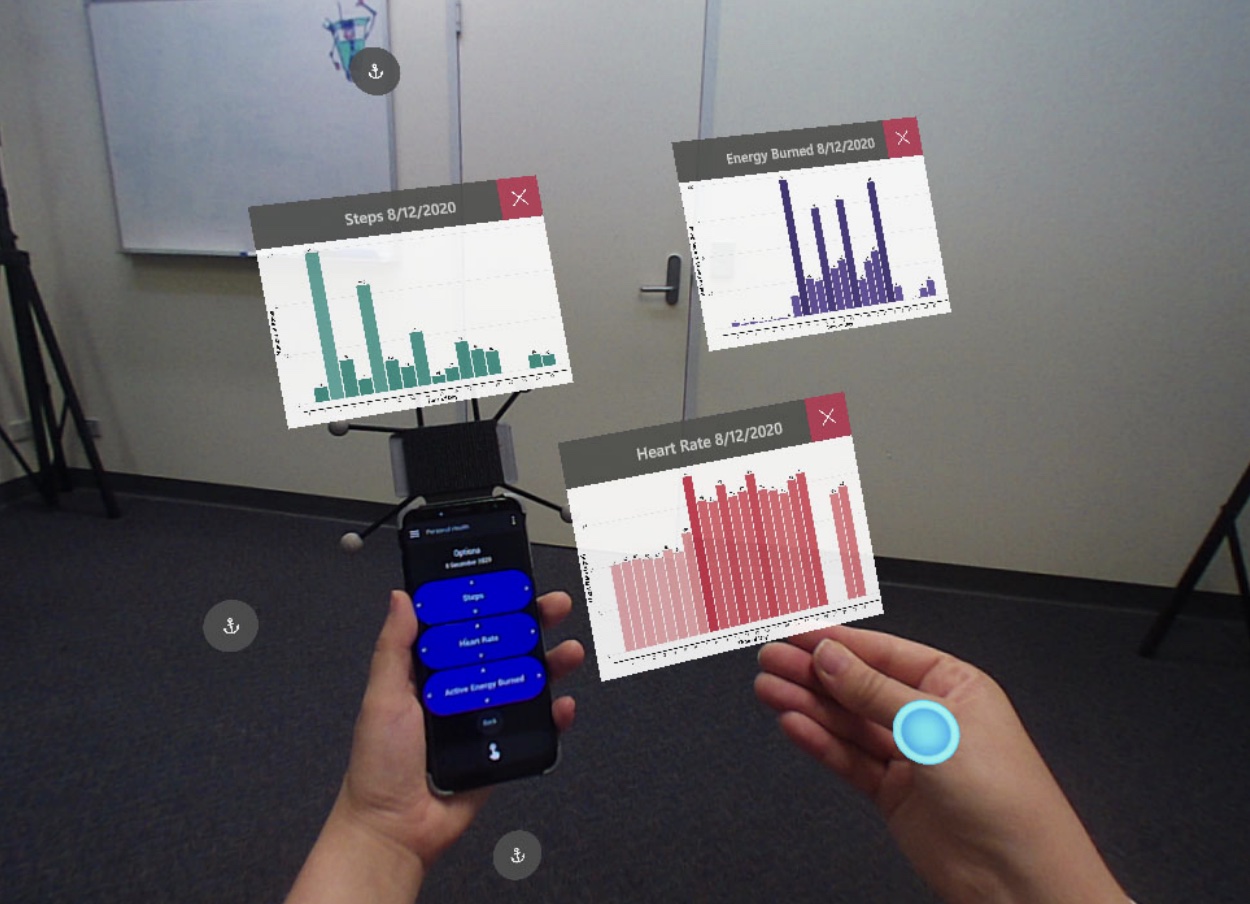

Using a Mobile Phone in VR

Virtual Reality (VR) Head-Mounted Display (HMD) technology immerses a user in a computer-generated virtual environment. However, a VR HMD also blocks the user's view of their physical surroundings, and so prevents them from using their mobile phone in a natural manner. In this project, we present a novel Augmented Virtuality (AV) interface that enables people to naturally interact with a mobile phone in real time in a virtual environment. The system allows the user to wear a VR HMD while seeing their 3D hands captured by a depth sensor and rendered in different styles, and enables the user to operate a virtual mobile phone aligned with their real phone.

-

Show Me Around

This project introduces an immersive way to experience a conference call by using a 360° camera to live stream a person’s surroundings to remote viewers. Viewers can freely look around the host video and get a better understanding of the sender’s surroundings. Viewers can also observe where the other participants are looking, allowing them to better understand the conversation and what people are paying attention to. In a user study of the system, people found it much more immersive than a traditional video conferencing call and reported that they felt transported to a remote location. Possible applications of this system include virtual tourism, education, industrial monitoring, entertainment, and more.

-

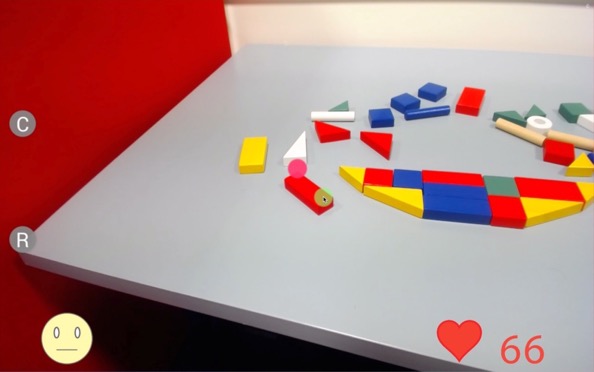

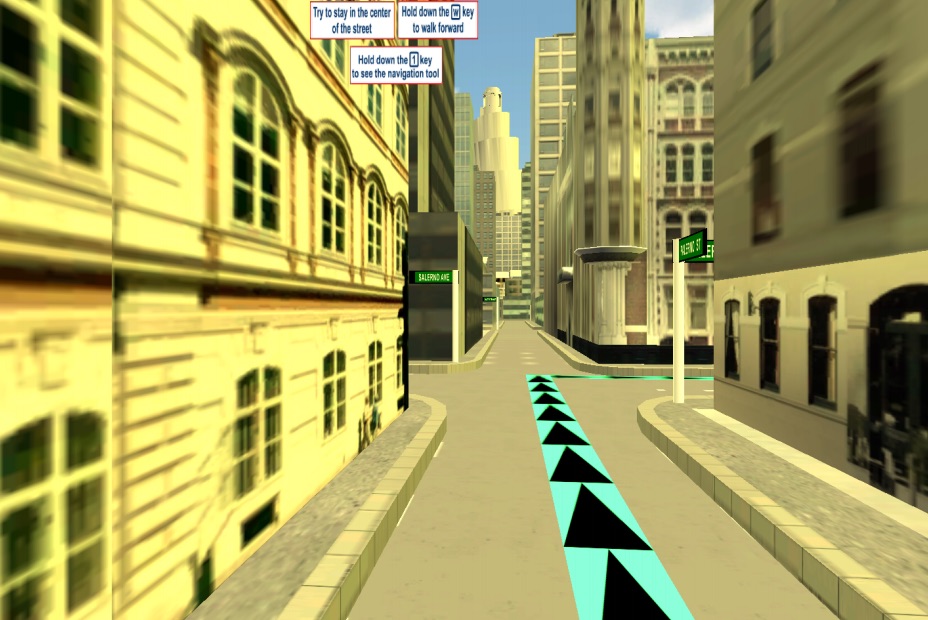

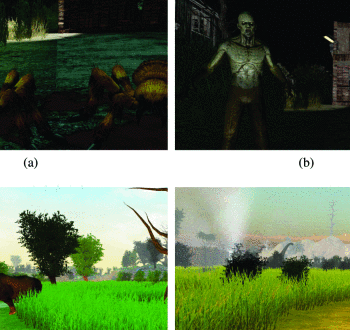

TBI Cafe

Over 36,000 Kiwis experience Traumatic Brain Injury (TBI) per year. TBI patients often experience severe cognitive fatigue, which impairs their ability to cope well in public/social settings. Rehabilitation can involve taking people into social settings with a therapist so that they can relearn how to interact in these environments. However, this is a time-consuming, expensive and difficult process. To address this, we've created the TBI Cafe, a VR tool designed to help TBI patients cope with their injury and practice interacting in a cafe. In this application, people in VR practice ordering food and drink while interacting with virtual characters. Different types of distractions are introduced, such as a crying baby and loud conversations, which are designed to make the experience more stressful, and let the user practice managing stressful situations. Clinical trials with the software are currently underway.

-

Haptic Hongi

This project explores whether XR technologies can help overcome intercultural discomfort by using Augmented Reality (AR) and haptic feedback to present a traditional Māori greeting. Using a HoloLens 2 AR headset, guests see a pre-recorded volumetric virtual video of Tania, a Māori woman, who greets them in a re-imagined, contemporary first encounter between indigenous Māori and newcomers. The visitors, manuhiri, consider their response in the absence of usual social pressures. After a brief introduction, the virtual Tania slowly leans forward, inviting the visitor to ‘hongi’, a pressing together of noses and foreheads in a gesture symbolising “...peace and oneness of thought, purpose, desire, and hope”. This is felt as a haptic response delivered via a custom-made actuator built into the visitor's AR headset.

-

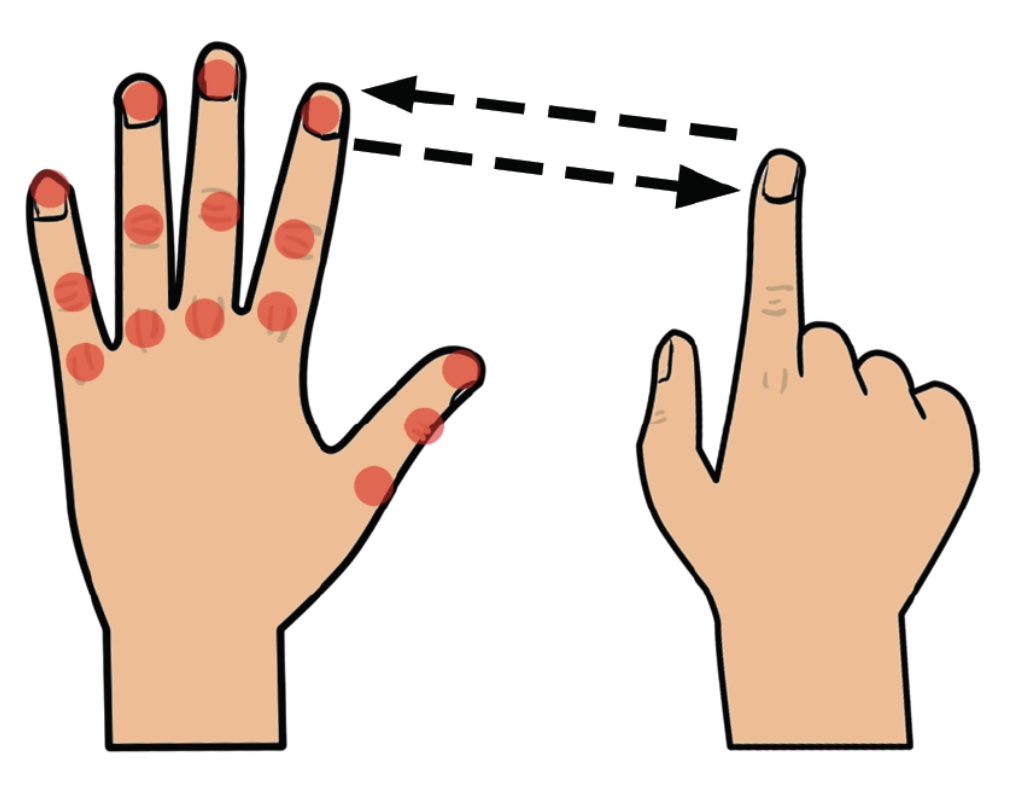

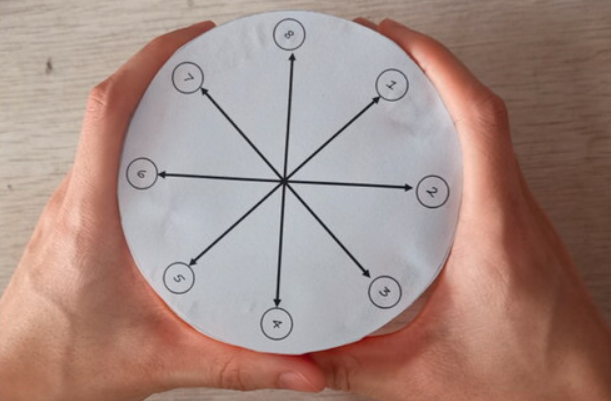

RadarHand

RadarHand is a wrist-worn wearable system that uses radar sensing to detect on-skin proprioceptive hand gestures, making it easy to interact with simple finger motions. Radar has the advantages of being robust, private and small, of penetrating materials, and of requiring low computation costs. In this project, we first evaluated the proprioceptive nature of the back of the hand and found that the thumb is the most proprioceptive of all the finger joints, followed by the index finger, middle finger, ring finger and pinky finger. This helped determine the types of gestures most suitable for the system. Next, we trained deep-learning models for gesture classification. Out of 27 gesture group possibilities, we achieved 92% accuracy for a generic set of seven gestures and 93% accuracy for the proprioceptive set of eight gestures. We also evaluated RadarHand's performance in real time and achieved an accuracy of between 74% and 91%, depending on whether the system or the user initiates the gesture first. This research could contribute to a new generation of radar-based interfaces that allow people to interact with computers in a more natural way.

-

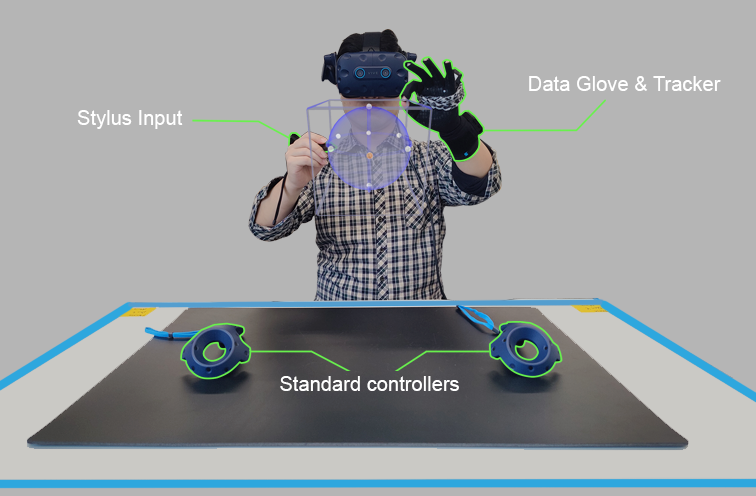

Asymmetric Interaction for VR sketching

This project explores how tool-based asymmetric VR interfaces can be used by artists to create immersive artwork more effectively. Most VR interfaces use two input methods of the same type, such as two handheld controllers or two bare-hand gestures. However, it is common for artists to use different tools in each hand, such as a pencil and sketch pad. The research involves developing interaction methods that use a different input method in each hand, such as a stylus and gesture. Using this interface, artists can rapidly sketch their designs in VR. User studies are being conducted to compare asymmetric and symmetric interfaces to see which provides the best performance and which users prefer.

-

Detecting the Onset of Cybersickness using Physiological Cues

In this project we explore whether the onset of cybersickness can be detected by considering multiple physiological signals simultaneously from users in VR. We are particularly interested in physiological cues that can be collected from the current generation of VR HMDs, such as eye gaze and heart rate. We are also interested in exploring other physiological cues that could be available in the near future in VR HMDs, such as GSR and EEG.

-

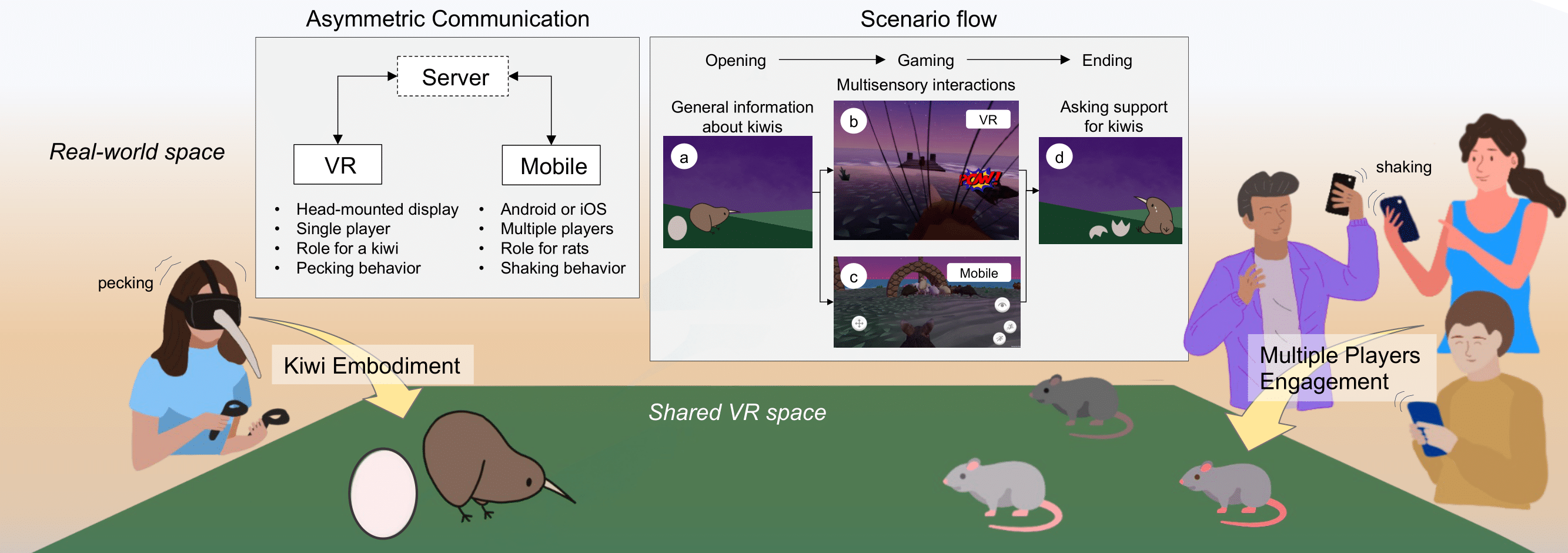

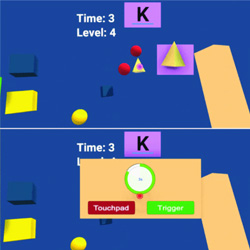

KiwiRescuer: A new interactive exhibition using an asymmetric interaction

This research demo aims to address the problem of passive and dull museum exhibition experiences that many audiences still encounter. The current approaches to exhibitions are typically less interactive and mostly provide single sensory information (e.g., visual, auditory, or haptic) in a one-to-one experience.

-

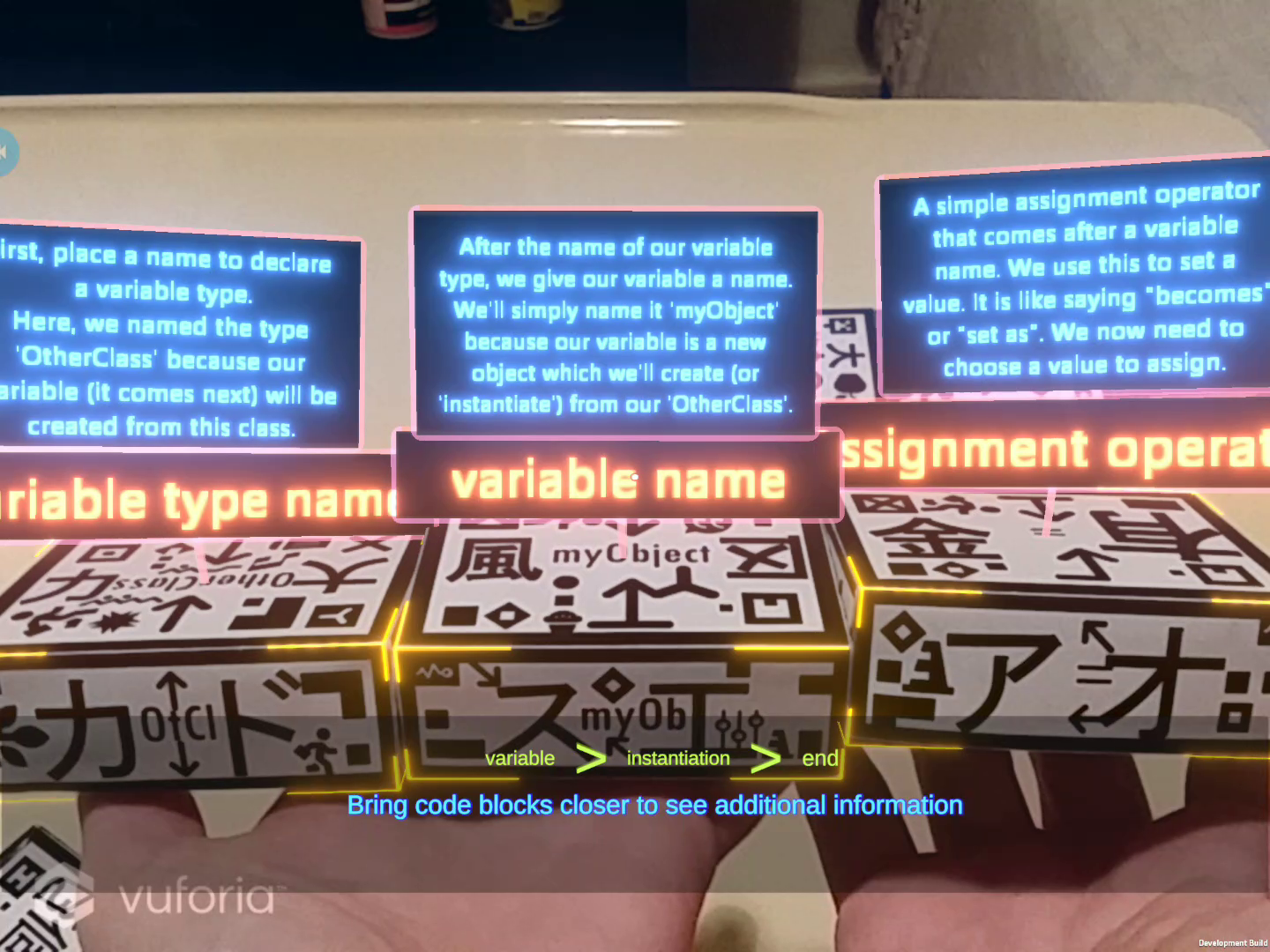

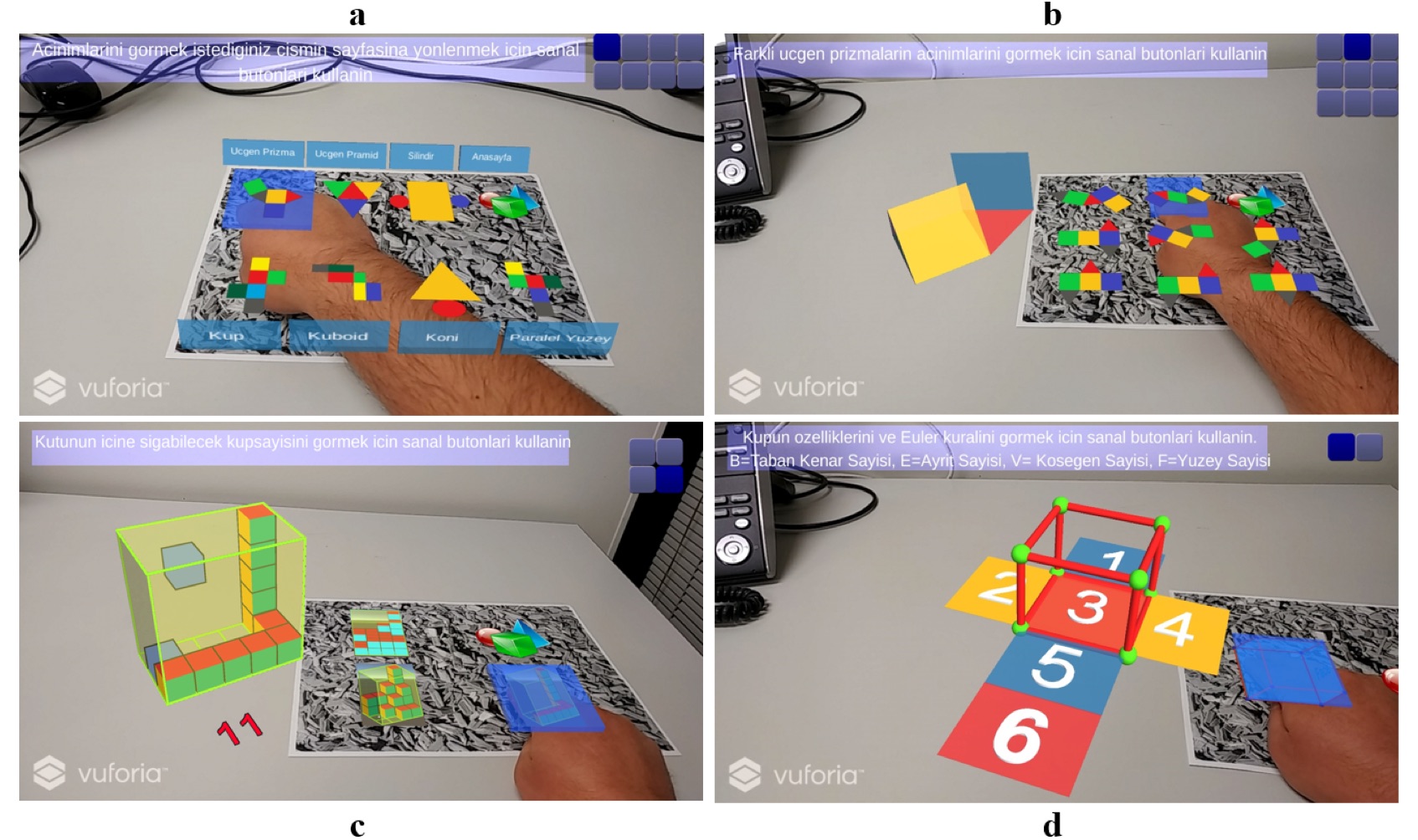

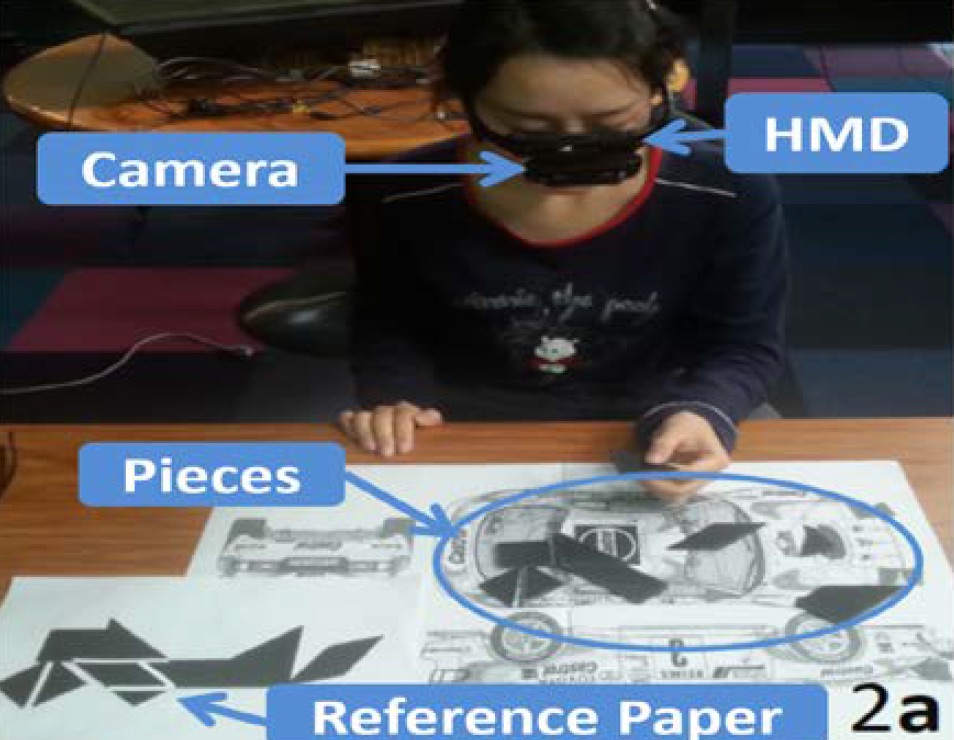

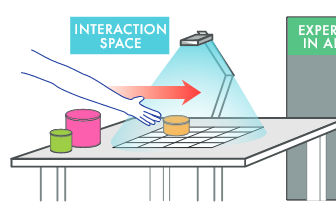

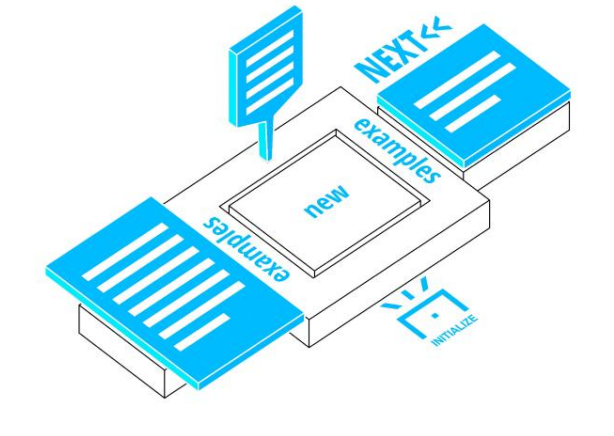

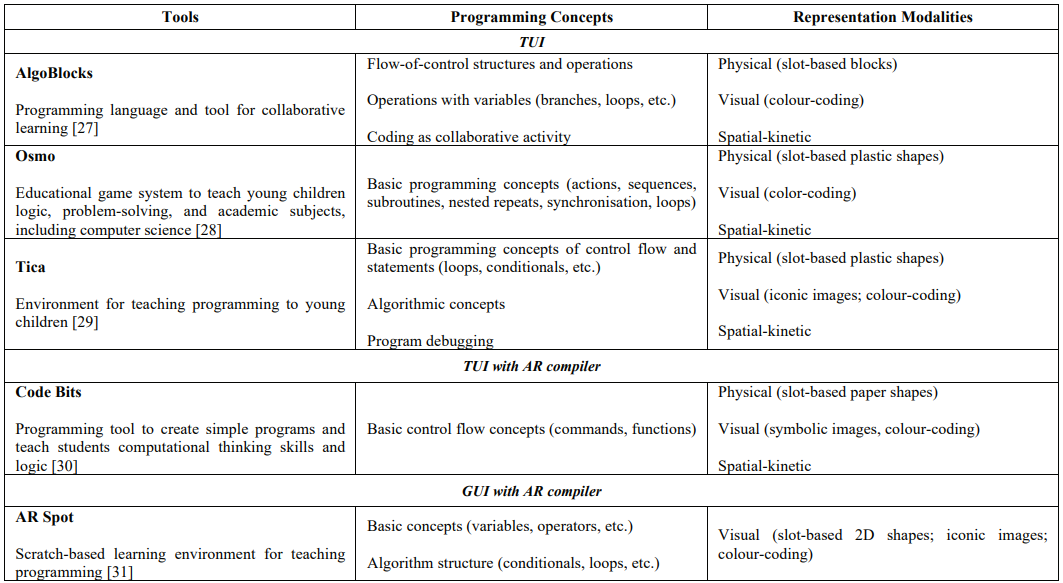

Tangible Augmented Reality for Programming Learning

This project explores how tangible Augmented Reality (AR) can be used to teach computer programming. We have developed TARPLE, a Tangible Augmented Reality Programming Learning Environment, and are studying its efficacy for teaching text-based programming languages to novice learners. TARPLE uses physical blocks to represent programming functions and overlays virtual imagery on the blocks to show the programming code. Users can arrange the blocks by moving them with their hands, and see the AR content either through the Microsoft HoloLens 2 AR display or a handheld tablet. This research project expands upon the broader question of educational AR as well as on the questions of tangible programming languages and tangible learning mediums. When supported by the embodied learning and natural interaction affordances of AR, physical objects may hold the key to developing fundamental knowledge of abstract, complex subjects for younger learners in particular. It may also serve as a powerful future tool in advancing early computational thinking skills in novices. Evaluation of such learning environments addresses the hypothesis that hybrid tangible AR mediums are able to support an extended learning taxonomy both within the classroom and without.

-

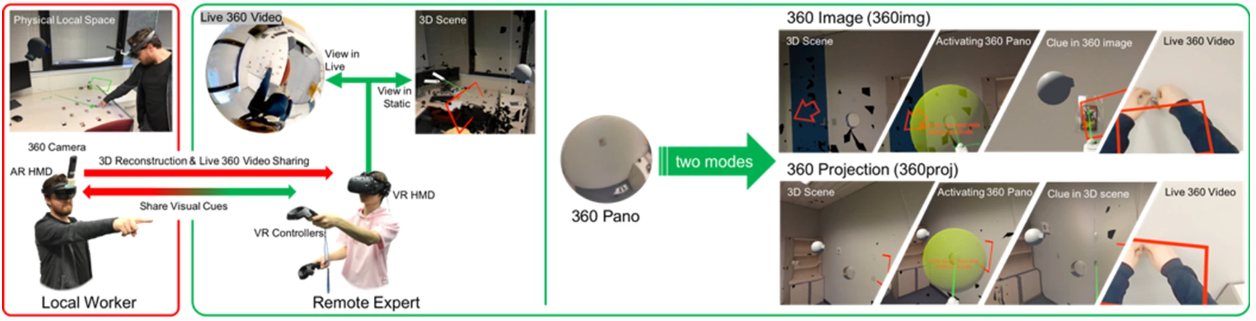

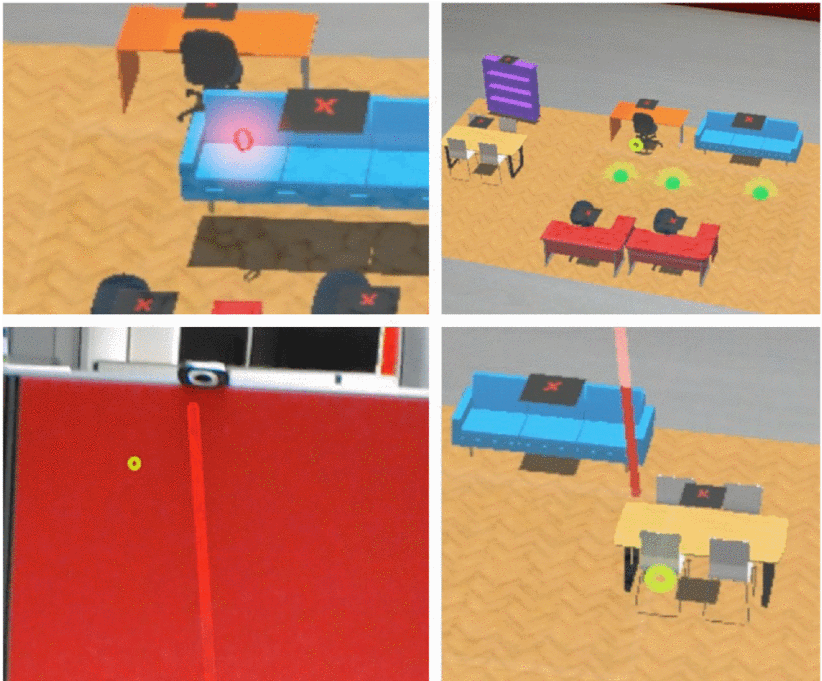

Using 3D Spaces and 360 Video Content for Collaboration

This project explores techniques to enhance collaborative experience in Mixed Reality environments using 3D reconstructions, 360 videos and 2D images. Previous research has shown that 360 video can provide a high resolution immersive visual space for collaboration, but little spatial information. Conversely, 3D scanned environments can provide high quality spatial cues, but with poor visual resolution. This project combines both approaches, enabling users to switch between a 3D view or 360 video of a collaborative space. In this hybrid interface, users can pick the representation of space best suited to the needs of the collaborative task. The project seeks to provide design guidelines for collaboration systems to enable empathic collaboration by sharing visual cues and environments across time and space.

-

MPConnect: A Mixed Presence Mixed Reality System

This project explores how a Mixed Presence Mixed Reality System can enhance remote collaboration. Collaborative Mixed Reality (MR) is a popular area of research, but most work has focused on one-to-one systems where either both collaborators are co-located or the collaborators are remote from one another. For example, remote users might collaborate in a shared Virtual Reality (VR) system, or a local worker might use an Augmented Reality (AR) display to connect with a remote expert to help them complete a task.

-

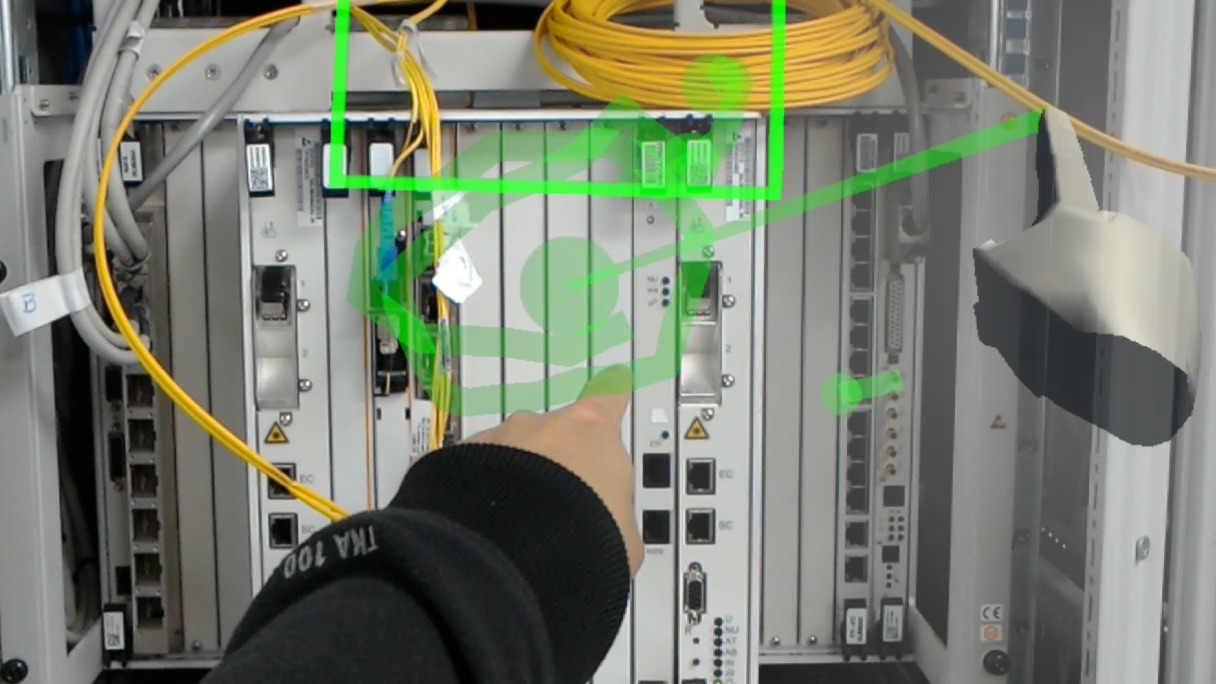

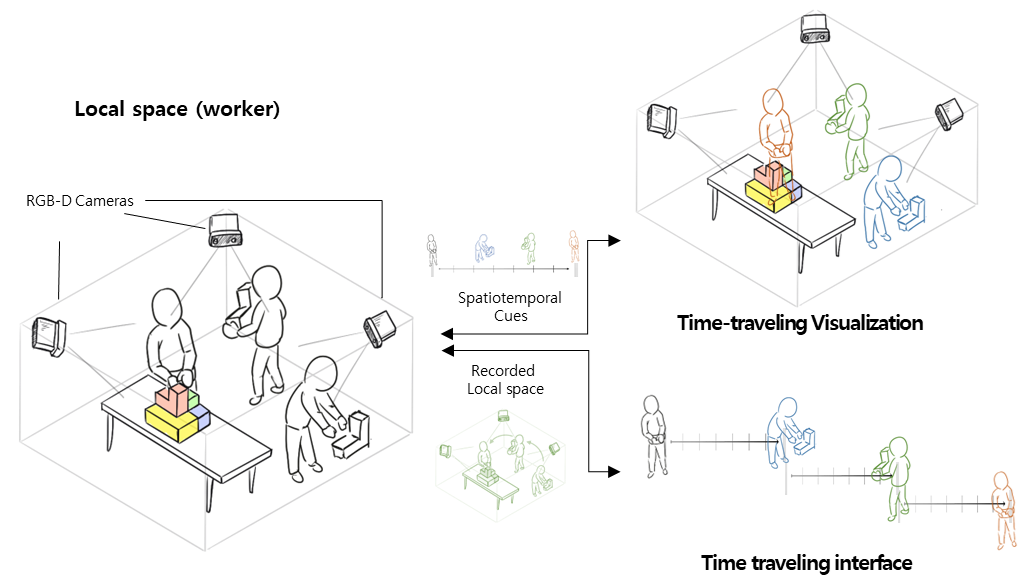

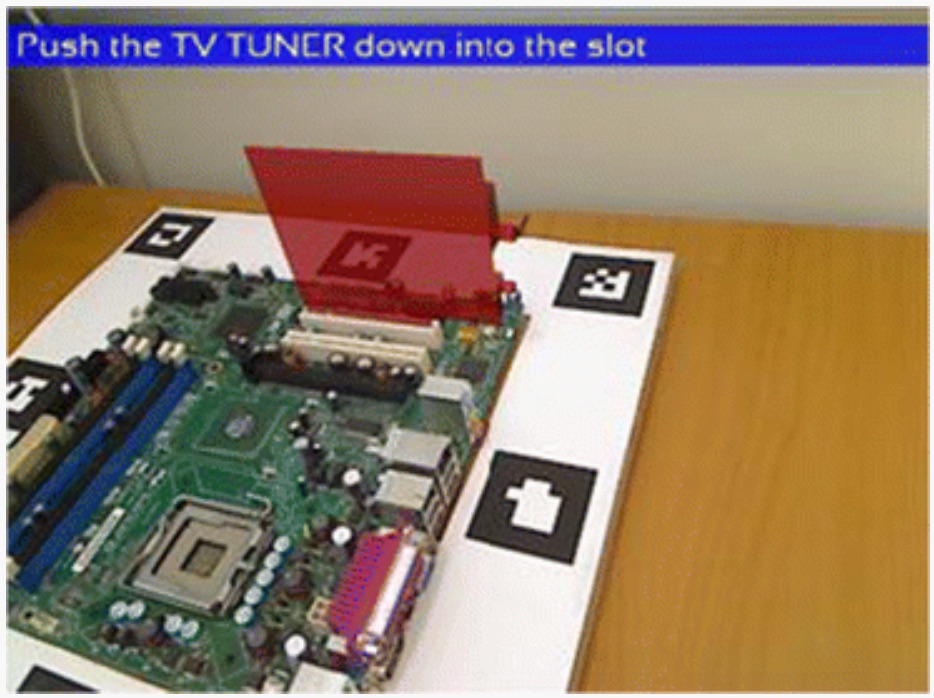

AR-based spatiotemporal interface and visualization for the physical task

The proposed study aims to assist in solving physical tasks such as mechanical assembly or collaborative design efficiently by using augmented reality-based space-time visualization techniques. In particular, when disassembling/reassembling is required, 3D recording of past actions and playback visualization are used to help memorize the exact assembly order and position of objects in the task. This study proposes a novel method that employs 3D-based spatial information recording and augmented reality-based playback to effectively support these types of physical tasks.

Publications

-

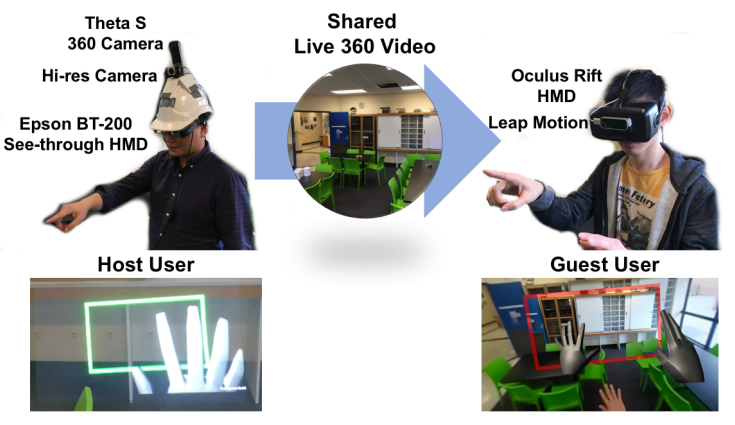

Mixed Reality Collaboration through Sharing a Live Panorama

Gun A. Lee, Theophilus Teo, Seungwon Kim, Mark Billinghurst

Gun A. Lee, Theophilus Teo, Seungwon Kim, and Mark Billinghurst. 2017. Mixed reality collaboration through sharing a live panorama. In SIGGRAPH Asia 2017 Mobile Graphics & Interactive Applications (SA '17). ACM, New York, NY, USA, Article 14, 4 pages. http://doi.acm.org/10.1145/3132787.3139203

@inproceedings{Lee:2017:MRC:3132787.3139203,

author = {Lee, Gun A. and Teo, Theophilus and Kim, Seungwon and Billinghurst, Mark},

title = {Mixed Reality Collaboration Through Sharing a Live Panorama},

booktitle = {SIGGRAPH Asia 2017 Mobile Graphics \& Interactive Applications},

series = {SA '17},

year = {2017},

isbn = {978-1-4503-5410-3},

location = {Bangkok, Thailand},

pages = {14:1--14:4},

articleno = {14},

numpages = {4},

url = {http://doi.acm.org/10.1145/3132787.3139203},

doi = {10.1145/3132787.3139203},

acmid = {3139203},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {panorama, remote collaboration, shared experience},

}

One of the popular features on modern social networking platforms is sharing live 360 panorama video. This research investigates how to further improve shared live panorama based collaborative experiences by applying Mixed Reality (MR) technology. SharedSphere is a wearable MR remote collaboration system. In addition to sharing a live captured immersive panorama, SharedSphere enriches the collaboration through overlaying MR visualisation of non-verbal communication cues (e.g., view awareness and gesture cues). User feedback collected through a preliminary user study indicated that sharing of live 360 panorama video was beneficial by providing a more immersive experience and supporting view independence. Users also felt that the view awareness cues were helpful for understanding the remote collaborator’s focus.

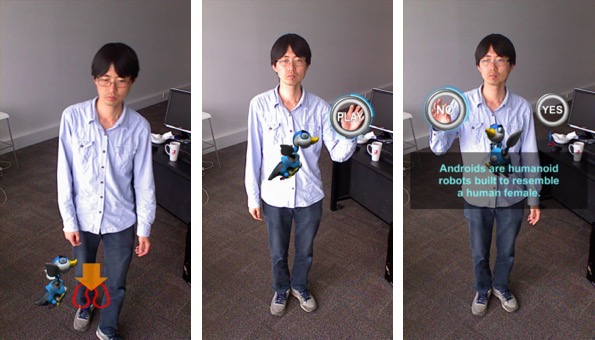

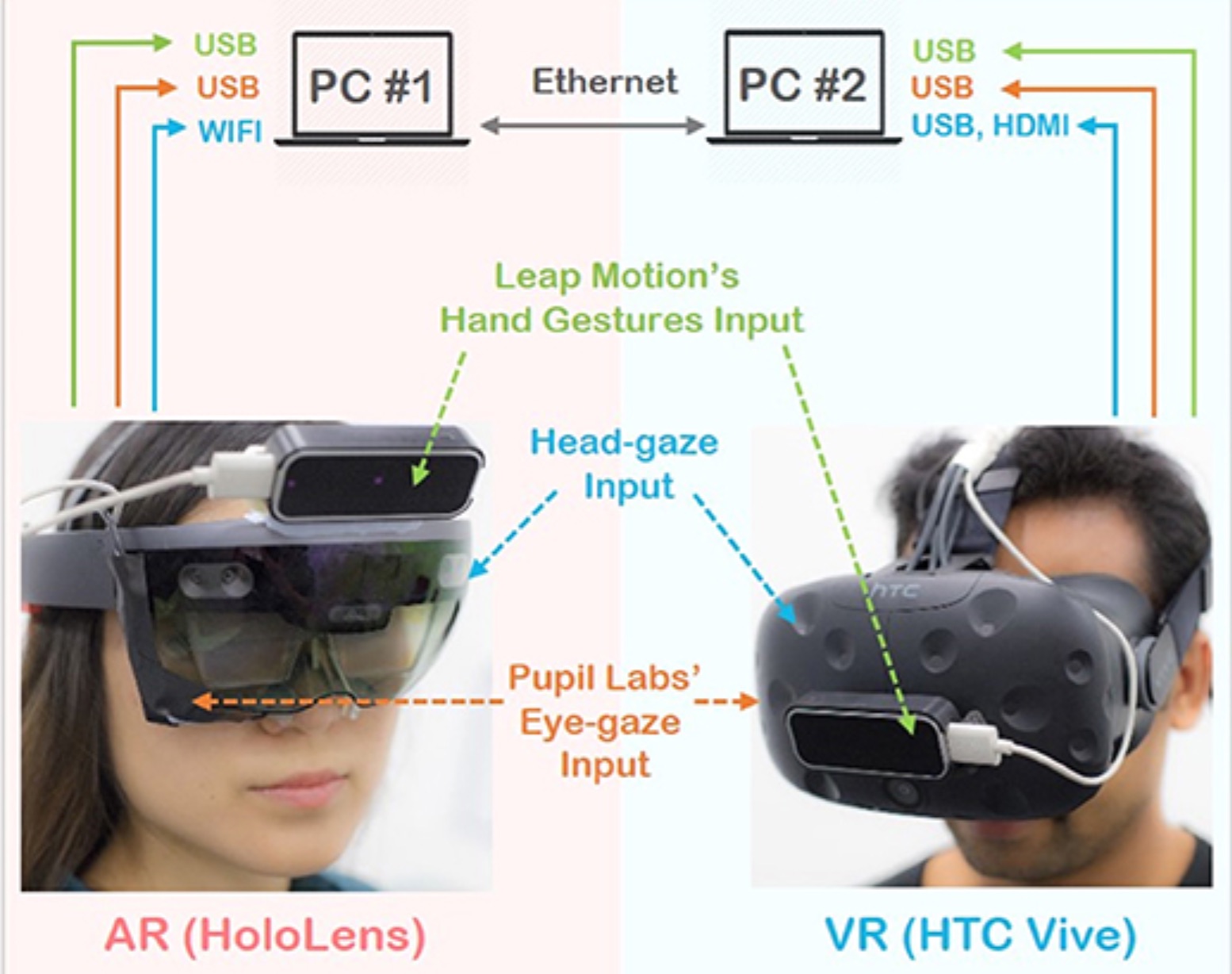

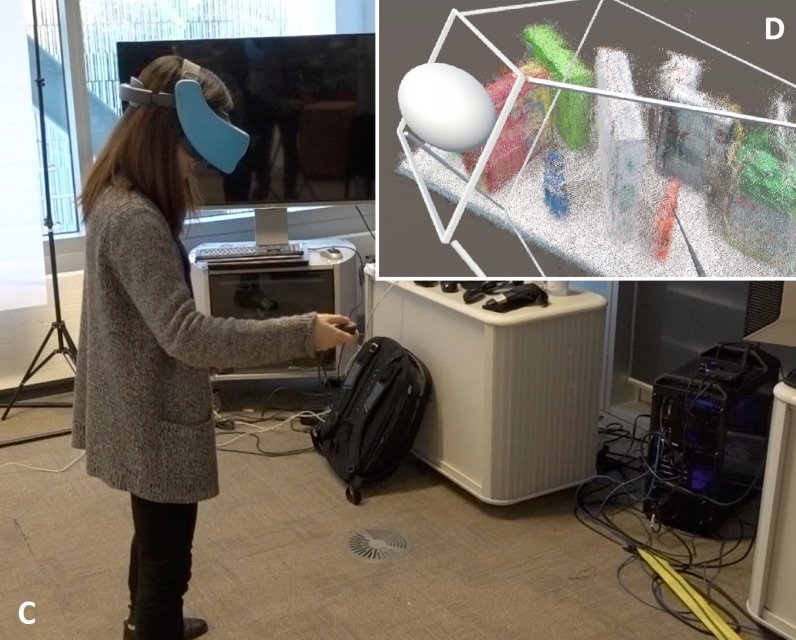

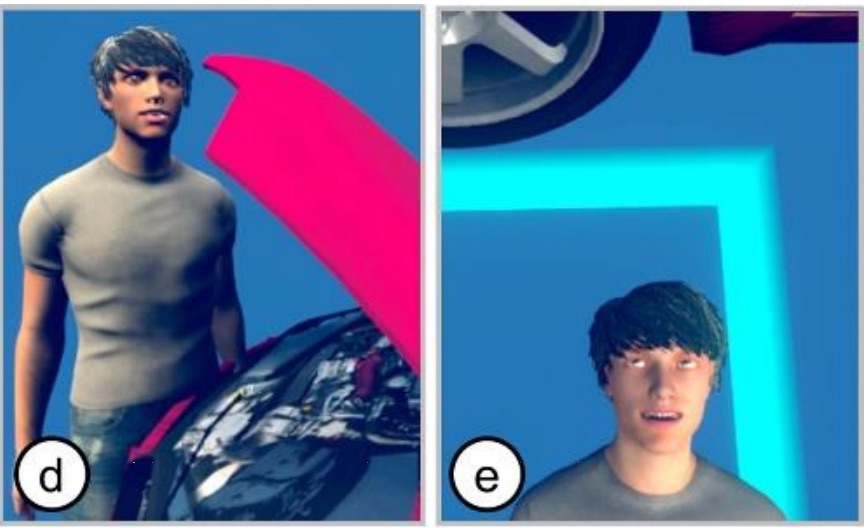

Mini-Me: An Adaptive Avatar for Mixed Reality Remote Collaboration

Thammathip Piumsomboon, Gun A Lee, Jonathon D Hart, Barrett Ens, Robert W Lindeman, Bruce H Thomas, Mark Billinghurst

Thammathip Piumsomboon, Gun A. Lee, Jonathon D. Hart, Barrett Ens, Robert W. Lindeman, Bruce H. Thomas, and Mark Billinghurst. 2018. Mini-Me: An Adaptive Avatar for Mixed Reality Remote Collaboration. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems (CHI '18). ACM, New York, NY, USA, Paper 46, 13 pages. DOI: https://doi.org/10.1145/3173574.3173620

@inproceedings{Piumsomboon:2018:MAA:3173574.3173620,

author = {Piumsomboon, Thammathip and Lee, Gun A. and Hart, Jonathon D. and Ens, Barrett and Lindeman, Robert W. and Thomas, Bruce H. and Billinghurst, Mark},

title = {Mini-Me: An Adaptive Avatar for Mixed Reality Remote Collaboration},

booktitle = {Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI '18},

year = {2018},

isbn = {978-1-4503-5620-6},

location = {Montreal QC, Canada},

pages = {46:1--46:13},

articleno = {46},

numpages = {13},

url = {http://doi.acm.org/10.1145/3173574.3173620},

doi = {10.1145/3173574.3173620},

acmid = {3173620},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {augmented reality, avatar, awareness, gaze, gesture, mixed reality, redirected, remote collaboration, remote embodiment, virtual reality},

}

We present Mini-Me, an adaptive avatar for enhancing Mixed Reality (MR) remote collaboration between a local Augmented Reality (AR) user and a remote Virtual Reality (VR) user. The Mini-Me avatar represents the VR user's gaze direction and body gestures while it transforms in size and orientation to stay within the AR user's field of view. A user study was conducted to evaluate Mini-Me in two collaborative scenarios: an asymmetric remote expert in VR assisting a local worker in AR, and a symmetric collaboration in urban planning. We found that the presence of the Mini-Me significantly improved Social Presence and the overall experience of MR collaboration.

Pinpointing: Precise Head- and Eye-Based Target Selection for Augmented Reality

Mikko Kytö, Barrett Ens, Thammathip Piumsomboon, Gun A Lee, Mark Billinghurst

Mikko Kytö, Barrett Ens, Thammathip Piumsomboon, Gun A. Lee, and Mark Billinghurst. 2018. Pinpointing: Precise Head- and Eye-Based Target Selection for Augmented Reality. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems (CHI '18). ACM, New York, NY, USA, Paper 81, 14 pages. DOI: https://doi.org/10.1145/3173574.3173655

@inproceedings{Kyto:2018:PPH:3173574.3173655,

author = {Kyt\"{o}, Mikko and Ens, Barrett and Piumsomboon, Thammathip and Lee, Gun A. and Billinghurst, Mark},

title = {Pinpointing: Precise Head- and Eye-Based Target Selection for Augmented Reality},

booktitle = {Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI '18},

year = {2018},

isbn = {978-1-4503-5620-6},

location = {Montreal QC, Canada},

pages = {81:1--81:14},

articleno = {81},

numpages = {14},

url = {http://doi.acm.org/10.1145/3173574.3173655},

doi = {10.1145/3173574.3173655},

acmid = {3173655},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {augmented reality, eye tracking, gaze interaction, head-worn display, refinement techniques, target selection},

}

View: https://dl.acm.org/ft_gateway.cfm?id=3173655&ftid=1958752&dwn=1&CFID=51906271&CFTOKEN=b63dc7f7afbcc656-4D4F3907-C934-F85B-2D539C0F52E3652A

Video: https://youtu.be/nCX8zIEmv0s

Head and eye movement can be leveraged to improve the user's interaction repertoire for wearable displays. Head movements are deliberate and accurate, and provide the current state-of-the-art pointing technique. Eye gaze can potentially be faster and more ergonomic, but suffers from low accuracy due to calibration errors and drift of wearable eye-tracking sensors. This work investigates precise, multimodal selection techniques using head motion and eye gaze. A comparison of speed and pointing accuracy reveals the relative merits of each method, including the achievable target size for robust selection. We demonstrate and discuss example applications for augmented reality, including compact menus with deep structure, and a proof-of-concept method for on-line correction of calibration drift.

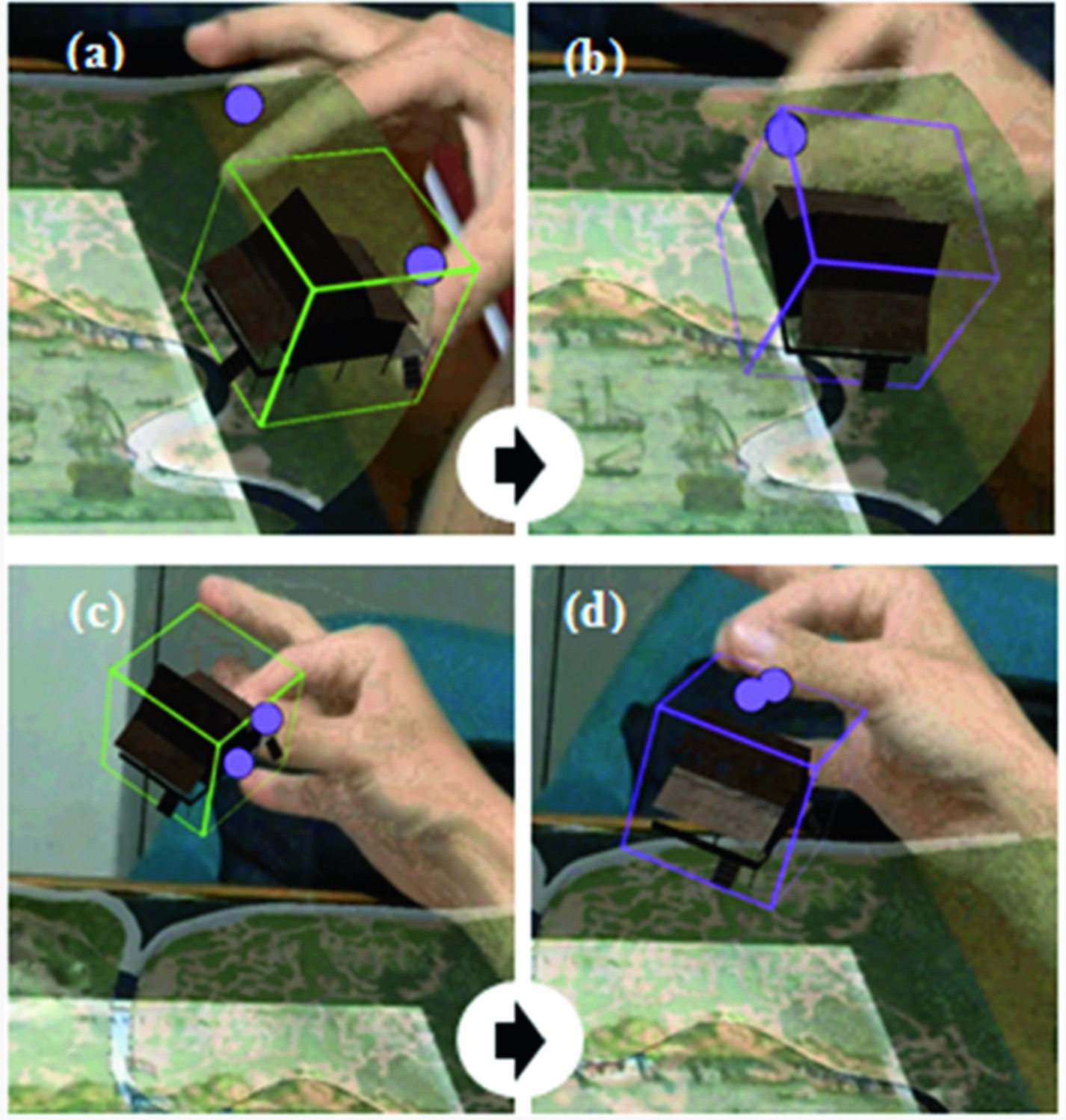

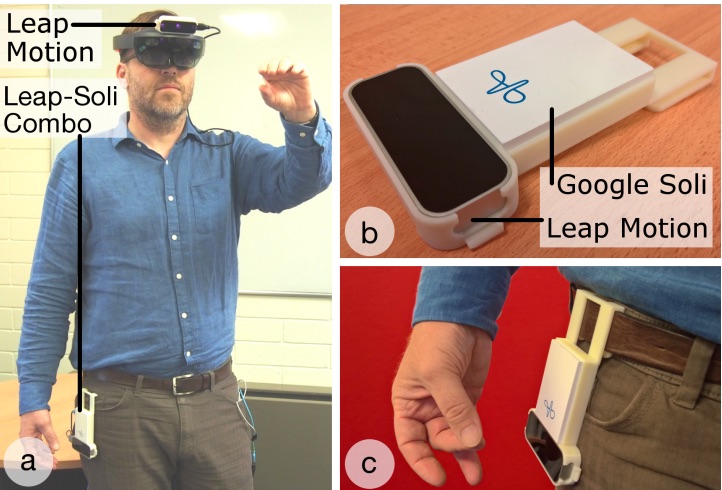

Counterpoint: Exploring Mixed-Scale Gesture Interaction for AR Applications

Barrett Ens, Aaron Quigley, Hui-Shyong Yeo, Pourang Irani, Thammathip Piumsomboon, Mark Billinghurst

Barrett Ens, Aaron Quigley, Hui-Shyong Yeo, Pourang Irani, Thammathip Piumsomboon, and Mark Billinghurst. 2018. Counterpoint: Exploring Mixed-Scale Gesture Interaction for AR Applications. In Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems (CHI EA '18). ACM, New York, NY, USA, Paper LBW120, 6 pages. DOI: https://doi.org/10.1145/3170427.3188513

@inproceedings{Ens:2018:CEM:3170427.3188513,

author = {Ens, Barrett and Quigley, Aaron and Yeo, Hui-Shyong and Irani, Pourang and Piumsomboon, Thammathip and Billinghurst, Mark},

title = {Counterpoint: Exploring Mixed-Scale Gesture Interaction for AR Applications},

booktitle = {Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI EA '18},

year = {2018},

isbn = {978-1-4503-5621-3},

location = {Montreal QC, Canada},

pages = {LBW120:1--LBW120:6},

articleno = {LBW120},

numpages = {6},

url = {http://doi.acm.org/10.1145/3170427.3188513},

doi = {10.1145/3170427.3188513},

acmid = {3188513},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {augmented reality, gesture interaction, wearable computing},

}

View: http://delivery.acm.org/10.1145/3190000/3188513/LBW120.pdf?ip=130.220.8.189&id=3188513&acc=ACTIVE%20SERVICE&key=65D80644F295BC0D%2E66BF2BADDFDC7DE0%2EF0418AF7A4636953%2E4D4702B0C3E38B35&__acm__=1531307952_cec04550a31b41dfba9a6865be86b8ac

Video: https://youtu.be/GZzC0Vhte4M

This paper presents ongoing work on a design exploration for mixed-scale gestures, which interleave microgestures with larger gestures for computer interaction. We describe three prototype applications that show various facets of this multi-dimensional design space. These applications portray various tasks on a Hololens Augmented Reality display, using different combinations of wearable sensors. Future work toward expanding the design space and exploration is discussed, along with plans toward evaluation of mixed-scale gesture design.

Levity: A Virtual Reality System that Responds to Cognitive Load

Lynda Gerry, Barrett Ens, Adam Drogemuller, Bruce Thomas, Mark Billinghurst

Lynda Gerry, Barrett Ens, Adam Drogemuller, Bruce Thomas, and Mark Billinghurst. 2018. Levity: A Virtual Reality System that Responds to Cognitive Load. In Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems (CHI EA '18). ACM, New York, NY, USA, Paper LBW610, 6 pages. DOI: https://doi.org/10.1145/3170427.3188479

@inproceedings{Gerry:2018:LVR:3170427.3188479,

author = {Gerry, Lynda and Ens, Barrett and Drogemuller, Adam and Thomas, Bruce and Billinghurst, Mark},

title = {Levity: A Virtual Reality System That Responds to Cognitive Load},

booktitle = {Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI EA '18},

year = {2018},

isbn = {978-1-4503-5621-3},

location = {Montreal QC, Canada},

pages = {LBW610:1--LBW610:6},

articleno = {LBW610},

numpages = {6},

url = {http://doi.acm.org/10.1145/3170427.3188479},

doi = {10.1145/3170427.3188479},

acmid = {3188479},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {brain computer interface, cognitive load, virtual reality, visual search task},

}

View: http://delivery.acm.org/10.1145/3190000/3188479/LBW610.pdf?ip=130.220.8.189&id=3188479&acc=ACTIVE%20SERVICE&key=65D80644F295BC0D%2E66BF2BADDFDC7DE0%2E4D4702B0C3E38B35%2E4D4702B0C3E38B35&__acm__=1531308138_ee3f5f719b239f1ee561612023b6fe1a

Video: https://youtu.be/r2csCoMvLeM

This paper presents the ongoing development of a proof-of-concept, adaptive system that uses a neurocognitive signal to facilitate efficient performance in a Virtual Reality visual search task. The Levity system measures and interactively adjusts the display of a visual array during a visual search task based on the user's level of cognitive load, measured with a 16-channel EEG device. Future developments will validate the system and evaluate its ability to improve search efficiency by detecting and adapting to a user's cognitive demands.

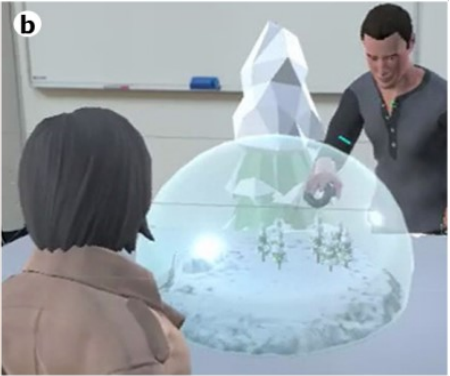

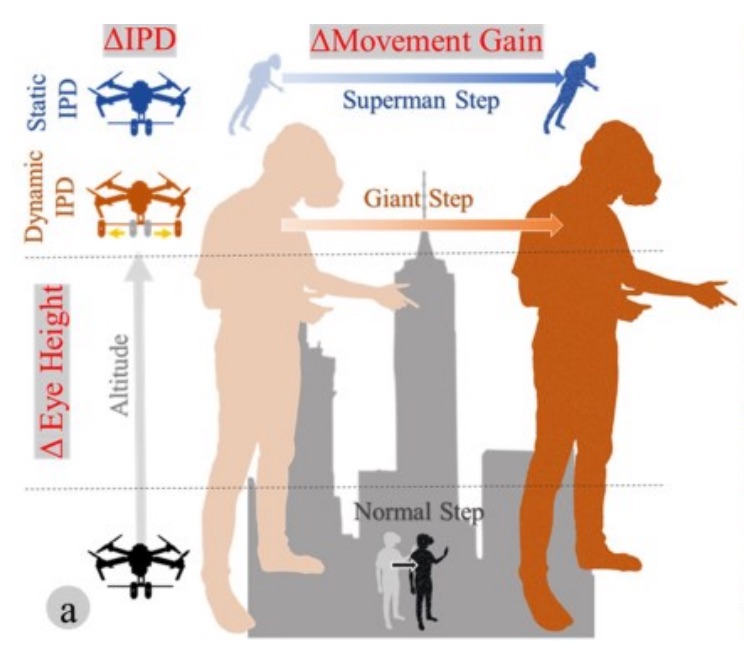

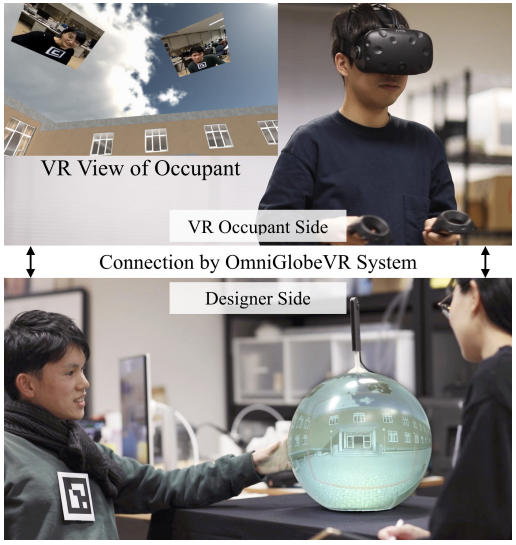

Snow Dome: A Multi-Scale Interaction in Mixed Reality Remote Collaboration

Thammathip Piumsomboon, Gun A Lee, Mark Billinghurst

Thammathip Piumsomboon, Gun A. Lee, and Mark Billinghurst. 2018. Snow Dome: A Multi-Scale Interaction in Mixed Reality Remote Collaboration. In Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems (CHI EA '18). ACM, New York, NY, USA, Paper D115, 4 pages. DOI: https://doi.org/10.1145/3170427.3186495

@inproceedings{Piumsomboon:2018:SDM:3170427.3186495,

author = {Piumsomboon, Thammathip and Lee, Gun A. and Billinghurst, Mark},

title = {Snow Dome: A Multi-Scale Interaction in Mixed Reality Remote Collaboration},

booktitle = {Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI EA '18},

year = {2018},

isbn = {978-1-4503-5621-3},

location = {Montreal QC, Canada},

pages = {D115:1--D115:4},

articleno = {D115},

numpages = {4},

url = {http://doi.acm.org/10.1145/3170427.3186495},

doi = {10.1145/3170427.3186495},

acmid = {3186495},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {augmented reality, avatar, mixed reality, multiple, remote collaboration, remote embodiment, scale, virtual reality},

}

View: http://delivery.acm.org/10.1145/3190000/3186495/D115.pdf?ip=130.220.8.189&id=3186495&acc=ACTIVE%20SERVICE&key=65D80644F295BC0D%2E66BF2BADDFDC7DE0%2EF0418AF7A4636953%2E4D4702B0C3E38B35&__acm__=1531308619_3eface84d74bae70fd47b11af3589b10

Video: https://youtu.be/nm8A9wzobIE

We present Snow Dome, a Mixed Reality (MR) remote collaboration application that supports a multi-scale interaction for a Virtual Reality (VR) user. We share a local Augmented Reality (AR) user's reconstructed space with a remote VR user who has an ability to scale themselves up into a giant or down into a miniature for different perspectives and interaction at that scale within the shared space.

Filtering Shared Social Data in AR

Alaeddin Nassani, Huidong Bai, Gun Lee, Mark Billinghurst, Tobias Langlotz, Robert W Lindeman

Alaeddin Nassani, Huidong Bai, Gun Lee, Mark Billinghurst, Tobias Langlotz, and Robert W. Lindeman. 2018. Filtering Shared Social Data in AR. In Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems (CHI EA '18). ACM, New York, NY, USA, Paper LBW100, 6 pages. DOI: https://doi.org/10.1145/3170427.3188609

@inproceedings{Nassani:2018:FSS:3170427.3188609,

author = {Nassani, Alaeddin and Bai, Huidong and Lee, Gun and Billinghurst, Mark and Langlotz, Tobias and Lindeman, Robert W.},

title = {Filtering Shared Social Data in AR},

booktitle = {Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI EA '18},

year = {2018},

isbn = {978-1-4503-5621-3},

location = {Montreal QC, Canada},

pages = {LBW100:1--LBW100:6},

articleno = {LBW100},

numpages = {6},

url = {http://doi.acm.org/10.1145/3170427.3188609},

doi = {10.1145/3170427.3188609},

acmid = {3188609},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {360 panoramas, augmented reality, live video stream, sharing social experiences, virtual avatars},

}

View: http://delivery.acm.org/10.1145/3190000/3188609/LBW100.pdf?ip=130.220.8.189&id=3188609&acc=ACTIVE%20SERVICE&key=65D80644F295BC0D%2E66BF2BADDFDC7DE0%2EF0418AF7A4636953%2E4D4702B0C3E38B35&__acm__=1531309037_59c3c6e906e725ca712e49b5a67d51af

Video: https://youtu.be/W-CDpBqe1yI

We describe a method and a prototype implementation for filtering shared social data (e.g., 360 video) in a wearable Augmented Reality (e.g., HoloLens) application. The data filtering is based on user-viewer relationships. For example, when sharing a 360 video, if the user has an intimate relationship with the viewer, then full fidelity (i.e., the 360 video) of the user's environment is visible. But if the two are strangers then only a snapshot image is shared. By varying the fidelity of the shared content, the viewer is able to focus more on the data shared by their close relations and differentiate this from other content. Also, the approach enables the sharing-user to have more control over the fidelity of the content shared with their contacts for privacy.
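The relationship-based filtering rule described in this abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: the names `Relationship`, `Fidelity`, `FIDELITY_BY_RELATIONSHIP` and `fidelity_for` are hypothetical, and only the two pairings stated in the abstract (intimate viewers see the 360 video, strangers see a snapshot image) are modelled.

```python
from enum import Enum


class Relationship(Enum):
    """How the sharing user relates to a viewer (hypothetical categories)."""
    INTIMATE = "intimate"
    STRANGER = "stranger"


class Fidelity(Enum):
    """Form in which the shared environment is shown to the viewer."""
    FULL_360_VIDEO = "360 video"
    SNAPSHOT_IMAGE = "snapshot image"


# Hypothetical lookup table; only these two pairings come from the abstract.
FIDELITY_BY_RELATIONSHIP = {
    Relationship.INTIMATE: Fidelity.FULL_360_VIDEO,
    Relationship.STRANGER: Fidelity.SNAPSHOT_IMAGE,
}


def fidelity_for(relationship: Relationship) -> Fidelity:
    """Return the fidelity of shared content that a viewer is allowed to see."""
    return FIDELITY_BY_RELATIONSHIP[relationship]
```

Keeping the rule in a lookup table means further relationship levels, if a system defines them, can be added without changing the function.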

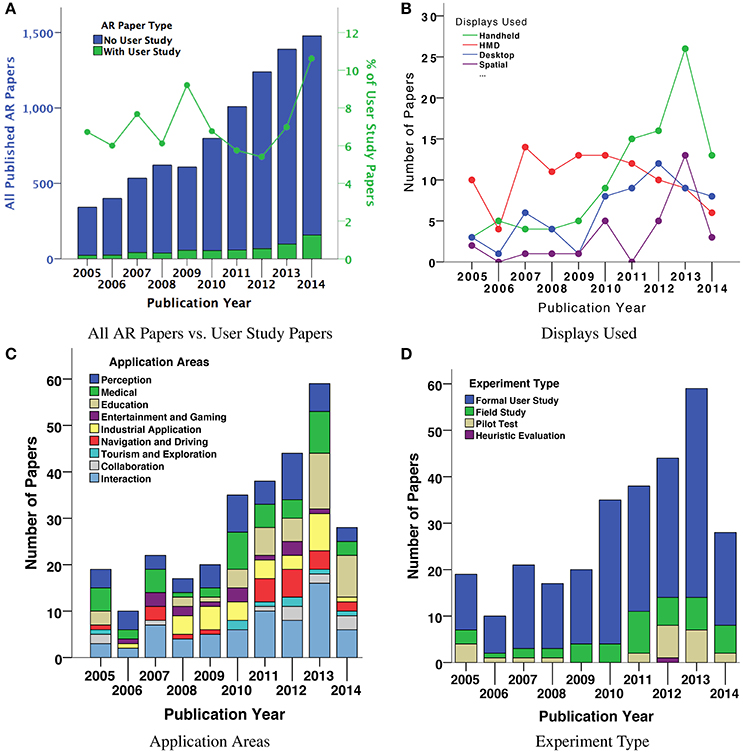

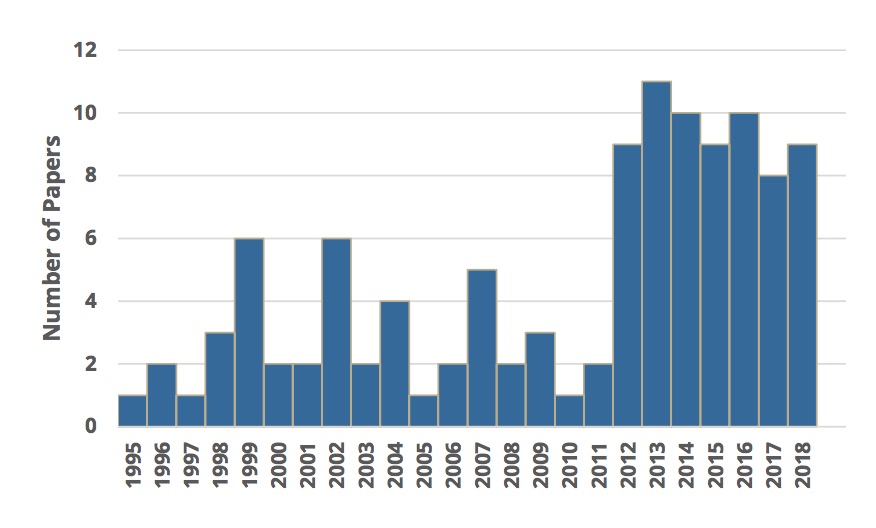

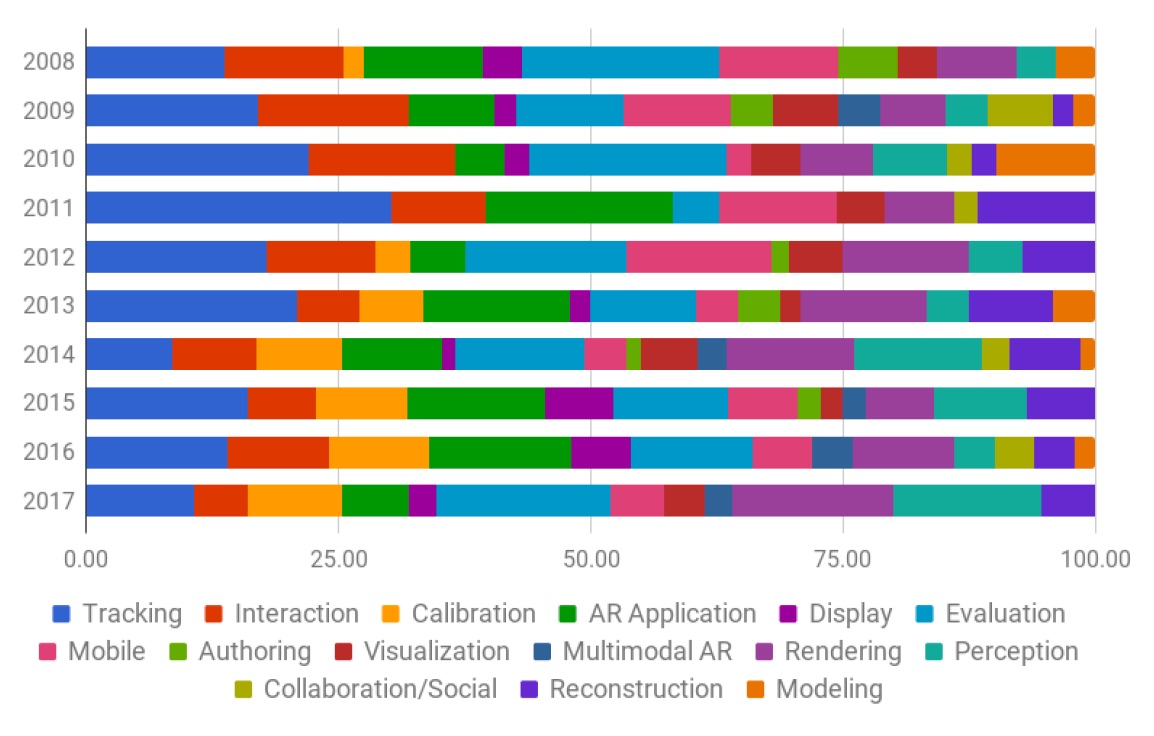

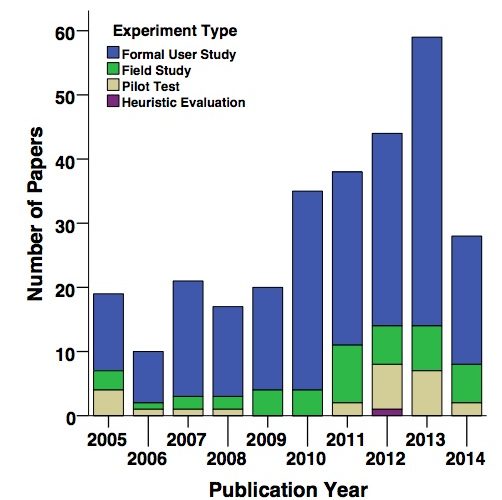

A Systematic Review of 10 Years of Augmented Reality Usability Studies: 2005 to 2014

Arindam Dey, Mark Billinghurst, Robert W Lindeman, J Swan

Dey A, Billinghurst M, Lindeman RW and Swan JE II (2018) A Systematic Review of 10 Years of Augmented Reality Usability Studies: 2005 to 2014. Front. Robot. AI 5:37. doi: 10.3389/frobt.2018.00037

@ARTICLE{10.3389/frobt.2018.00037,

AUTHOR={Dey, Arindam and Billinghurst, Mark and Lindeman, Robert W. and Swan, J. Edward},

TITLE={A Systematic Review of 10 Years of Augmented Reality Usability Studies: 2005 to 2014},

JOURNAL={Frontiers in Robotics and AI},

VOLUME={5},

PAGES={37},

YEAR={2018},

URL={https://www.frontiersin.org/article/10.3389/frobt.2018.00037},

DOI={10.3389/frobt.2018.00037},

ISSN={2296-9144},

}

Augmented Reality (AR) interfaces have been studied extensively over the last few decades, with a growing number of user-based experiments. In this paper, we systematically review 10 years of the most influential AR user studies, from 2005 to 2014. A total of 291 papers with 369 individual user studies have been reviewed and classified based on their application areas. The primary contribution of the review is to present the broad landscape of user-based AR research, and to provide a high-level view of how that landscape has changed. We summarize the high-level contributions from each category of papers, and present examples of the most influential user studies. We also identify areas where there have been few user studies, and opportunities for future research. Among other things, we find that there is a growing trend toward handheld AR user studies, and that most studies are conducted in laboratory settings and do not involve pilot testing. This research will be useful for AR researchers who want to follow best practices in designing their own AR user studies.

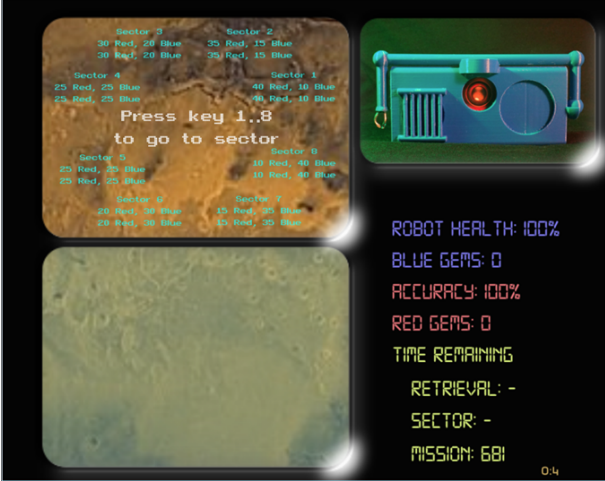

He who hesitates is lost (... in thoughts over a robot)

James Wen, Amanda Stewart, Mark Billinghurst, Arindam Dey, Chad Tossell, Victor Finomore

James Wen, Amanda Stewart, Mark Billinghurst, Arindam Dey, Chad Tossell, and Victor Finomore. 2018. He who hesitates is lost (...in thoughts over a robot). In Proceedings of the Technology, Mind, and Society (TechMindSociety '18). ACM, New York, NY, USA, Article 43, 6 pages. DOI: https://doi.org/10.1145/3183654.3183703

@inproceedings{Wen:2018:HHL:3183654.3183703,

author = {Wen, James and Stewart, Amanda and Billinghurst, Mark and Dey, Arindam and Tossell, Chad and Finomore, Victor},

title = {He Who Hesitates is Lost (...In Thoughts over a Robot)},

booktitle = {Proceedings of the Technology, Mind, and Society},

series = {TechMindSociety '18},

year = {2018},

isbn = {978-1-4503-5420-2},

location = {Washington, DC, USA},

pages = {43:1--43:6},

articleno = {43},

numpages = {6},

url = {http://doi.acm.org/10.1145/3183654.3183703},

doi = {10.1145/3183654.3183703},

acmid = {3183703},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {Anthropomorphism, Empathy, Human Machine Team, Robotics, User Study},

}

In a team, the strong bonds that can form between teammates are often seen as critical for reaching peak performance. This perspective may need to be reconsidered, however, if some team members are autonomous robots since establishing bonds with fundamentally inanimate and expendable objects may prove counterproductive. Previous work has measured empathic responses towards robots as singular events at the conclusion of experimental sessions. As relationships extend over long periods of time, sustained empathic behavior towards robots would be of interest. In order to measure user actions that may vary over time and are affected by empathy towards a robot teammate, we created the TEAMMATE simulation system. Our findings suggest that inducing empathy through a back story narrative can significantly change participant decisions in actions that may have consequences for a robot companion over time. The results of our study can have strong implications for the overall performance of human machine teams.

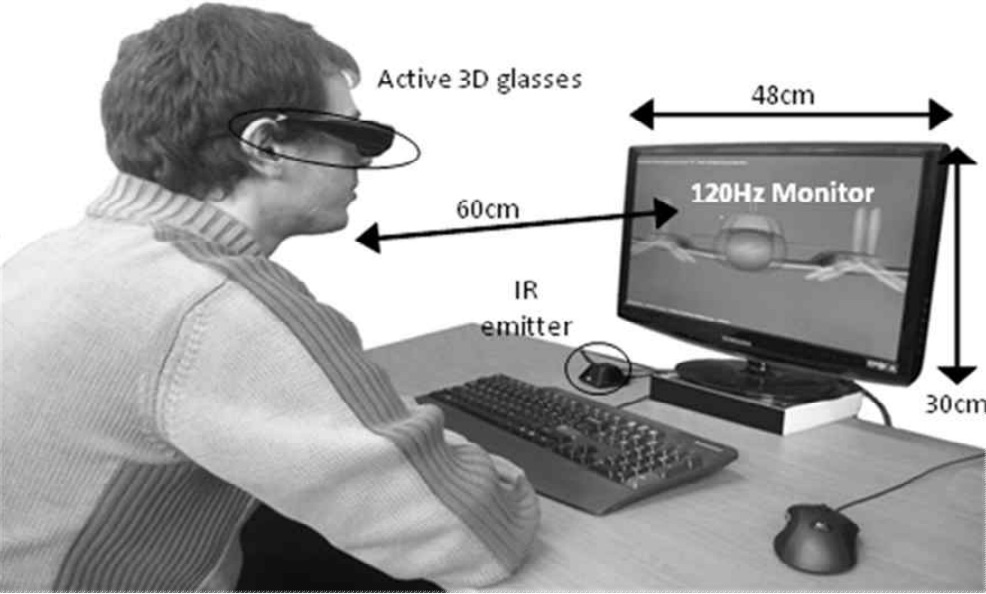

A hybrid 2D/3D user Interface for radiological diagnosis

Veera Bhadra Harish Mandalika, Alexander I Chernoglazov, Mark Billinghurst, Christoph Bartneck, Michael A Hurrell, Niels de Ruiter, Anthony PH Butler, Philip H Butler

Veera Bhadra Harish Mandalika, Alexander I Chernoglazov, Mark Billinghurst, Christoph Bartneck, Michael A Hurrell, Niels de Ruiter, Anthony PH Butler, Philip H Butler. A hybrid 2D/3D user Interface for radiological diagnosis. Journal of Digital Imaging 31 (1), 56-73.

@Article{Mandalika2018,

author="Mandalika, Veera Bhadra Harish

and Chernoglazov, Alexander I.

and Billinghurst, Mark

and Bartneck, Christoph

and Hurrell, Michael A.

and Ruiter, Niels de

and Butler, Anthony P. H.

and Butler, Philip H.",

title="A Hybrid 2D/3D User Interface for Radiological Diagnosis",

journal="Journal of Digital Imaging",

year="2018",

month="Feb",

day="01",

volume="31",

number="1",

pages="56--73",

abstract="This paper presents a novel 2D/3D desktop virtual reality hybrid user interface for radiology that focuses on improving 3D manipulation required in some diagnostic tasks. An evaluation of our system revealed that our hybrid interface is more efficient for novice users and more accurate for both novice and experienced users when compared to traditional 2D only interfaces. This is a significant finding because it indicates, as the techniques mature, that hybrid interfaces can provide significant benefit to image evaluation. Our hybrid system combines a zSpace stereoscopic display with 2D displays, and mouse and keyboard input. It allows the use of 2D and 3D components interchangeably, or simultaneously. The system was evaluated against a 2D only interface with a user study that involved performing a scoliosis diagnosis task. There were two user groups: medical students and radiology residents. We found improvements in completion time for medical students, and in accuracy for both groups. In particular, the accuracy of medical students improved to match that of the residents.",

issn="1618-727X",

doi="10.1007/s10278-017-0002-6",

url="https://doi.org/10.1007/s10278-017-0002-6"

}

This paper presents a novel 2D/3D desktop virtual reality hybrid user interface for radiology that focuses on improving 3D manipulation required in some diagnostic tasks. An evaluation of our system revealed that our hybrid interface is more efficient for novice users and more accurate for both novice and experienced users when compared to traditional 2D only interfaces. This is a significant finding because it indicates, as the techniques mature, that hybrid interfaces can provide significant benefit to image evaluation. Our hybrid system combines a zSpace stereoscopic display with 2D displays, and mouse and keyboard input. It allows the use of 2D and 3D components interchangeably, or simultaneously. The system was evaluated against a 2D only interface with a user study that involved performing a scoliosis diagnosis task. There were two user groups: medical students and radiology residents. We found improvements in completion time for medical students, and in accuracy for both groups. In particular, the accuracy of medical students improved to match that of the residents.

The Effect of Collaboration Styles and View Independence on Video-Mediated Remote Collaboration

Seungwon Kim, Mark Billinghurst, Gun Lee

Kim, S., Billinghurst, M., & Lee, G. (2018). The Effect of Collaboration Styles and View Independence on Video-Mediated Remote Collaboration. Computer Supported Cooperative Work (CSCW), 1-39.

@Article{Kim2018,

author="Kim, Seungwon

and Billinghurst, Mark

and Lee, Gun",

title="The Effect of Collaboration Styles and View Independence on Video-Mediated Remote Collaboration",

journal="Computer Supported Cooperative Work (CSCW)",

year="2018",

month="Jun",

day="02",

abstract="This paper investigates how different collaboration styles and view independence affect remote collaboration. Our remote collaboration system shares a live video of a local user's real-world task space with a remote user. The remote user can have an independent view or a dependent view of a shared real-world object manipulation task and can draw virtual annotations onto the real-world objects as a visual communication cue. With the system, we investigated two different collaboration styles; (1) remote expert collaboration where a remote user has the solution and gives instructions to a local partner and (2) mutual collaboration where neither user has a solution but both remote and local users share ideas and discuss ways to solve the real-world task. In the user study, the remote expert collaboration showed a number of benefits over the mutual collaboration. With the remote expert collaboration, participants had better communication from the remote user to the local user, more aligned focus between participants, and the remote participants' feeling of enjoyment and togetherness. However, the benefits were not always apparent at the local participants' end, especially with measures of enjoyment and togetherness. The independent view also had several benefits over the dependent view, such as allowing remote participants to freely navigate around the workspace while having a wider fully zoomed-out view. The benefits of the independent view were more prominent in the mutual collaboration than in the remote expert collaboration, especially in enabling the remote participants to see the workspace.",

issn="1573-7551",

doi="10.1007/s10606-018-9324-2",

url="https://doi.org/10.1007/s10606-018-9324-2"

}

This paper investigates how different collaboration styles and view independence affect remote collaboration. Our remote collaboration system shares a live video of a local user’s real-world task space with a remote user. The remote user can have an independent view or a dependent view of a shared real-world object manipulation task and can draw virtual annotations onto the real-world objects as a visual communication cue. With the system, we investigated two different collaboration styles: (1) remote expert collaboration where a remote user has the solution and gives instructions to a local partner and (2) mutual collaboration where neither user has a solution but both remote and local users share ideas and discuss ways to solve the real-world task. In the user study, the remote expert collaboration showed a number of benefits over the mutual collaboration. With the remote expert collaboration, participants had better communication from the remote user to the local user, more aligned focus between participants, and the remote participants’ feeling of enjoyment and togetherness. However, the benefits were not always apparent at the local participants’ end, especially with measures of enjoyment and togetherness. The independent view also had several benefits over the dependent view, such as allowing remote participants to freely navigate around the workspace while having a wider fully zoomed-out view. The benefits of the independent view were more prominent in the mutual collaboration than in the remote expert collaboration, especially in enabling the remote participants to see the workspace.

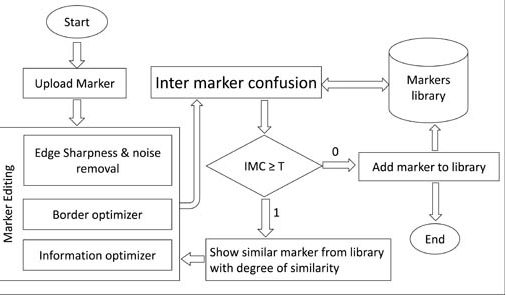

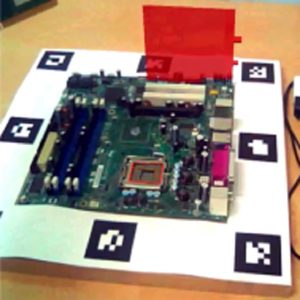

Robust tracking through the design of high quality fiducial markers: An optimization tool for ARToolKit

Dawar Khan, Sehat Ullah, Dong-Ming Yan, Ihsan Rabbi, Paul Richard, Thuong Hoang, Mark Billinghurst, Xiaopeng Zhang

D. Khan et al., "Robust Tracking Through the Design of High Quality Fiducial Markers: An Optimization Tool for ARToolKit," in IEEE Access, vol. 6, pp. 22421-22433, 2018. doi: 10.1109/ACCESS.2018.2801028

@ARTICLE{8287815,

author={D. Khan and S. Ullah and D. M. Yan and I. Rabbi and P. Richard and T. Hoang and M. Billinghurst and X. Zhang},

journal={IEEE Access},

title={Robust Tracking Through the Design of High Quality Fiducial Markers: An Optimization Tool for ARToolKit},

year={2018},

volume={6},

number={},

pages={22421-22433},

keywords={augmented reality;image recognition;object tracking;optical tracking;pose estimation;ARToolKit markers;B:W;augmented reality applications;camera tracking;edge sharpness;fiducial marker optimizer;high quality fiducial markers;optimization tool;pose estimation;robust tracking;specialized image processing algorithms;Cameras;Complexity theory;Fiducial markers;Libraries;Robustness;Tools;ARToolKit;Fiducial markers;augmented reality;marker tracking;robust recognition},

doi={10.1109/ACCESS.2018.2801028},

ISSN={},

month={},}

Fiducial markers are images or landmarks placed in a real environment, typically used for pose estimation and camera tracking. Reliable fiducials are strongly desired for many augmented reality (AR) applications, but currently there is no systematic method to design highly reliable fiducials. In this paper, we present the fiducial marker optimizer (FMO), a tool to optimize the design attributes of ARToolKit markers, including black to white (B:W) ratio, edge sharpness, and information complexity, and to reduce inter-marker confusion. For these operations, the FMO provides a user-friendly interface at the front-end and specialized image processing algorithms at the back-end. We tested manually designed markers and FMO-optimized markers in ARToolKit and found that the latter were more robust. The FMO will be used for designing highly reliable fiducials in an easy-to-use fashion. It will improve the performance of the applications in which it is used.

User Interface Agents for Guiding Interaction with Augmented Virtual Mirrors

Gun Lee, Omprakash Rudhru, Hye Sun Park, Ho Won Kim, and Mark Billinghurst

Gun Lee, Omprakash Rudhru, Hye Sun Park, Ho Won Kim, and Mark Billinghurst. User Interface Agents for Guiding Interaction with Augmented Virtual Mirrors. In Proceedings of ICAT-EGVE 2017 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments, 109-116. http://dx.doi.org/10.2312/egve.20171347

@inproceedings {egve.20171347,

booktitle = {ICAT-EGVE 2017 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments},

editor = {Robert W. Lindeman and Gerd Bruder and Daisuke Iwai},

title = {{User Interface Agents for Guiding Interaction with Augmented Virtual Mirrors}},

author = {Lee, Gun A. and Rudhru, Omprakash and Park, Hye Sun and Kim, Ho Won and Billinghurst, Mark},

year = {2017},

publisher = {The Eurographics Association},

ISSN = {1727-530X},

ISBN = {978-3-03868-038-3},

DOI = {10.2312/egve.20171347}

}

This research investigates using user interface (UI) agents for guiding gesture based interaction with Augmented Virtual Mirrors. Compared to prior work in gesture interaction, where graphical symbols are used for guiding user interaction, we propose using UI agents. We explore two approaches for using UI agents: 1) using a UI agent as a delayed cursor and 2) using a UI agent as an interactive button. We conducted two user studies to evaluate the proposed designs. The results from the user studies show that UI agents are effective for guiding user interactions in a similar way as a traditional graphical user interface providing visual cues, while they are useful in emotionally engaging with users.

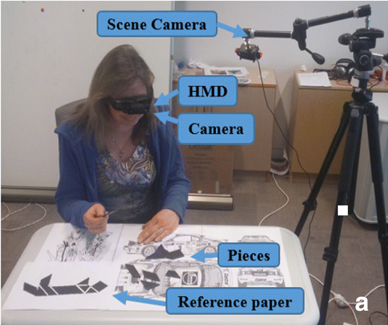

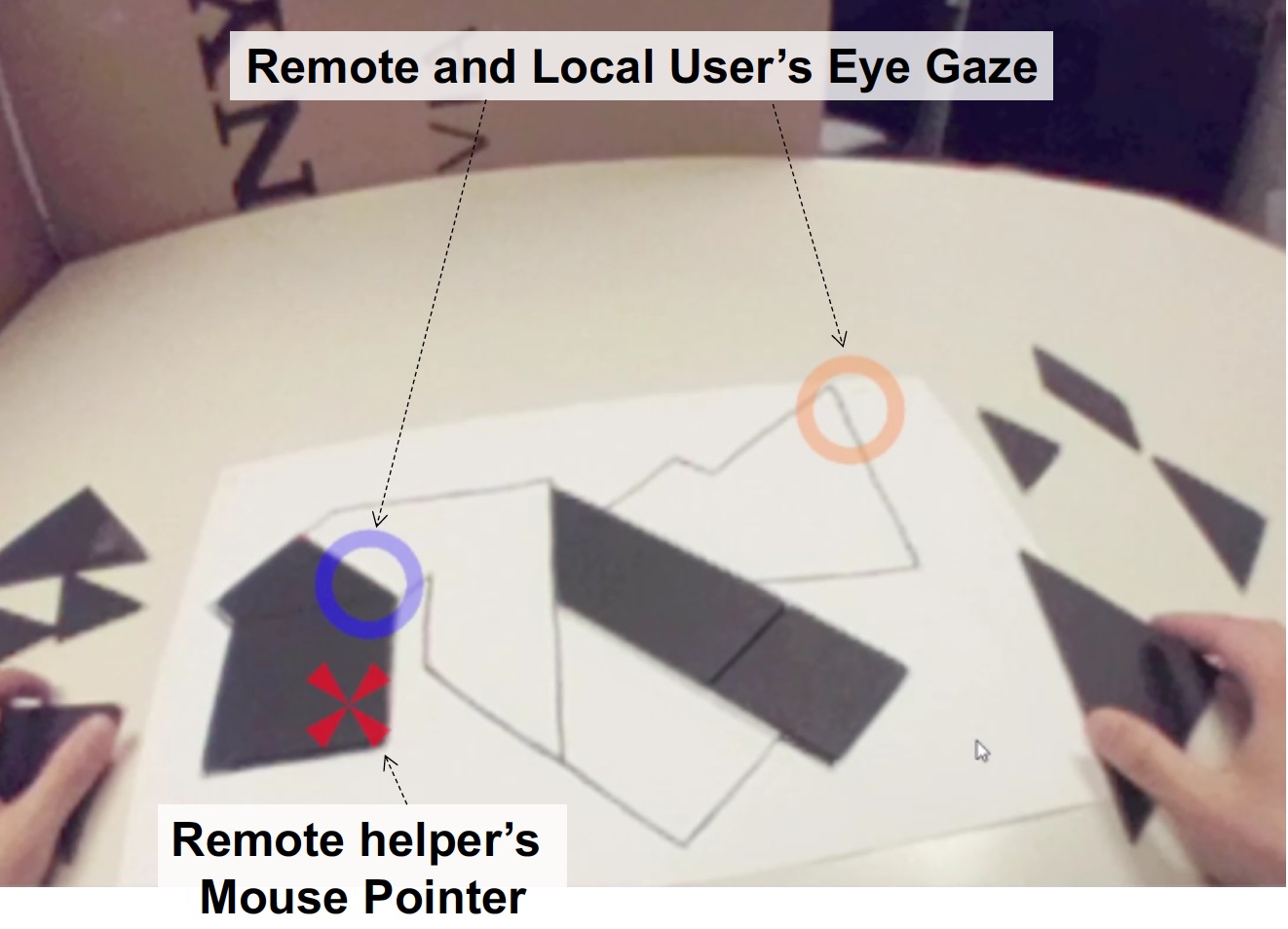

Improving Collaboration in Augmented Video Conference using Mutually Shared Gaze

Gun Lee, Seungwon Kim, Youngho Lee, Arindam Dey, Thammathip Piumsomboon, Mitchell Norman and Mark Billinghurst

Gun Lee, Seungwon Kim, Youngho Lee, Arindam Dey, Thammathip Piumsomboon, Mitchell Norman and Mark Billinghurst. 2017. Improving Collaboration in Augmented Video Conference using Mutually Shared Gaze. In Proceedings of ICAT-EGVE 2017 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments, pp. 197-204. http://dx.doi.org/10.2312/egve.20171359

@inproceedings {egve.20171359,

booktitle = {ICAT-EGVE 2017 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments},

editor = {Robert W. Lindeman and Gerd Bruder and Daisuke Iwai},

title = {{Improving Collaboration in Augmented Video Conference using Mutually Shared Gaze}},

author = {Lee, Gun A. and Kim, Seungwon and Lee, Youngho and Dey, Arindam and Piumsomboon, Thammathip and Norman, Mitchell and Billinghurst, Mark},

year = {2017},

publisher = {The Eurographics Association},

ISSN = {1727-530X},

ISBN = {978-3-03868-038-3},

DOI = {10.2312/egve.20171359}

}

To improve remote collaboration in video conferencing systems, researchers have been investigating augmenting visual cues onto a shared live video stream. In such systems, a person wearing a head-mounted display (HMD) and camera can share her view of the surrounding real-world with a remote collaborator to receive assistance on a real-world task. While this concept of augmented video conferencing (AVC) has been actively investigated, there has been little research on how sharing gaze cues might affect the collaboration in video conferencing. This paper investigates how sharing gaze in both directions between a local worker and remote helper in an AVC system affects the collaboration and communication. Using a prototype AVC system that shares the eye gaze of both users, we conducted a user study that compares four conditions with different combinations of eye gaze sharing between the two users. The results showed that sharing each other’s gaze significantly improved collaboration and communication.

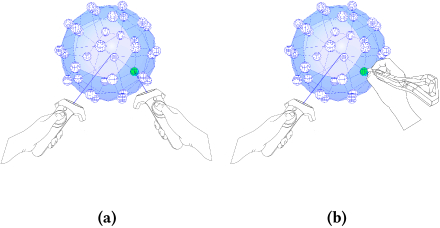

Exploring Natural Eye-Gaze-Based Interaction for Immersive Virtual Reality

Thammathip Piumsomboon, Gun Lee, Robert W. Lindeman and Mark Billinghurst

Thammathip Piumsomboon, Gun Lee, Robert W. Lindeman and Mark Billinghurst. 2017. Exploring Natural Eye-Gaze-Based Interaction for Immersive Virtual Reality. In 2017 IEEE Symposium on 3D User Interfaces (3DUI), pp. 36-39. https://doi.org/10.1109/3DUI.2017.7893315

@INPROCEEDINGS{7893315,

author={T. Piumsomboon and G. Lee and R. W. Lindeman and M. Billinghurst},

booktitle={2017 IEEE Symposium on 3D User Interfaces (3DUI)},

title={Exploring natural eye-gaze-based interaction for immersive virtual reality},

year={2017},

volume={},

number={},

pages={36-39},

keywords={gaze tracking;gesture recognition;helmet mounted displays;virtual reality;Duo-Reticles;Nod and Roll;Radial Pursuit;cluttered-object selection;eye tracking technology;eye-gaze selection;head-gesture-based interaction;head-mounted display;immersive virtual reality;inertial reticles;natural eye movements;natural eye-gaze-based interaction;smooth pursuit;vestibulo-ocular reflex;Electronic mail;Erbium;Gaze tracking;Painting;Portable computers;Resists;Two dimensional displays;H.5.2 [Information Interfaces and Presentation]: User Interfaces—Interaction styles},

doi={10.1109/3DUI.2017.7893315},

ISSN={},

month={March},}

Eye tracking technology in a head-mounted display has undergone rapid advancement in recent years, making it possible for researchers to explore new interaction techniques using natural eye movements. This paper explores three novel eye-gaze-based interaction techniques: (1) Duo-Reticles, eye-gaze selection based on eye-gaze and inertial reticles, (2) Radial Pursuit, cluttered-object selection that takes advantage of smooth pursuit, and (3) Nod and Roll, head-gesture-based interaction based on the vestibulo-ocular reflex. In an initial user study, we compare each technique against a baseline condition in a scenario that demonstrates its strengths and weaknesses.

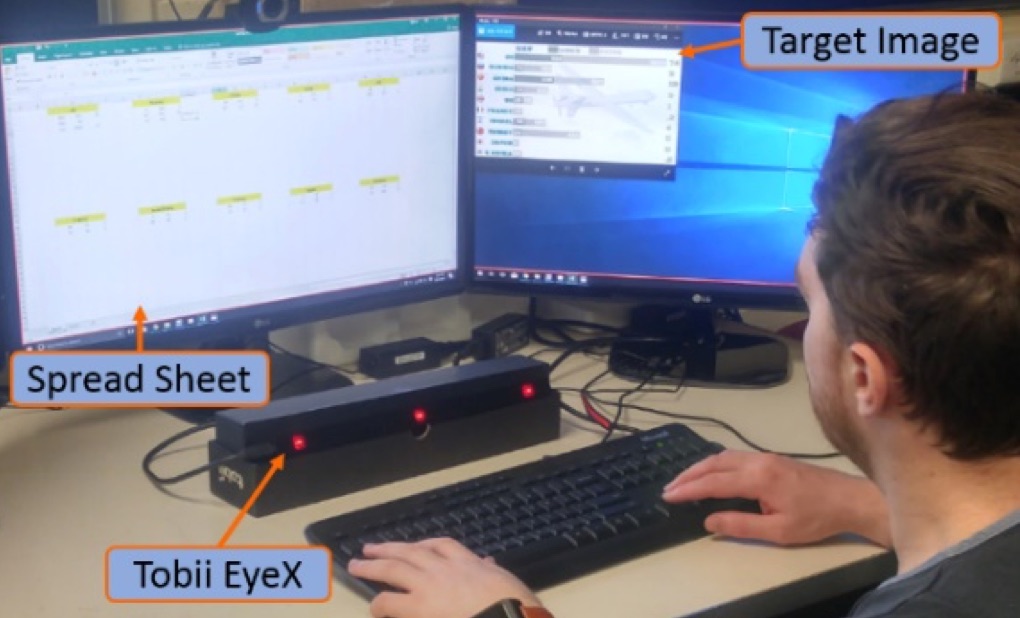

Do You See What I See? The Effect of Gaze Tracking on Task Space Remote Collaboration

Kunal Gupta, Gun A. Lee and Mark Billinghurst

Kunal Gupta, Gun A. Lee and Mark Billinghurst. 2016. Do You See What I See? The Effect of Gaze Tracking on Task Space Remote Collaboration. IEEE Transactions on Visualization and Computer Graphics Vol.22, No.11, pp.2413-2422. https://doi.org/10.1109/TVCG.2016.2593778

@ARTICLE{7523400,

author={K. Gupta and G. A. Lee and M. Billinghurst},

journal={IEEE Transactions on Visualization and Computer Graphics},

title={Do You See What I See? The Effect of Gaze Tracking on Task Space Remote Collaboration},

year={2016},

volume={22},

number={11},

pages={2413-2422},

keywords={cameras;gaze tracking;helmet mounted displays;eye-tracking camera;gaze tracking;head-mounted camera;head-mounted display;remote collaboration;task space remote collaboration;virtual gaze information;virtual pointer;wearable interface;Cameras;Collaboration;Computers;Gaze tracking;Head;Prototypes;Teleconferencing;Computer conferencing;Computer-supported collaborative work;teleconferencing;videoconferencing},

doi={10.1109/TVCG.2016.2593778},

ISSN={1077-2626},

month={Nov},}

We present results from research exploring the effect of sharing virtual gaze and pointing cues in a wearable interface for remote collaboration. A local worker wears a Head-mounted Camera, Eye-tracking camera and a Head-Mounted Display and shares video and virtual gaze information with a remote helper. The remote helper can provide feedback using a virtual pointer on the live video view. The prototype system was evaluated with a formal user study. Comparing four conditions, (1) NONE (no cue), (2) POINTER, (3) EYE-TRACKER and (4) BOTH (both pointer and eye-tracker cues), we observed that the task completion performance was best in the BOTH condition, with a significant difference from the POINTER and EYE-TRACKER conditions individually. The use of eye-tracking and a pointer also significantly improved the co-presence felt between the users. We discuss the implications of this research and the limitations of the developed system that could be improved in further work.

A Remote Collaboration System with Empathy Glasses

Y. Lee, K. Masai, K. Kunze, M. Sugimoto and M. Billinghurst. 2016. A Remote Collaboration System with Empathy Glasses. 2016 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct)(ISMARW), Merida, pp. 342-343. http://doi.ieeecomputersociety.org/10.1109/ISMAR-Adjunct.2016.0112

@INPROCEEDINGS{7836533,

author = {Y. Lee and K. Masai and K. Kunze and M. Sugimoto and M. Billinghurst},

booktitle = {2016 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct)(ISMARW)},

title = {A Remote Collaboration System with Empathy Glasses},

year = {2017},

volume = {00},

number = {},

pages = {342-343},

keywords={Collaboration;Glass;Heart rate;Biomedical monitoring;Cameras;Hardware;Computers},

doi = {10.1109/ISMAR-Adjunct.2016.0112},

url = {doi.ieeecomputersociety.org/10.1109/ISMAR-Adjunct.2016.0112},

ISSN = {},

month={Sept.}

}

View: http://doi.ieeecomputersociety.org/10.1109/ISMAR-Adjunct.2016.0112

Video: https://www.youtube.com/watch?v=CdgWVDbMwp4

In this paper, we describe a demonstration of a remote collaboration system using Empathy Glasses. Using our system, a local worker can share a view of their environment with a remote helper, as well as their gaze, facial expressions, and physiological signals. The remote user can send back visual cues via a see-through head mounted display to help them perform better on a real world task. The system also provides some indication of the remote user's facial expression using face tracking technology.

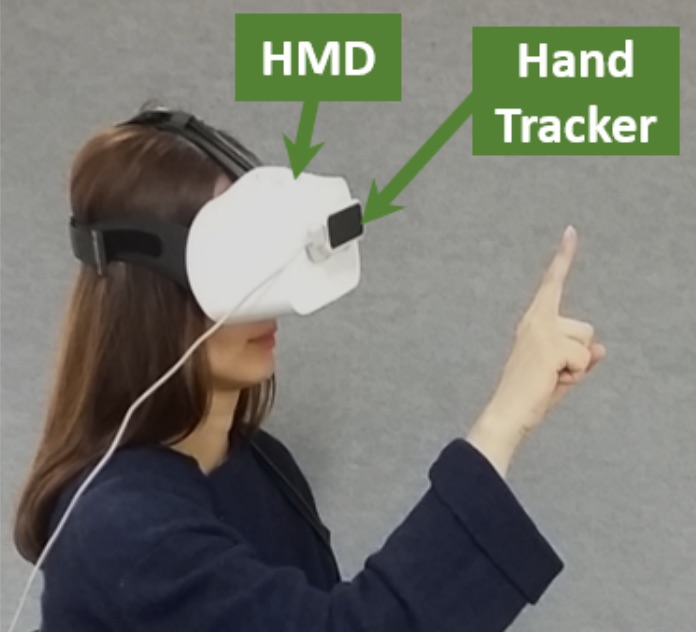

Empathy Glasses

Katsutoshi Masai, Kai Kunze, Maki Sugimoto, and Mark Billinghurst

Katsutoshi Masai, Kai Kunze, Maki Sugimoto, and Mark Billinghurst. 2016. Empathy Glasses. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems (CHI EA '16). ACM, New York, NY, USA, 1257-1263. https://doi.org/10.1145/2851581.2892370

@inproceedings{Masai:2016:EG:2851581.2892370,

author = {Masai, Katsutoshi and Kunze, Kai and Sugimoto, Maki and Billinghurst, Mark},

title = {Empathy Glasses},

booktitle = {Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems},

series = {CHI EA '16},

year = {2016},

isbn = {978-1-4503-4082-3},

location = {San Jose, California, USA},

pages = {1257--1263},

numpages = {7},

url = {http://doi.acm.org/10.1145/2851581.2892370},

doi = {10.1145/2851581.2892370},

acmid = {2892370},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {emotional interface, facial expression, remote collaboration, wearables},

}

In this paper, we describe Empathy Glasses, a head worn prototype designed to create an empathic connection between remote collaborators. The main novelty of our system is that it is the first to combine the following technologies together: (1) wearable facial expression capture hardware, (2) eye tracking, (3) a head worn camera, and (4) a see-through head mounted display, with a focus on remote collaboration. Using the system, a local user can send their information and a view of their environment to a remote helper who can send back visual cues on the local user's see-through display to help them perform a real world task. A pilot user study was conducted to explore how effective the Empathy Glasses were at supporting remote collaboration. We describe the implications that can be drawn from this user study.

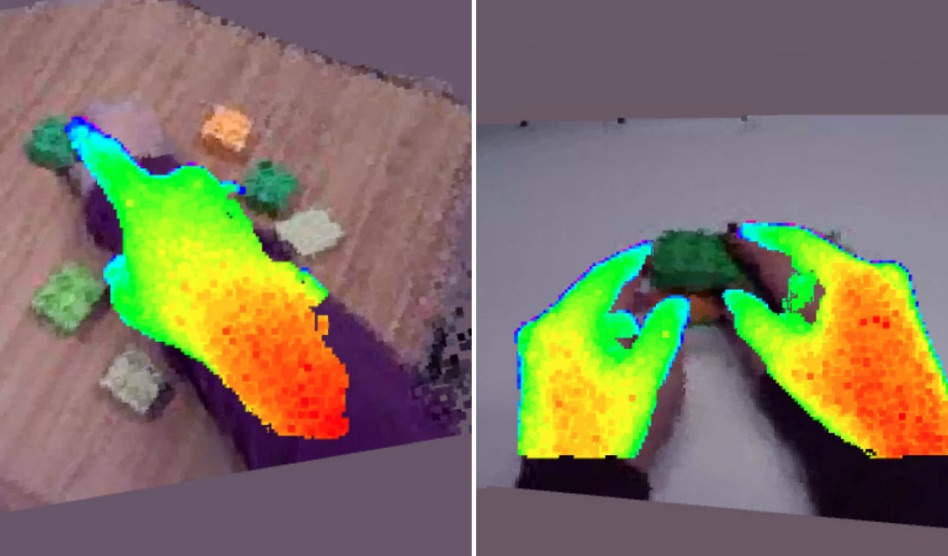

Hand gestures and visual annotation in live 360 panorama-based mixed reality remote collaboration

Theophilus Teo, Gun A. Lee, Mark Billinghurst, Matt Adcock

Theophilus Teo, Gun A. Lee, Mark Billinghurst, and Matt Adcock. 2018. Hand gestures and visual annotation in live 360 panorama-based mixed reality remote collaboration. In Proceedings of the 30th Australian Conference on Computer-Human Interaction (OzCHI '18). ACM, New York, NY, USA, 406-410. DOI: https://doi.org/10.1145/3292147.3292200

@inproceedings{Teo:2018:HGV:3292147.3292200,

author = {Teo, Theophilus and Lee, Gun A. and Billinghurst, Mark and Adcock, Matt},

title = {Hand Gestures and Visual Annotation in Live 360 Panorama-based Mixed Reality Remote Collaboration},

booktitle = {Proceedings of the 30th Australian Conference on Computer-Human Interaction},

series = {OzCHI '18},

year = {2018},

isbn = {978-1-4503-6188-0},

location = {Melbourne, Australia},

pages = {406--410},

numpages = {5},

url = {http://doi.acm.org/10.1145/3292147.3292200},

doi = {10.1145/3292147.3292200},

acmid = {3292200},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {gesture communication, mixed reality, remote collaboration},

}

In this paper, we investigate hand gestures and visual annotation cues overlaid in a live 360 panorama-based Mixed Reality remote collaboration. The prototype system captures 360 live panorama video of the surroundings of a local user and shares it with another person in a remote location. The two users wearing Augmented Reality or Virtual Reality head-mounted displays can collaborate using augmented visual communication cues such as virtual hand gestures, ray pointing, and drawing annotations. Our preliminary user evaluation comparing these cues found that using visual annotation cues (ray pointing and drawing annotation) helps local users perform collaborative tasks faster and more easily, with fewer errors and better understanding, compared to using only virtual hand gestures.

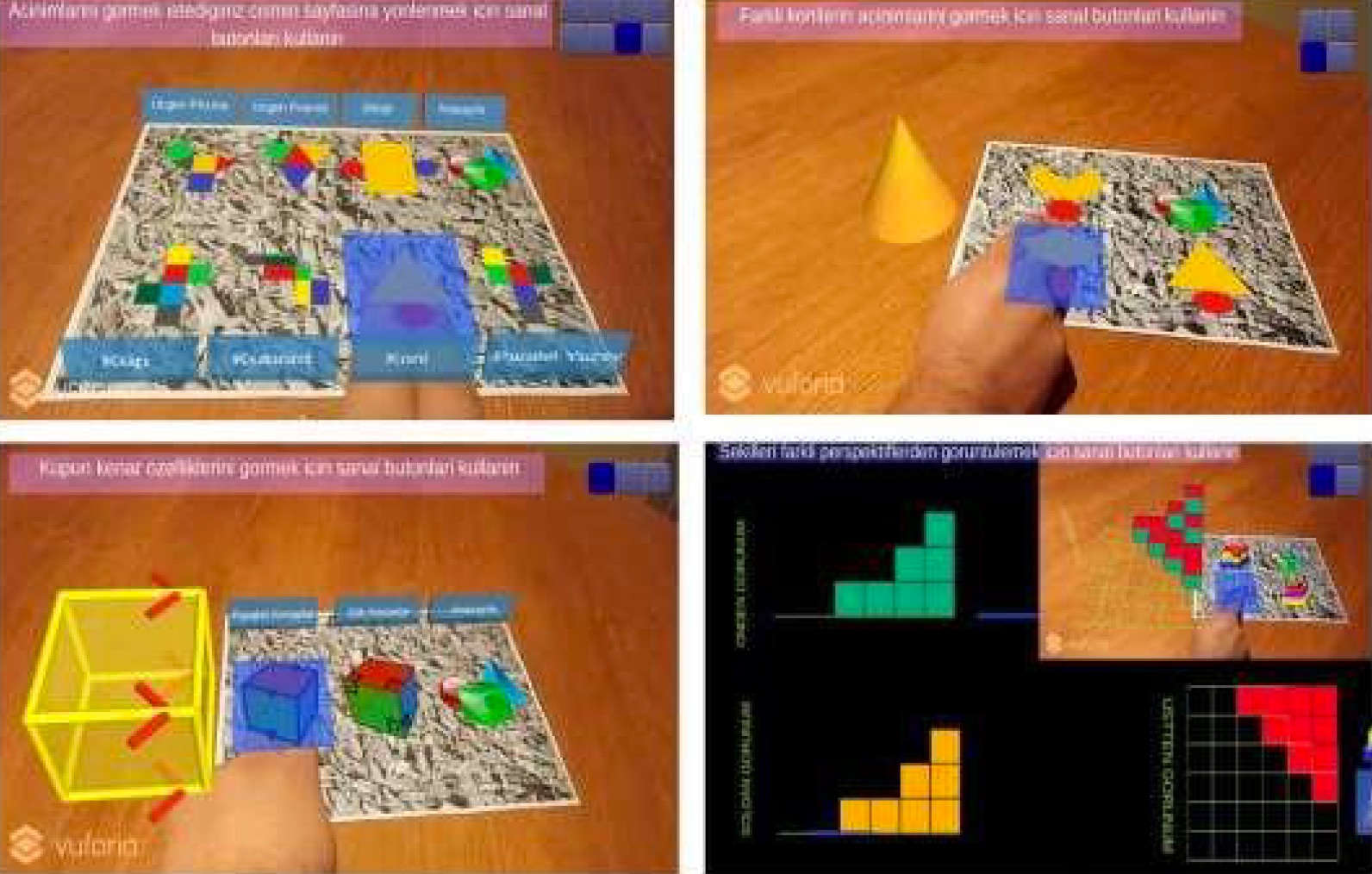

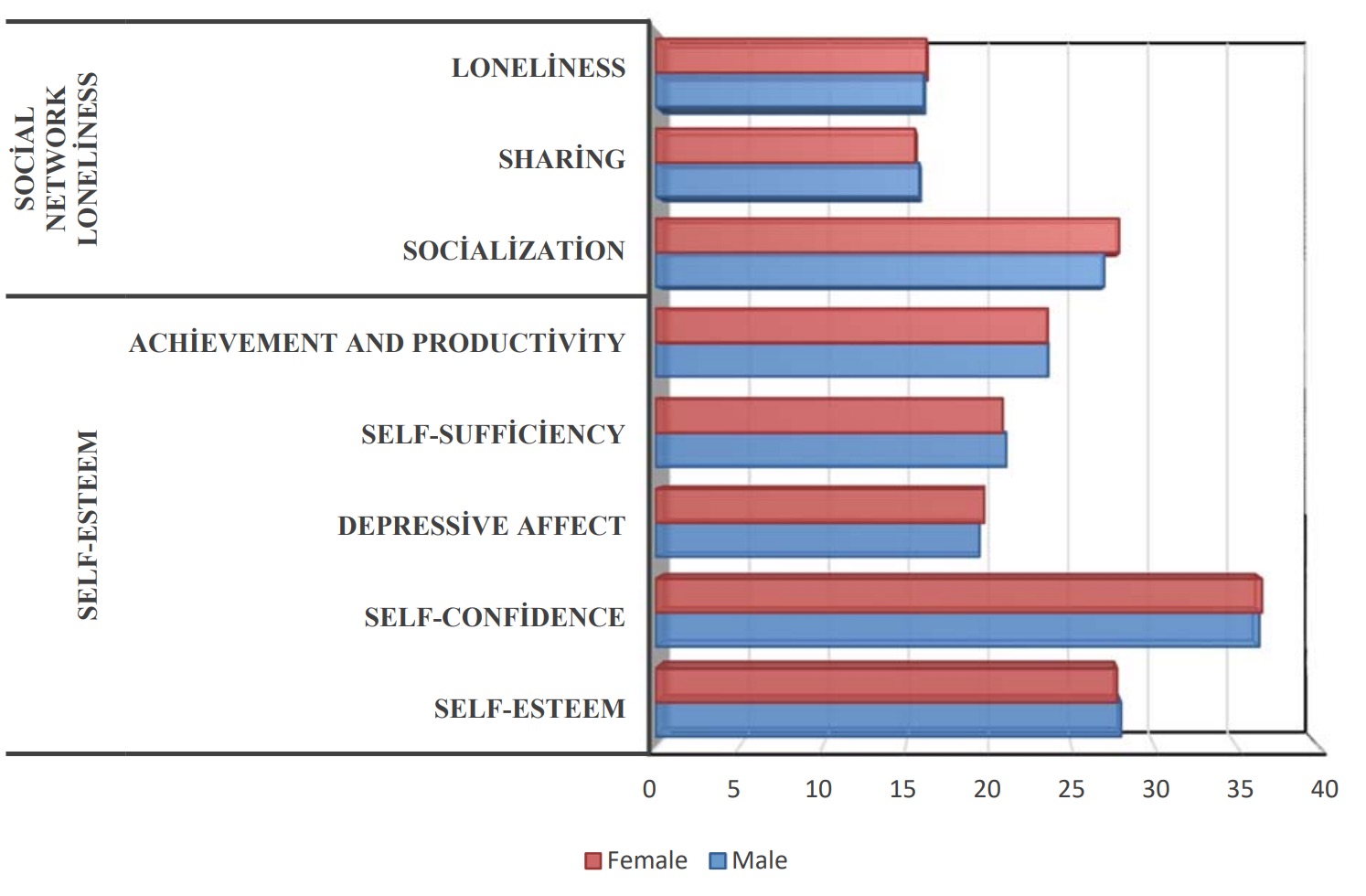

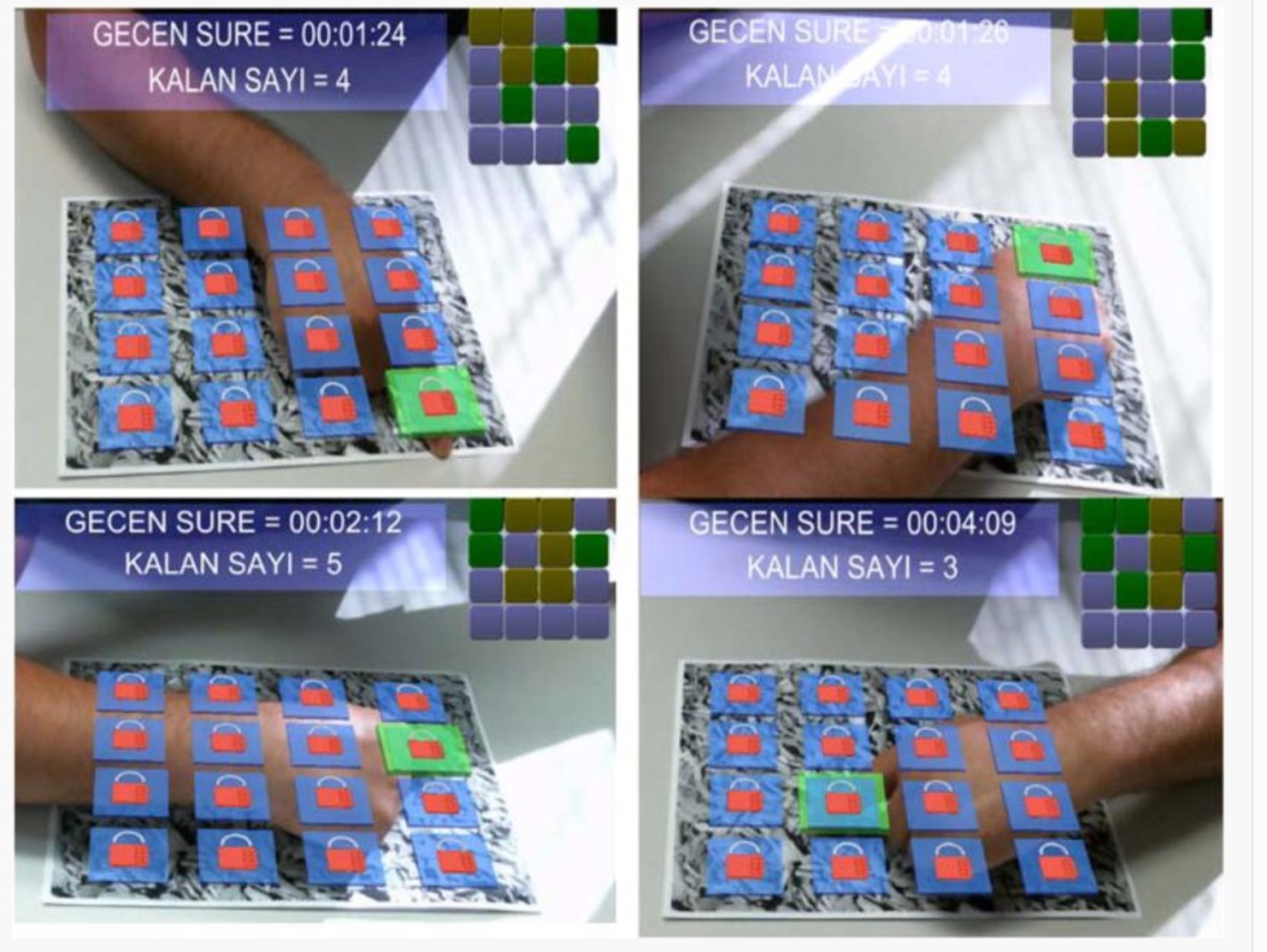

Assessing the Relationship between Cognitive Load and the Usability of a Mobile Augmented Reality Tutorial System: A Study of Gender Effects

E Ibili, M Billinghurst

Ibili, E., & Billinghurst, M. (2019). Assessing the Relationship between Cognitive Load and the Usability of a Mobile Augmented Reality Tutorial System: A Study of Gender Effects. International Journal of Assessment Tools in Education, 6(3), 378-395.

@article{ibili2019assessing,

title={Assessing the Relationship between Cognitive Load and the Usability of a Mobile Augmented Reality Tutorial System: A Study of Gender Effects},

author={Ibili, Emin and Billinghurst, Mark},

journal={International Journal of Assessment Tools in Education},

volume={6},

number={3},

pages={378--395},

year={2019}

}

In this study, the relationship between the usability of a mobile Augmented Reality (AR) tutorial system and cognitive load was examined. In this context, the relationship between perceived usefulness, perceived ease of use, and perceived natural interaction factors and intrinsic, extraneous, and germane cognitive load was investigated. In addition, the effect of gender on this relationship was investigated. The research results show that there was a strong relationship between the perceived ease of use and the extraneous load in males, and there was a strong relationship between the perceived usefulness and the intrinsic load in females. Both the perceived usefulness and the perceived ease of use had a strong relationship with the germane cognitive load. Moreover, the perceived natural interaction had a strong relationship with the perceived usefulness in females and the perceived ease of use in males. This research will provide significant clues to AR software developers and researchers to help reduce or control cognitive load in the development of AR-based instructional software.

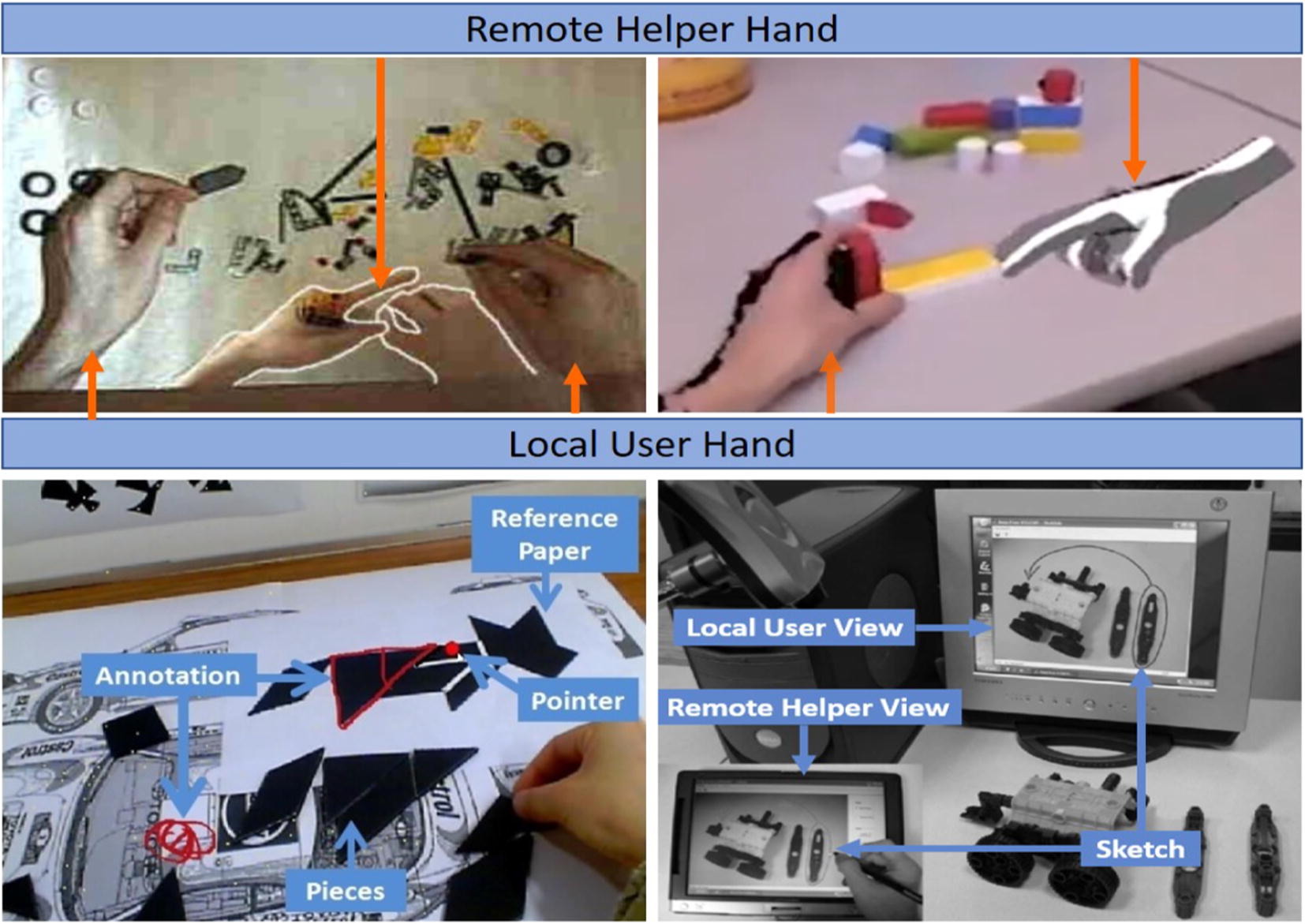

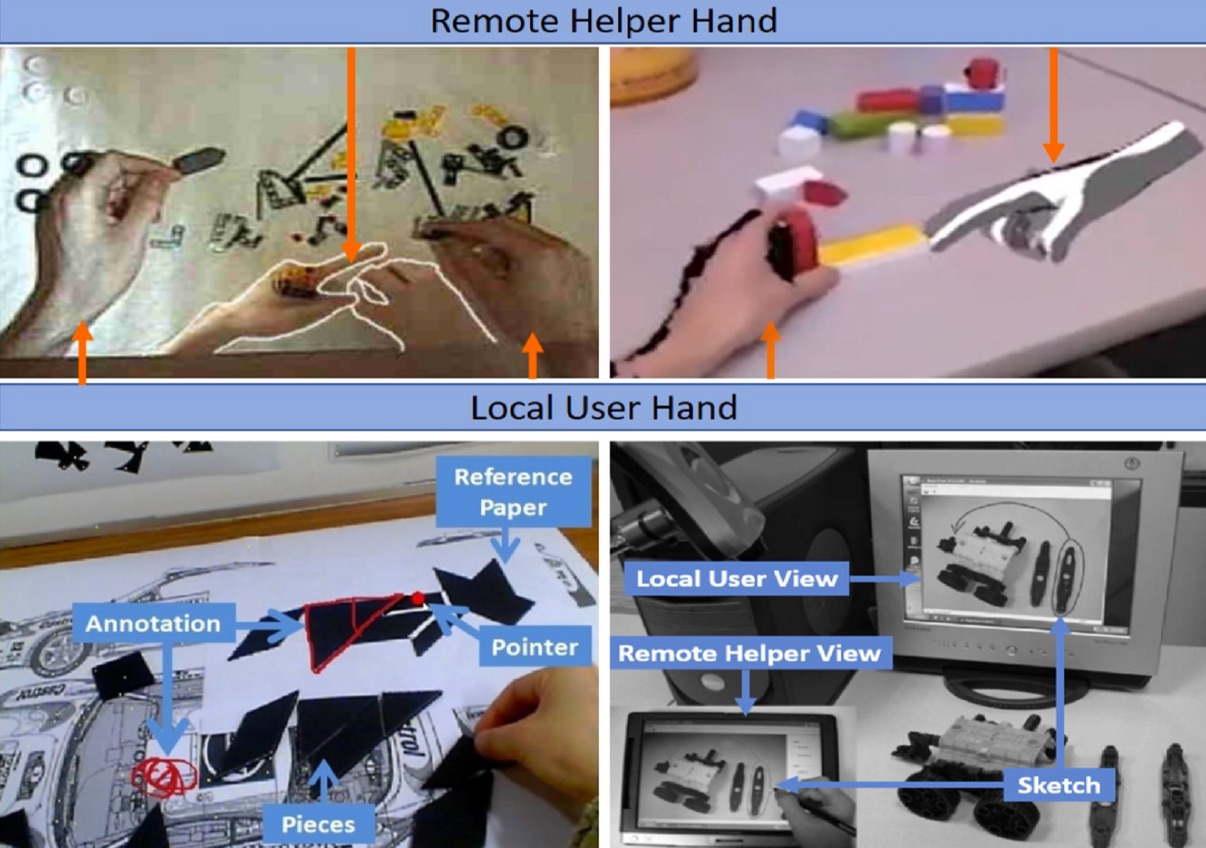

Sharing hand gesture and sketch cues in remote collaboration

W. Huang, S. Kim, M. Billinghurst, L. Alem

Huang, W., Kim, S., Billinghurst, M., & Alem, L. (2019). Sharing hand gesture and sketch cues in remote collaboration. Journal of Visual Communication and Image Representation, 58, 428-438.

@article{huang2019sharing,

title={Sharing hand gesture and sketch cues in remote collaboration},

author={Huang, Weidong and Kim, Seungwon and Billinghurst, Mark and Alem, Leila},

journal={Journal of Visual Communication and Image Representation},

volume={58},

pages={428--438},

year={2019},

publisher={Elsevier}

}

Many systems have been developed to support remote guidance, where a local worker manipulates objects under guidance of a remote expert helper. These systems typically use speech and visual cues between the local worker and the remote helper, where the visual cues could be pointers, hand gestures, or sketches. However, the effects of combining visual cues together in remote collaboration have not been fully explored. We conducted a user study comparing remote collaboration with an interface that combined hand gestures and sketching (the HandsInTouch interface) to one that only used hand gestures, when solving two tasks: Lego assembly and repairing a laptop. In the user study, we found that (1) adding sketch cues improved the task completion time, but only with the repairing task, as this had complex object manipulation, and (2) using gesture and sketching together created a higher task load for the user.

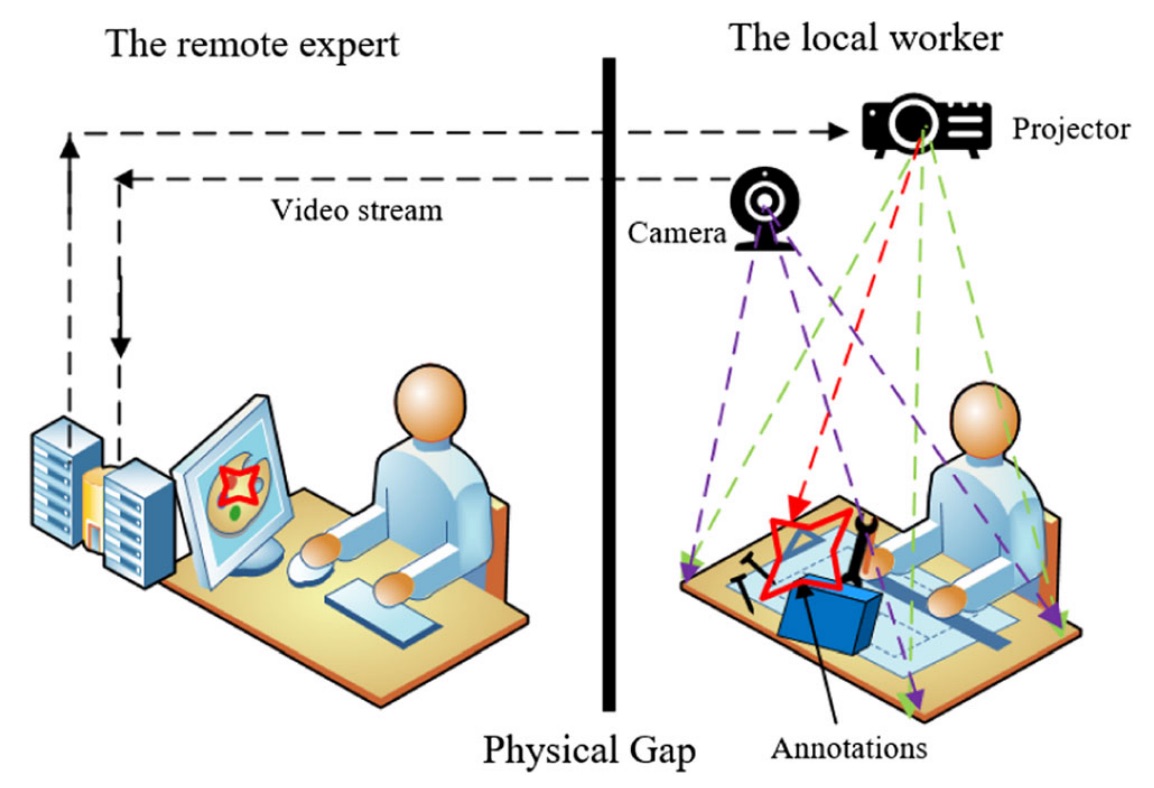

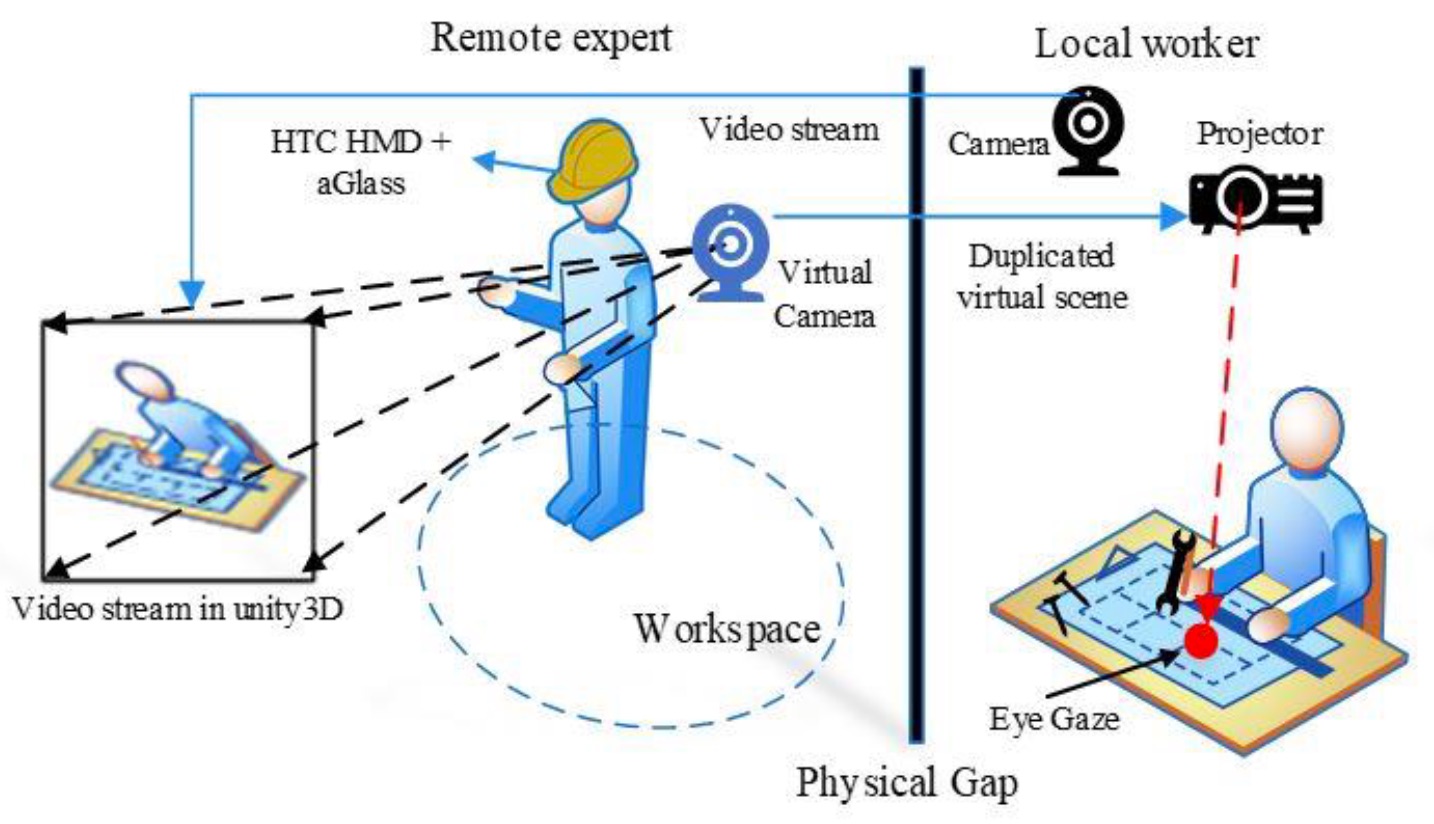

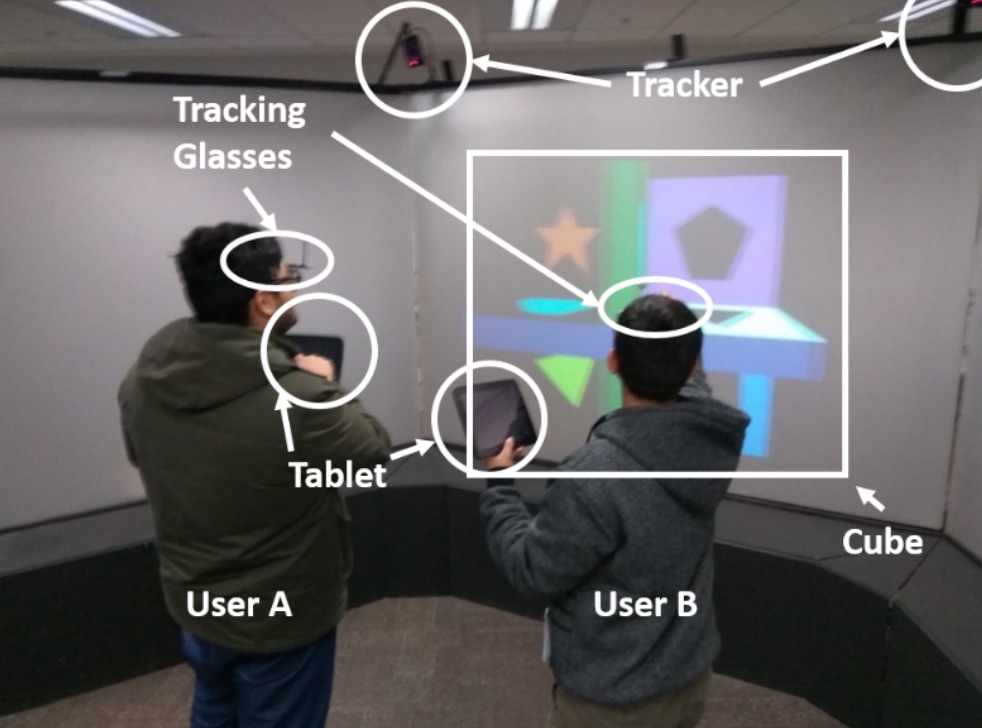

2.5DHANDS: a gesture-based MR remote collaborative platform

Wang, P., Zhang, S., Bai, X., Billinghurst, M., He, W., Sun, M.

Wang, P., Zhang, S., Bai, X., Billinghurst, M., He, W., Sun, M., ... & Ji, H. (2019). 2.5DHANDS: a gesture-based MR remote collaborative platform. The International Journal of Advanced Manufacturing Technology, 102(5-8), 1339-1353.

@article{wang20192,

title={2.5DHANDS: a gesture-based MR remote collaborative platform},

author={Wang, Peng and Zhang, Shusheng and Bai, Xiaoliang and Billinghurst, Mark and He, Weiping and Sun, Mengmeng and Chen, Yongxing and Lv, Hao and Ji, Hongyu},

journal={The International Journal of Advanced Manufacturing Technology},

volume={102},

number={5-8},

pages={1339--1353},

year={2019},

publisher={Springer}

}

Current remote collaborative systems in manufacturing are mainly based on video-conferencing technology. Their primary aim is to transmit manufacturing process knowledge between remote experts and local workers. However, such systems do not provide the experts with the same hands-on experience as when synergistically working on site in person. Mixed reality (MR) and increasing networking performance have the capacity to enhance the experience and communication between collaborators in geographically distributed locations. In this paper, therefore, we propose a new gesture-based remote collaborative platform using MR technology that enables a remote expert to collaborate with local workers on physical tasks. We concentrate on collaborative remote assembly as an illustrative use case. The key advantage compared to other remote collaborative MR interfaces is that it projects the remote expert’s gestures into the real worksite to improve the performance, co-presence awareness, and user collaboration experience. We aim to study the effects of sharing the remote expert’s gestures in remote collaboration using a projector-based MR system in manufacturing. Furthermore, we show the capabilities of our framework on a prototype consisting of a VR HMD, Leap Motion, and a projector. The prototype system was evaluated in a pilot study against the POINTER interface (adding AR annotations on the task space view through the mouse), which is the most popular method used to augment remote collaboration at present. The assessment covers the following aspects: the performance, user’s satisfaction, and the user-perceived collaboration quality in terms of the interaction and cooperation. Our results demonstrate a clear difference between the POINTER and 2.5DHANDS interface in the performance time. Additionally, the 2.5DHANDS interface was rated statistically significantly higher than the POINTER interface in terms of the awareness of user’s attention, manipulation, self-confidence, and co-presence.

The effects of sharing awareness cues in collaborative mixed reality

Piumsomboon, T., Dey, A., Ens, B., Lee, G., & Billinghurst, M.

Piumsomboon, T., Dey, A., Ens, B., Lee, G., & Billinghurst, M. (2019). The effects of sharing awareness cues in collaborative mixed reality. Front. Rob, 6(5).

@article{piumsomboon2019effects,

title={The effects of sharing awareness cues in collaborative mixed reality},

author={Piumsomboon, Thammathip and Dey, Arindam and Ens, Barrett and Lee, Gun and Billinghurst, Mark},

year={2019}

}

Augmented and Virtual Reality provide unique capabilities for Mixed Reality collaboration. This paper explores how different combinations of virtual awareness cues can provide users with valuable information about their collaborator's attention and actions. In a user study (n = 32, 16 pairs), we compared different combinations of three cues: Field-of-View (FoV) frustum, Eye-gaze ray, and Head-gaze ray against a baseline condition showing only virtual representations of each collaborator's head and hands. Through a collaborative object finding and placing task, the results showed that awareness cues significantly improved user performance, usability, and subjective preferences, with the combination of the FoV frustum and the Head-gaze ray being best. This work establishes the feasibility of room-scale MR collaboration and the utility of providing virtual awareness cues.

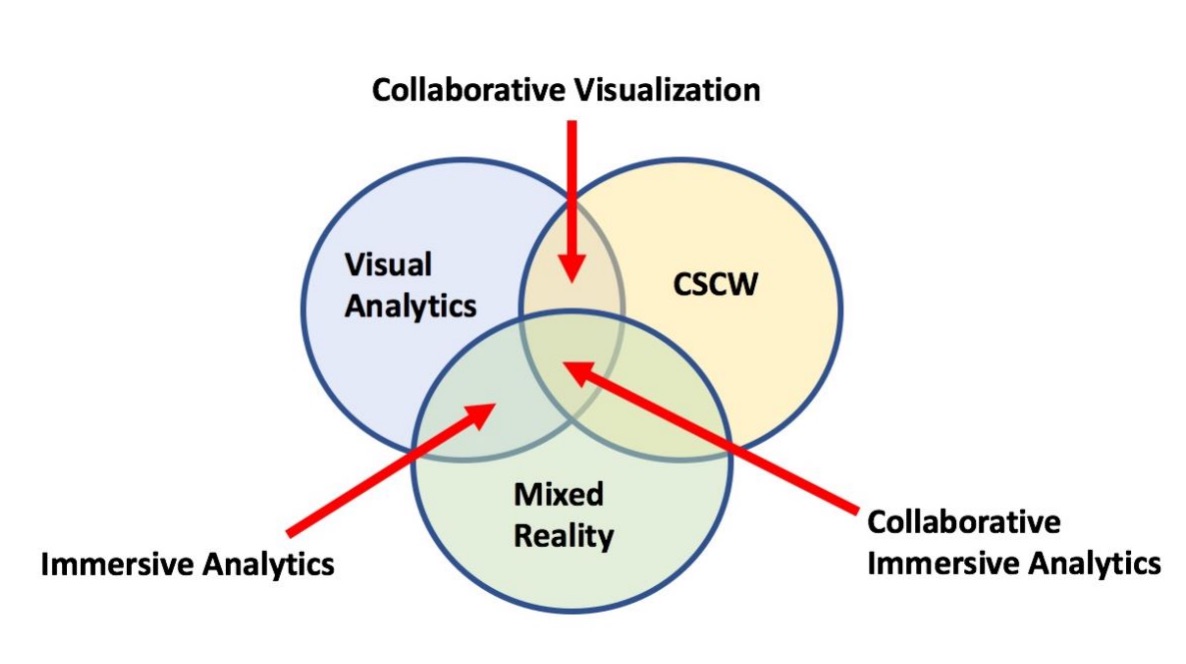

Revisiting collaboration through mixed reality: The evolution of groupware

Ens, B., Lanir, J., Tang, A., Bateman, S., Lee, G., Piumsomboon, T., & Billinghurst, M.

Ens, B., Lanir, J., Tang, A., Bateman, S., Lee, G., Piumsomboon, T., & Billinghurst, M. (2019). Revisiting collaboration through mixed reality: The evolution of groupware. International Journal of Human-Computer Studies.

@article{ens2019revisiting,

title={Revisiting collaboration through mixed reality: The evolution of groupware},

author={Ens, Barrett and Lanir, Joel and Tang, Anthony and Bateman, Scott and Lee, Gun and Piumsomboon, Thammathip and Billinghurst, Mark},

journal={International Journal of Human-Computer Studies},

year={2019},

publisher={Elsevier}

}

Collaborative Mixed Reality (MR) systems are at a critical point in time as they are soon to become more commonplace. However, MR technology has only recently matured to the point where researchers can focus deeply on the nuances of supporting collaboration, rather than needing to focus on creating the enabling technology. In parallel, but largely independently, the field of Computer Supported Cooperative Work (CSCW) has focused on the fundamental concerns that underlie human communication and collaboration over the past 30-plus years. Since MR research is now on the brink of moving into the real world, we reflect on three decades of collaborative MR research and try to reconcile it with existing theory from CSCW, to help position MR researchers to pursue fruitful directions for their work. To do this, we review the history of collaborative MR systems, investigating how the common taxonomies and frameworks in CSCW and MR research can be applied to existing work on collaborative MR systems, exploring where they have fallen behind, and look for new ways to describe current trends. Through identifying emergent trends, we suggest future directions for MR, and also find where CSCW researchers can explore new theory that more fully represents the future of working, playing and being with others.

WARPING DEIXIS: Distorting Gestures to Enhance Collaboration

Sousa, M., dos Anjos, R. K., Mendes, D., Billinghurst, M., & Jorge, J.

Sousa, M., dos Anjos, R. K., Mendes, D., Billinghurst, M., & Jorge, J. (2019, April). WARPING DEIXIS: Distorting Gestures to Enhance Collaboration. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (p. 608). ACM.

@inproceedings{sousa2019warping,

title={WARPING DEIXIS: Distorting Gestures to Enhance Collaboration},

author={Sousa, Maur{\'\i}cio and dos Anjos, Rafael Kufner and Mendes, Daniel and Billinghurst, Mark and Jorge, Joaquim},

booktitle={Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems},

pages={608},

year={2019},

organization={ACM}

}

When engaged in communication, people often rely on pointing gestures to refer to out-of-reach content. However, observers frequently misinterpret the target of a pointing gesture. Previous research suggests that to perform a pointing gesture, people place the index finger on or close to a line connecting the eye to the referent, while observers interpret pointing gestures by extrapolating the referent using a vector defined by the arm and index finger. In this paper we present Warping Deixis, a novel approach to improving the perception of pointing gestures and facilitate communication in collaborative Extended Reality environments. By warping the virtual representation of the pointing individual, we are able to match the pointing expression to the observer’s perception. We evaluated our approach in a colocated side by side virtual reality scenario. Results suggest that our approach is effective in improving the interpretation of pointing gestures in shared virtual environments.

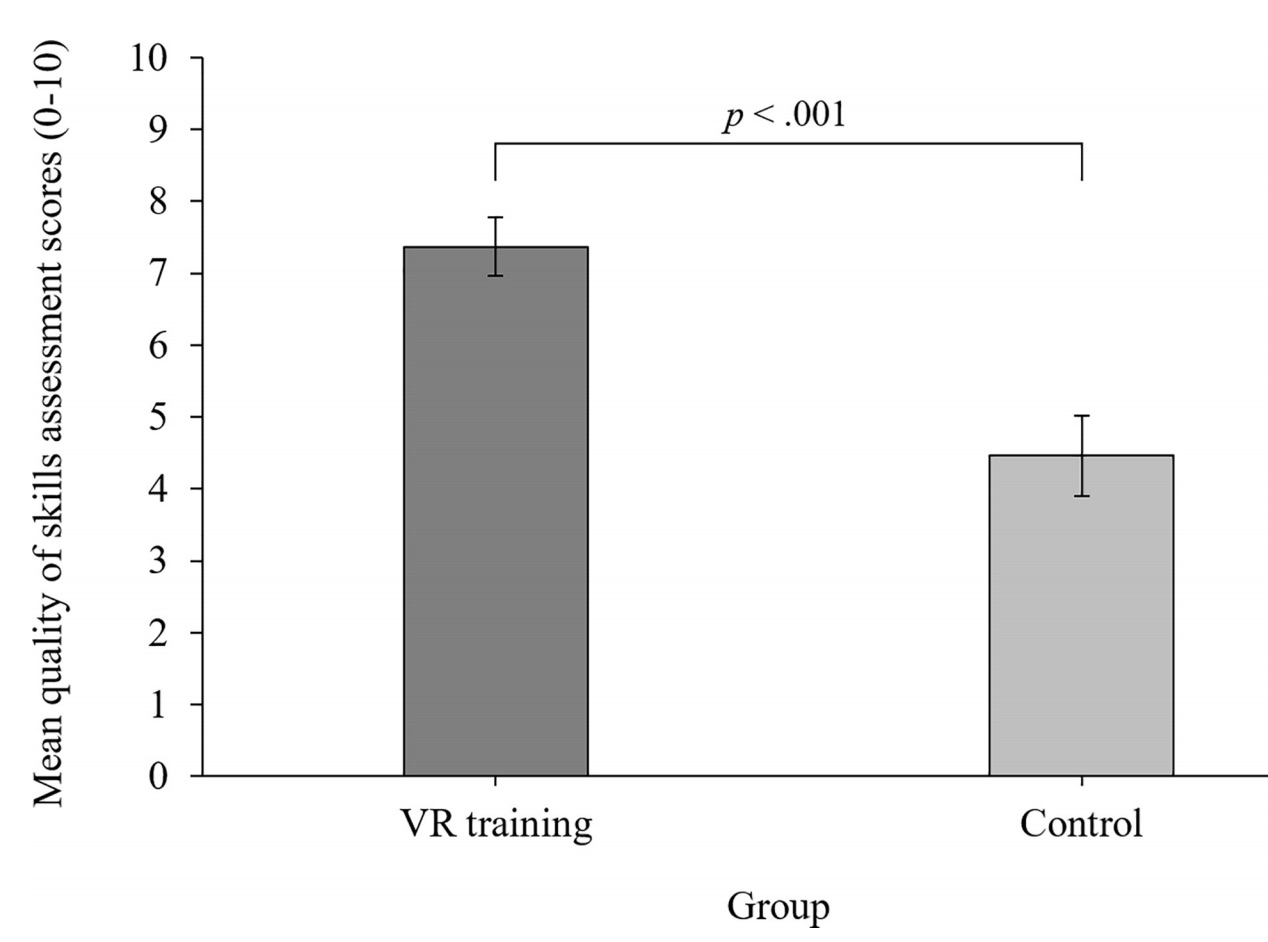

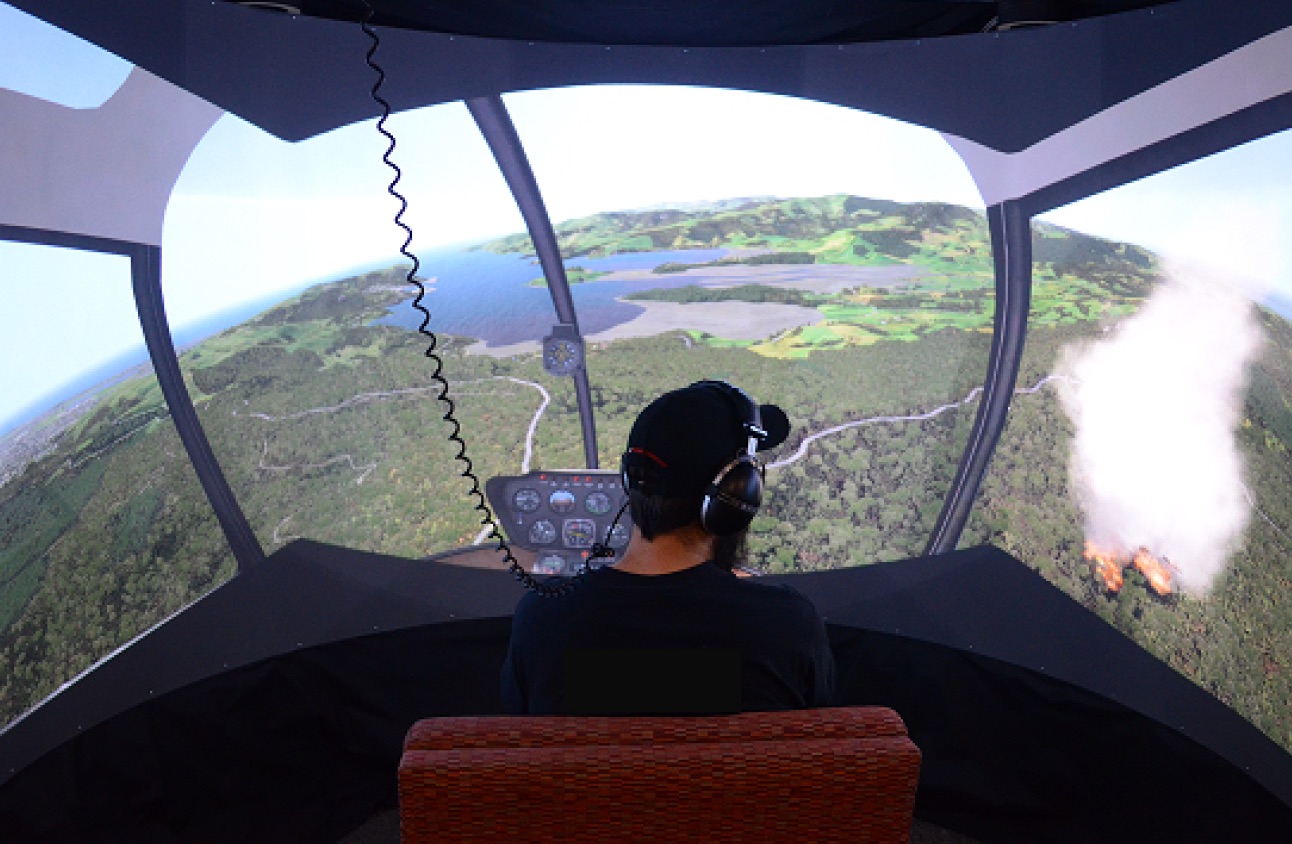

Getting your game on: Using virtual reality to improve real table tennis skills

Michalski, S. C., Szpak, A., Saredakis, D., Ross, T. J., Billinghurst, M., & Loetscher, T.

Michalski, S. C., Szpak, A., Saredakis, D., Ross, T. J., Billinghurst, M., & Loetscher, T. (2019). Getting your game on: Using virtual reality to improve real table tennis skills. PloS one, 14(9).

@article{michalski2019getting,

title={Getting your game on: Using virtual reality to improve real table tennis skills},

author={Michalski, Stefan Carlo and Szpak, Ancret and Saredakis, Dimitrios and Ross, Tyler James and Billinghurst, Mark and Loetscher, Tobias},

journal={PloS one},

volume={14},

number={9},

year={2019},

publisher={Public Library of Science}

}

Background: A key assumption of VR training is that the learned skills and experiences transfer to the real world. Yet, in certain application areas, such as VR sports training, the research testing this assumption is sparse. Design: Real-world table tennis performance was assessed using a mixed-model analysis of variance. The analysis comprised a between-subjects (VR training group vs control group) and a within-subjects (pre- and post-training) factor. Method: Fifty-seven participants (23 females) were either assigned to a VR training group (n = 29) or no-training control group (n = 28). During VR training, participants were immersed in competitive table tennis matches against an artificial intelligence opponent. An expert table tennis coach evaluated participants on real-world table tennis playing before and after the training phase. Blinded regarding participant’s group assignment, the expert assessed participants’ backhand, forehand and serving on quantitative aspects (e.g. count of rallies without errors) and quality of skill aspects (e.g. technique and consistency). Results: VR training significantly improved participants’ real-world table tennis performance compared to a no-training control group in both quantitative (p < .001, Cohen’s d = 1.08) and quality of skill assessments (p < .001, Cohen’s d = 1.10). Conclusions: This study adds to a sparse yet expanding literature, demonstrating real-world skill transfer from Virtual Reality in an athletic task.

On the Shoulder of the Giant: A Multi-Scale Mixed Reality Collaboration with 360 Video Sharing and Tangible Interaction

Piumsomboon, T., Lee, G. A., Irlitti, A., Ens, B., Thomas, B. H., & Billinghurst, M.

Piumsomboon, T., Lee, G. A., Irlitti, A., Ens, B., Thomas, B. H., & Billinghurst, M. (2019, April). On the Shoulder of the Giant: A Multi-Scale Mixed Reality Collaboration with 360 Video Sharing and Tangible Interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (p. 228). ACM.

@inproceedings{piumsomboon2019shoulder,

title={On the Shoulder of the Giant: A Multi-Scale Mixed Reality Collaboration with 360 Video Sharing and Tangible Interaction},

author={Piumsomboon, Thammathip and Lee, Gun A and Irlitti, Andrew and Ens, Barrett and Thomas, Bruce H and Billinghurst, Mark},

booktitle={Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems},

pages={228},

year={2019},

organization={ACM}

}

We propose a multi-scale Mixed Reality (MR) collaboration between the Giant, a local Augmented Reality user, and the Miniature, a remote Virtual Reality user, in Giant-Miniature Collaboration (GMC). The Miniature is immersed in a 360-video shared by the Giant who can physically manipulate the Miniature through a tangible interface, a combined 360-camera with a 6 DOF tracker. We implemented a prototype system as a proof of concept and conducted a user study (n=24) comprising of four parts comparing: A) two types of virtual representations, B) three levels of Miniature control, C) three levels of 360-video view dependencies, and D) four 360-camera placement positions on the Giant. The results show users prefer a shoulder mounted camera view, while a view frustum with a complimentary avatar is a good visualization for the Miniature virtual representation. From the results, we give design recommendations and demonstrate an example Giant-Miniature Interaction.

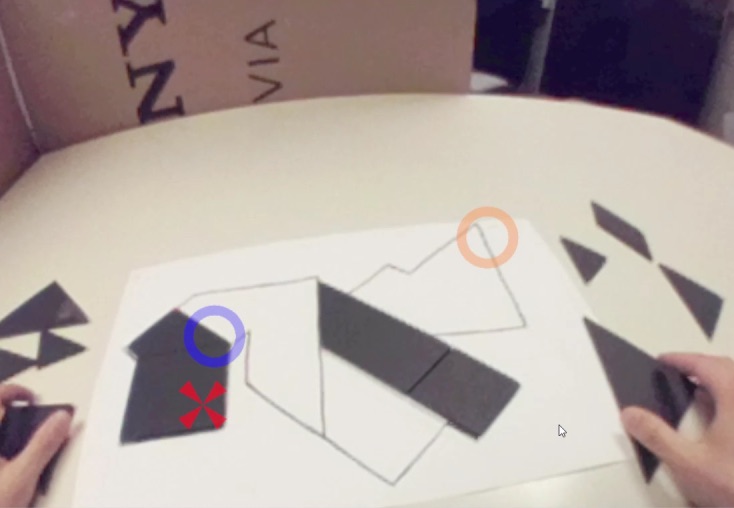

Evaluating the Combination of Visual Communication Cues for HMD-based Mixed Reality Remote Collaboration

Kim, S., Lee, G., Huang, W., Kim, H., Woo, W., & Billinghurst, M.

Kim, S., Lee, G., Huang, W., Kim, H., Woo, W., & Billinghurst, M. (2019, April). Evaluating the Combination of Visual Communication Cues for HMD-based Mixed Reality Remote Collaboration. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (p. 173). ACM.

@inproceedings{kim2019evaluating,

title={Evaluating the Combination of Visual Communication Cues for HMD-based Mixed Reality Remote Collaboration},

author={Kim, Seungwon and Lee, Gun and Huang, Weidong and Kim, Hayun and Woo, Woontack and Billinghurst, Mark},

booktitle={Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems},

pages={173},

year={2019},

organization={ACM}

}

Many researchers have studied various visual communication cues (e.g. pointer, sketching, and hand gesture) in Mixed Reality remote collaboration systems for real-world tasks. However, the effect of combining them has not been so well explored. We studied the effect of these cues in four combinations: hand only, hand + pointer, hand + sketch, and hand + pointer + sketch, with three problem tasks: Lego, Tangram, and Origami. The study results showed that the participants completed the task significantly faster and felt a significantly higher level of usability when the sketch cue is added to the hand gesture cue, but not with adding the pointer cue. Participants also preferred the combinations including hand and sketch cues over the other combinations. However, using additional cues (pointer or sketch) increased the perceived mental effort and did not improve the feeling of co-presence. We discuss the implications of these results and future research directions.

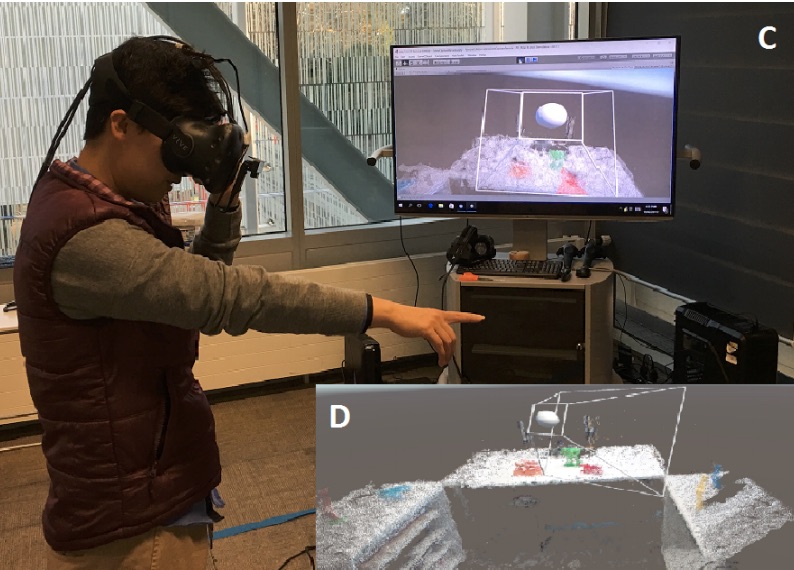

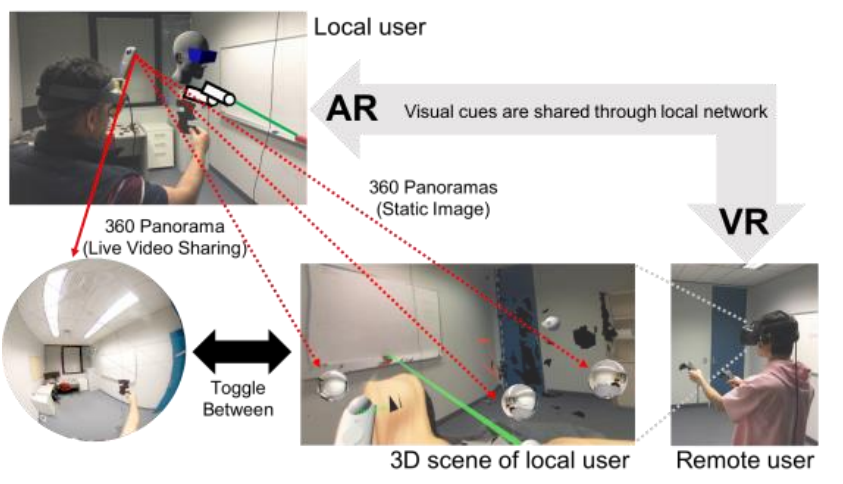

Mixed Reality Remote Collaboration Combining 360 Video and 3D Reconstruction

Teo, T., Lawrence, L., Lee, G. A., Billinghurst, M., & Adcock, M.

Teo, T., Lawrence, L., Lee, G. A., Billinghurst, M., & Adcock, M. (2019, April). Mixed Reality Remote Collaboration Combining 360 Video and 3D Reconstruction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (p. 201). ACM.

@inproceedings{teo2019mixed,

title={Mixed Reality Remote Collaboration Combining 360 Video and 3D Reconstruction},

author={Teo, Theophilus and Lawrence, Louise and Lee, Gun A and Billinghurst, Mark and Adcock, Matt},

booktitle={Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems},

pages={201},

year={2019},

organization={ACM}

}

Remote Collaboration using Virtual Reality (VR) and Augmented Reality (AR) has recently become a popular way for people from different places to work together. Local workers can collaborate with remote helpers by sharing 360-degree live video or 3D virtual reconstruction of their surroundings. However, each of these techniques has benefits and drawbacks. In this paper we explore mixing 360 video and 3D reconstruction together for remote collaboration, by preserving benefits of both systems while reducing drawbacks of each. We developed a hybrid prototype and conducted user study to compare benefits and problems of using 360 or 3D alone to clarify the needs for mixing the two, and also to evaluate the prototype system. We found participants performed significantly better on collaborative search tasks in 360 and felt higher social presence, yet 3D also showed potential to complement. Participant feedback collected after trying our hybrid system provided directions for improvement.

Using Augmented Reality with Speech Input for Non-Native Children's Language Learning

Dalim, C. S. C., Sunar, M. S., Dey, A., & Billinghurst, M.

Dalim, C. S. C., Sunar, M. S., Dey, A., & Billinghurst, M. (2019). Using Augmented Reality with Speech Input for Non-Native Children's Language Learning. International Journal of Human-Computer Studies.

@article{dalim2019using,

title={Using Augmented Reality with Speech Input for Non-Native Children's Language Learning},