Mikko Kytö

Visiting Researcher

Dr. Mikko Kytö is a Postdoctoral Research Fellow from Finland. Before joining the Empathic Computing Lab, he worked at Aalto University in the Department of Computer Science, where he received his Ph.D. in 2014. He received his Master's degree in interactive and digital media from Helsinki University of Technology in 2009.

Interests

His research interests include augmented reality, social interaction, IoT, perception, and 3D user interfaces. His current research project concerns the use of gaze in augmented reality environments to support interaction with smart objects.

Projects

Pinpointing

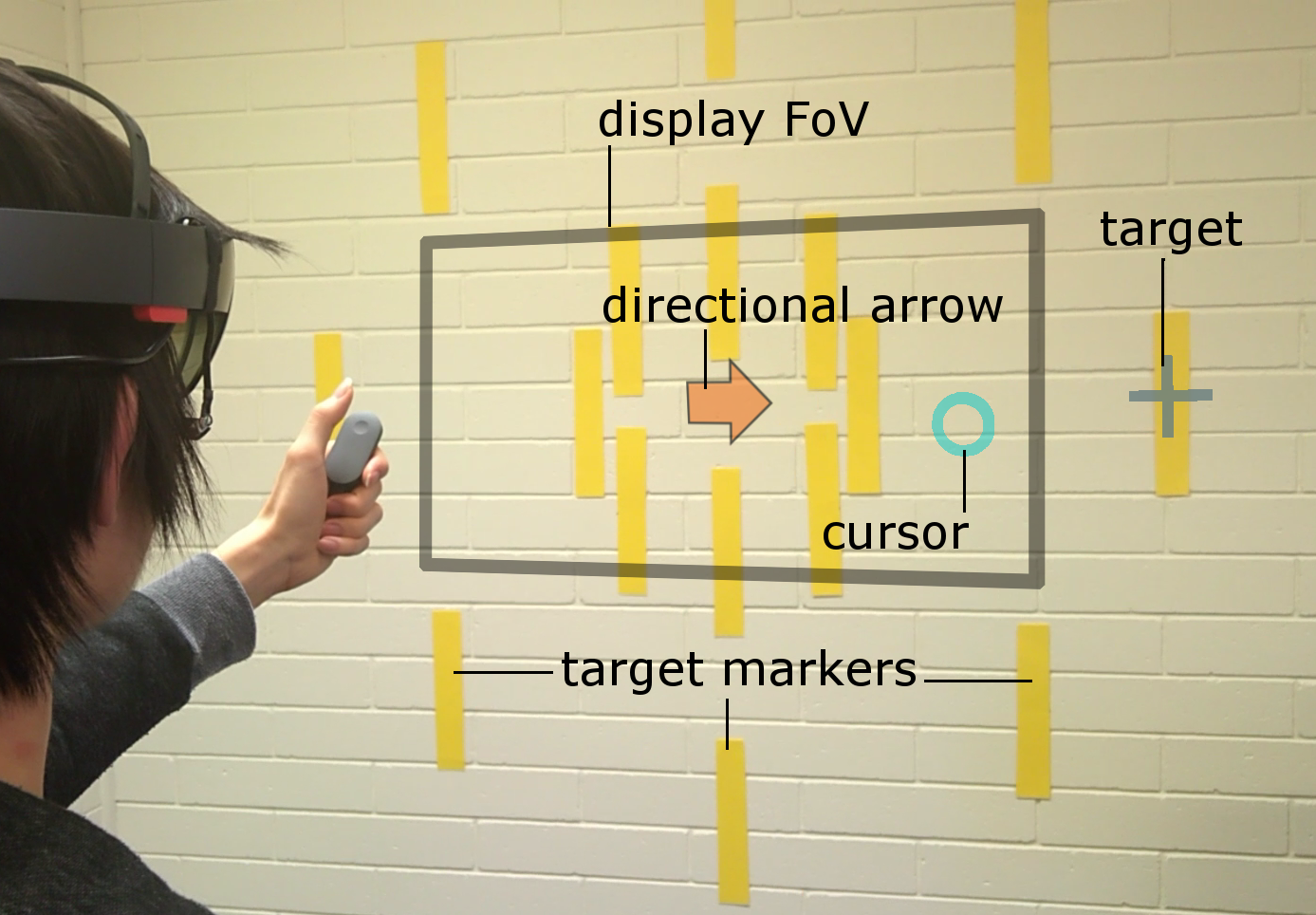

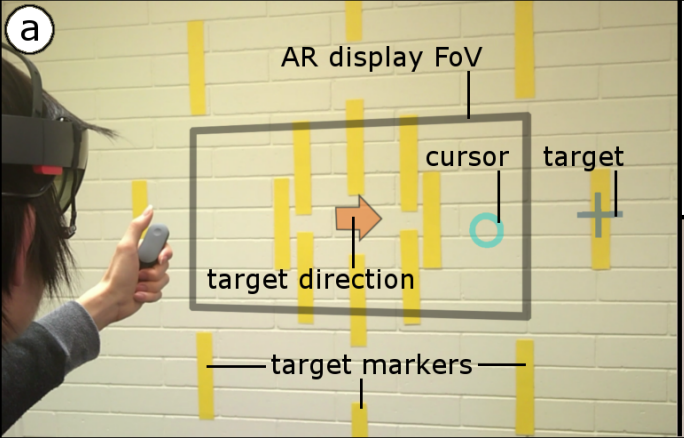

Head and eye movement can be leveraged to improve the user’s interaction repertoire for wearable displays. Head movements are deliberate and accurate, and provide the current state-of-the-art pointing technique. Eye gaze can potentially be faster and more ergonomic, but suffers from low accuracy due to calibration errors and drift of wearable eye-tracking sensors. This work investigates precise, multimodal selection techniques using head motion and eye gaze. A comparison of speed and pointing accuracy reveals the relative merits of each method, including the achievable target size for robust selection. We demonstrate and discuss example applications for augmented reality, including compact menus with deep structure, and a proof-of-concept method for on-line correction of calibration drift.

Publications

Pinpointing: Precise Head- and Eye-Based Target Selection for Augmented Reality

Mikko Kytö, Barrett Ens, Thammathip Piumsomboon, Gun A. Lee, Mark Billinghurst

Mikko Kytö, Barrett Ens, Thammathip Piumsomboon, Gun A. Lee, and Mark Billinghurst. 2018. Pinpointing: Precise Head- and Eye-Based Target Selection for Augmented Reality. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems (CHI '18). ACM, New York, NY, USA, Paper 81, 14 pages. DOI: https://doi.org/10.1145/3173574.3173655

@inproceedings{Kyto:2018:PPH:3173574.3173655,

author = {Kyt\"{o}, Mikko and Ens, Barrett and Piumsomboon, Thammathip and Lee, Gun A. and Billinghurst, Mark},

title = {Pinpointing: Precise Head- and Eye-Based Target Selection for Augmented Reality},

booktitle = {Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems},

series = {CHI '18},

year = {2018},

isbn = {978-1-4503-5620-6},

location = {Montreal QC, Canada},

pages = {81:1--81:14},

articleno = {81},

numpages = {14},

url = {http://doi.acm.org/10.1145/3173574.3173655},

doi = {10.1145/3173574.3173655},

acmid = {3173655},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {augmented reality, eye tracking, gaze interaction, head-worn display, refinement techniques, target selection},

}

View: https://dl.acm.org/ft_gateway.cfm?id=3173655&ftid=1958752&dwn=1&CFID=51906271&CFTOKEN=b63dc7f7afbcc656-4D4F3907-C934-F85B-2D539C0F52E3652A

Video: https://youtu.be/nCX8zIEmv0s