Mitchell Norman

PhD Student

Mitchell Norman is a PhD student studying Augmented Reality (AR) collaboration. Mitchell graduated from the University of South Australia with a Bachelor of Software Engineering (Honours) and completed his Honours project, ‘VR for Big Data analytics’, for CSIRO with fellow PhD student Theophilus Teo. Both Theo and Mitchell received a scholarship from CSIRO for this project. Mitchell has a keen interest in Virtual Reality (VR) and AR applications and how they can help industry solve problems more effectively.

Projects

Augmented Mirrors

Mirrors are physical displays that show our real world in reflection. While physical mirrors simply show what is in the real-world scene, digital technology also lets us alter the reality reflected in the mirror. The Augmented Mirrors project aims to explore visualisation and interaction techniques that exploit mirrors as Augmented Reality (AR) displays. The project focuses especially on using user interface agents to guide user interaction with Augmented Mirrors.

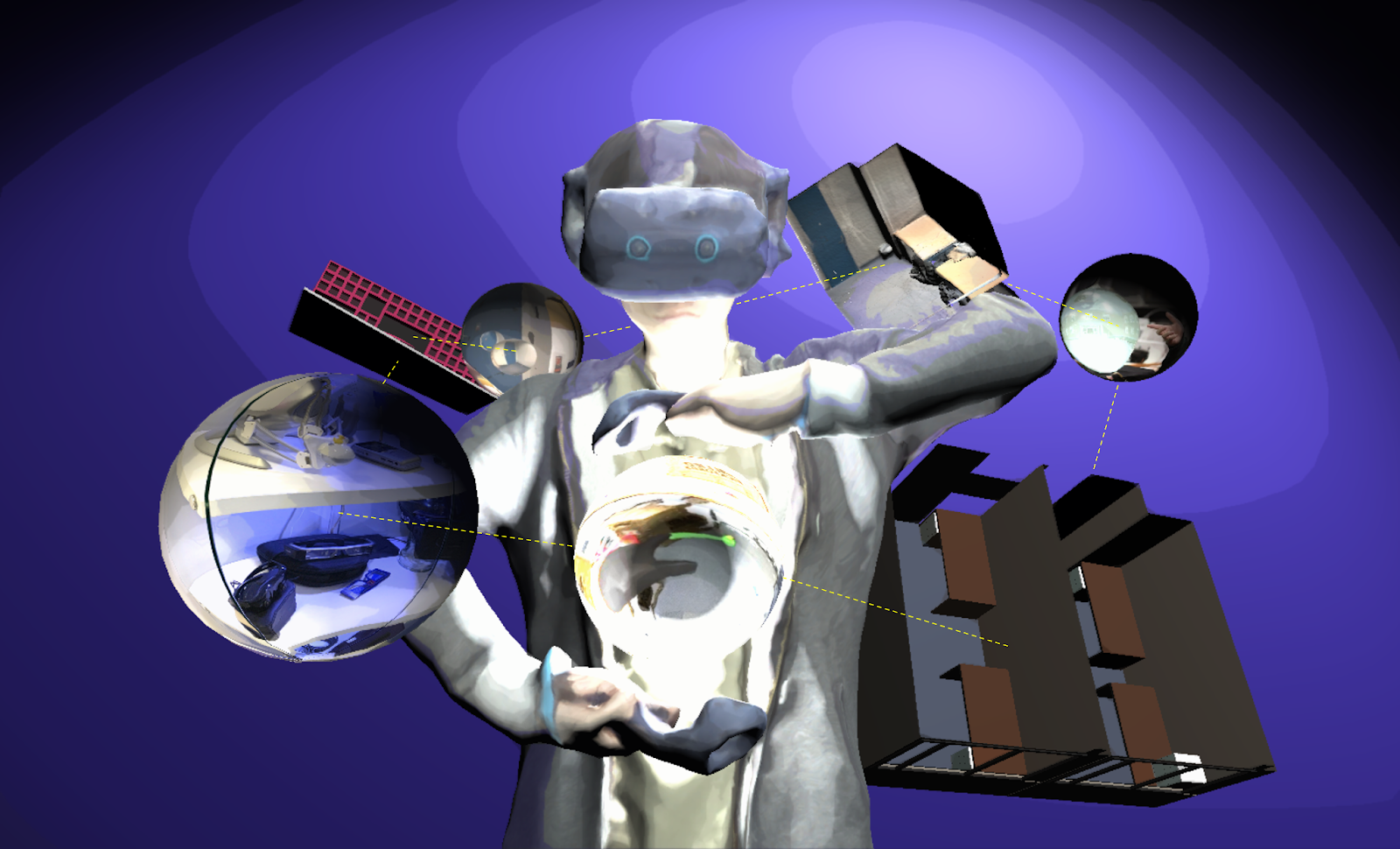

Using 3D Spaces and 360 Video Content for Collaboration

This project explores techniques to enhance the collaborative experience in Mixed Reality environments using 3D reconstructions, 360 videos and 2D images. Previous research has shown that 360 video can provide a high-resolution, immersive visual space for collaboration, but with little spatial information. Conversely, 3D-scanned environments can provide high-quality spatial cues, but with poor visual resolution. This project combines both approaches, enabling users to switch between a 3D view and a 360 video of a collaborative space. In this hybrid interface, users can pick the representation of the space best suited to the needs of the collaborative task. The project seeks to provide design guidelines for collaboration systems that enable empathic collaboration by sharing visual cues and environments across time and space.

MPConnect: A Mixed Presence Mixed Reality System

This project explores how a Mixed Presence Mixed Reality system, in which some collaborators are co-located and others are remote, can enhance remote collaboration. Collaborative Mixed Reality (MR) is a popular area of research, but most work has focused on one-to-one systems in which the collaborators are either co-located or remote from one another. For example, remote users might collaborate in a shared Virtual Reality (VR) system, or a local worker might use an Augmented Reality (AR) display to connect with a remote expert who helps them complete a task.

Publications

Improving Collaboration in Augmented Video Conference using Mutually Shared Gaze

Gun Lee, Seungwon Kim, Youngho Lee, Arindam Dey, Thammathip Piumsomboon, Mitchell Norman and Mark Billinghurst. 2017. Improving Collaboration in Augmented Video Conference using Mutually Shared Gaze. In Proceedings of ICAT-EGVE 2017 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments, pp. 197-204. http://dx.doi.org/10.2312/egve.20171359

@inproceedings {egve.20171359,

booktitle = {ICAT-EGVE 2017 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments},

editor = {Robert W. Lindeman and Gerd Bruder and Daisuke Iwai},

title = {{Improving Collaboration in Augmented Video Conference using Mutually Shared Gaze}},

author = {Lee, Gun A. and Kim, Seungwon and Lee, Youngho and Dey, Arindam and Piumsomboon, Thammathip and Norman, Mitchell and Billinghurst, Mark},

year = {2017},

publisher = {The Eurographics Association},

ISSN = {1727-530X},

ISBN = {978-3-03868-038-3},

DOI = {10.2312/egve.20171359}

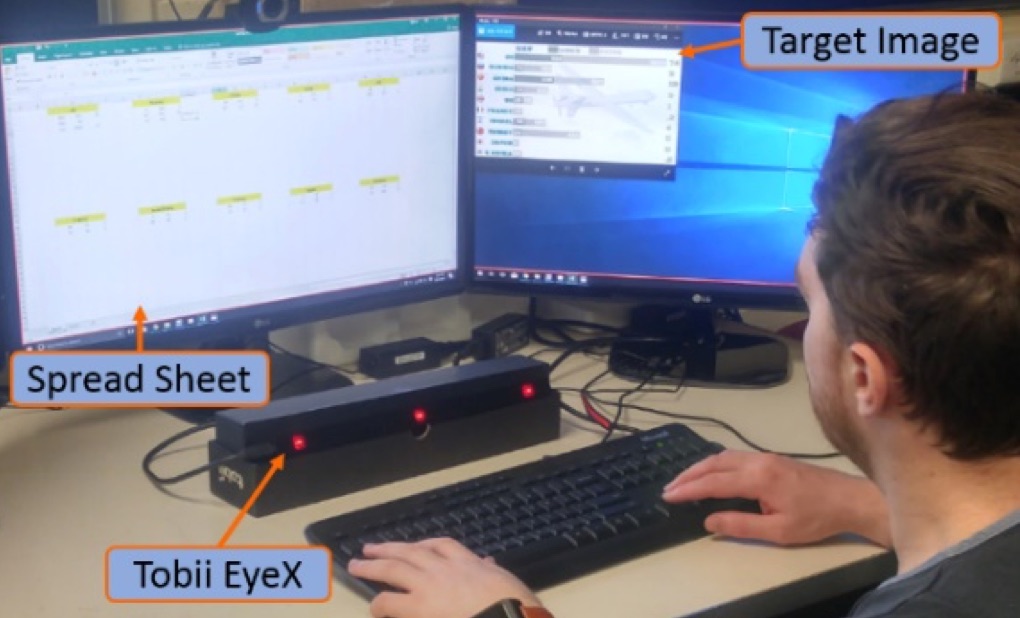

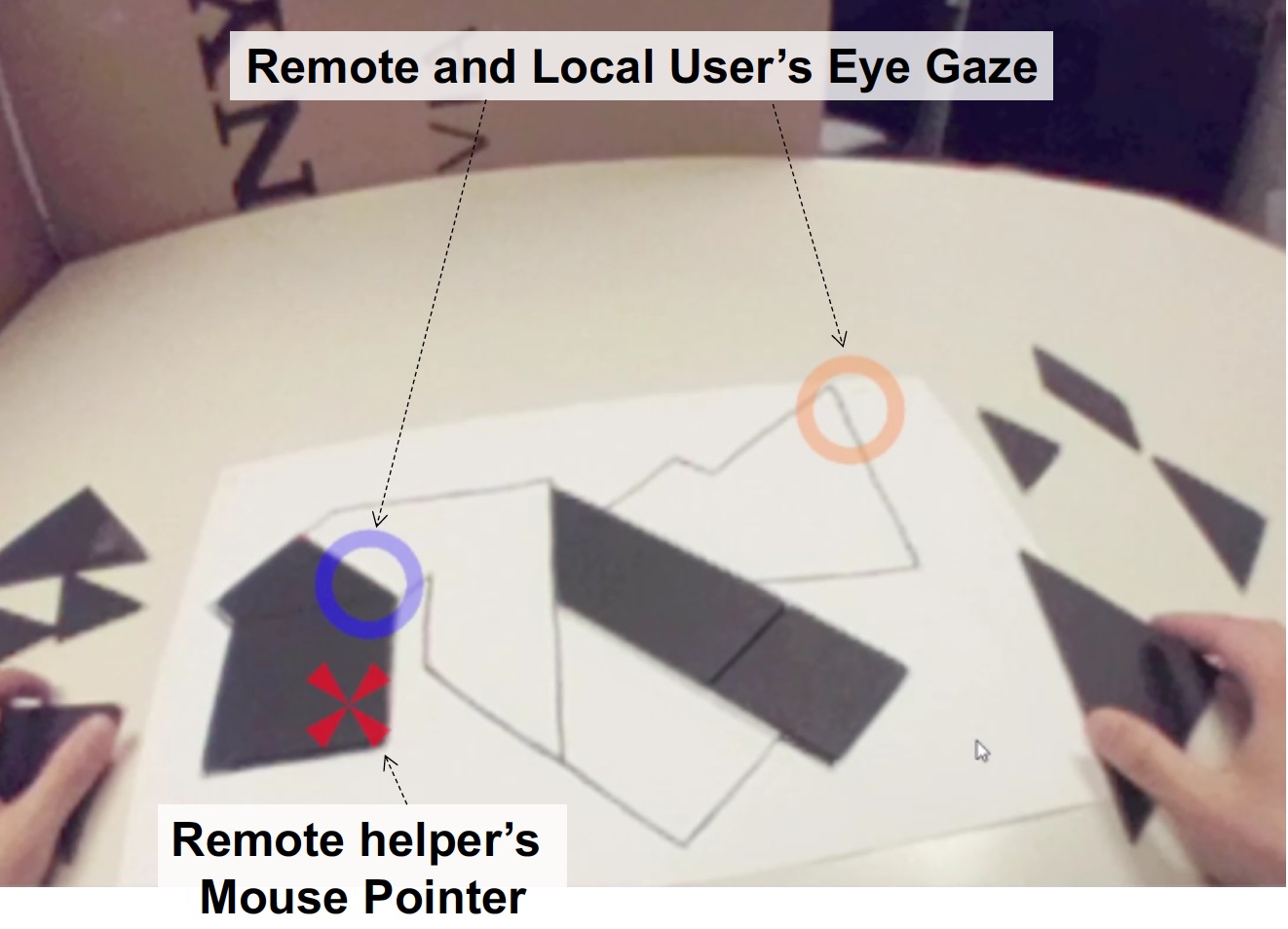

}To improve remote collaboration in video conferencing systems, researchers have been investigating augmenting visual cues onto a shared live video stream. In such systems, a person wearing a head-mounted display (HMD) and camera can share her view of the surrounding real-world with a remote collaborator to receive assistance on a real-world task. While this concept of augmented video conferencing (AVC) has been actively investigated, there has been little research on how sharing gaze cues might affect the collaboration in video conferencing. This paper investigates how sharing gaze in both directions between a local worker and remote helper in an AVC system affects the collaboration and communication. Using a prototype AVC system that shares the eye gaze of both users, we conducted a user study that compares four conditions with different combinations of eye gaze sharing between the two users. The results showed that sharing each other’s gaze significantly improved collaboration and communication. -

Sharing Emotion by Displaying a Partner Near the Gaze Point in a Telepresence System

Kim, S., Billinghurst, M., Lee, G., Norman, M., Huang, W., & He, J. (2019, July). Sharing Emotion by Displaying a Partner Near the Gaze Point in a Telepresence System. In 2019 23rd International Conference in Information Visualization–Part II (pp. 86-91). IEEE.

@inproceedings{kim2019sharing,

title={Sharing Emotion by Displaying a Partner Near the Gaze Point in a Telepresence System},

author={Kim, Seungwon and Billinghurst, Mark and Lee, Gun and Norman, Mitchell and Huang, Weidong and He, Jian},

booktitle={2019 23rd International Conference in Information Visualization--Part II},

pages={86--91},

year={2019},

organization={IEEE}

}

In this paper, we explore the effect of showing a remote partner close to the user's gaze point in a teleconferencing system. We implemented a gaze-following function in a teleconferencing system and investigated whether this improves the user's feeling of emotional interdependence. We developed a prototype system that shows a remote partner close to the user's current gaze point and conducted a user study comparing it to a condition displaying the partner fixed in the corner of a screen. Our results showed that showing a partner close to their gaze point helped users feel a higher level of emotional interdependence. In addition, we compared the effect of our method between small and big displays, but there was no significant difference in the users' feeling of emotional interdependence, even though the big display was preferred.

Mutually Shared Gaze in Augmented Video Conference

Lee, G., Kim, S., Lee, Y., Dey, A., Piumsomboon, T., Norman, M., & Billinghurst, M. (2017, October). Mutually Shared Gaze in Augmented Video Conference. In Adjunct Proceedings of the 2017 IEEE International Symposium on Mixed and Augmented Reality, ISMAR-Adjunct 2017 (pp. 79-80). Institute of Electrical and Electronics Engineers Inc.

@inproceedings{lee2017mutually,

title={Mutually Shared Gaze in Augmented Video Conference},

author={Lee, Gun and Kim, Seungwon and Lee, Youngho and Dey, Arindam and Piumsomboon, Thammathip and Norman, Mitchell and Billinghurst, Mark},

booktitle={Adjunct Proceedings of the 2017 IEEE International Symposium on Mixed and Augmented Reality, ISMAR-Adjunct 2017},

pages={79--80},

year={2017},

organization={Institute of Electrical and Electronics Engineers Inc.}

}

Augmenting video conferences with additional visual cues has been studied as a way to improve remote collaboration. A common setup is a person wearing a head-mounted display (HMD) and camera who shares her view of the workspace with a remote collaborator and receives assistance on a real-world task. While this configuration has been extensively studied, there has been little research on how sharing gaze cues might affect the collaboration. This research investigates how sharing gaze in both directions between a local worker and remote helper affects the collaboration and communication. We developed a prototype system that shares the eye gaze of both users, and conducted a user study. Preliminary results showed that sharing gaze significantly improves the awareness of each other's focus, hence improving collaboration.

Exploring interaction techniques for 360 panoramas inside a 3D reconstructed scene for mixed reality remote collaboration

Theophilus Teo, Mitchell Norman, Gun A. Lee, Mark Billinghurst & Matt Adcock

T. Teo, M. Norman, G. A. Lee, M. Billinghurst and M. Adcock. “Exploring interaction techniques for 360 panoramas inside a 3D reconstructed scene for mixed reality remote collaboration.” Journal on Multimodal User Interfaces (JMUI), vol. 14, pp. 373–385, 2020.

@article{teo2020exploring,

title={Exploring interaction techniques for 360 panoramas inside a 3D reconstructed scene for mixed reality remote collaboration},

author={Teo, Theophilus and Norman, Mitchell and Lee, Gun A and Billinghurst, Mark and Adcock, Matt},

journal={Journal on Multimodal User Interfaces},

volume={14},

pages={373--385},

year={2020},

publisher={Springer}

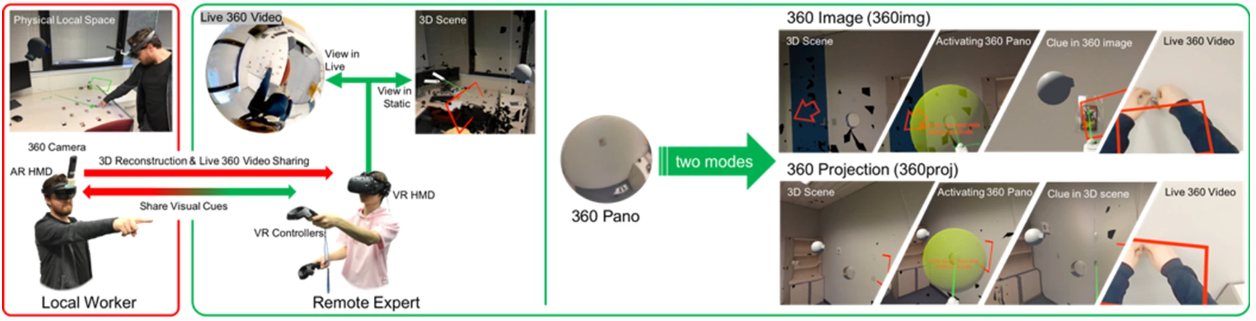

}Remote collaboration using mixed reality (MR) enables two separated workers to collaborate by sharing visual cues. A local worker can share his/her environment to the remote worker for a better contextual understanding. However, prior techniques were using either 360 video sharing or a complicated 3D reconstruction configuration. This limits the interactivity and practicality of the system. In this paper we show an interactive and easy-to-configure MR remote collaboration technique enabling a local worker to easily share his/her environment by integrating 360 panorama images into a low-cost 3D reconstructed scene as photo-bubbles and projective textures. This enables the remote worker to visit past scenes on either an immersive 360 panoramic scenery, or an interactive 3D environment. We developed a prototype and conducted a user study comparing the two modes of how 360 panorama images could be used in a remote collaboration system. Results suggested that both photo-bubbles and projective textures can provide high social presence, co-presence and low cognitive load for solving tasks while each have its advantage and limitations. For example, photo-bubbles are good for a quick navigation inside the 3D environment without depth perception while projective textures are good for spatial understanding but require physical efforts. -

A Mixed Presence Collaborative Mixed Reality System

Mitchell Norman; Gun Lee; Ross T. Smith; Mark Billinghurst

M. Norman, G. Lee, R. T. Smith and M. Billinghurst, "A Mixed Presence Collaborative Mixed Reality System," 2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Osaka, Japan, 2019, pp. 1106-1107, doi: 10.1109/VR.2019.8797966.

@INPROCEEDINGS{8797966,

author={Norman, Mitchell and Lee, Gun and Smith, Ross T. and Billinghurst, Mark},

booktitle={2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR)},

title={A Mixed Presence Collaborative Mixed Reality System},

year={2019},

volume={},

number={},

pages={1106-1107},

doi={10.1109/VR.2019.8797966}}

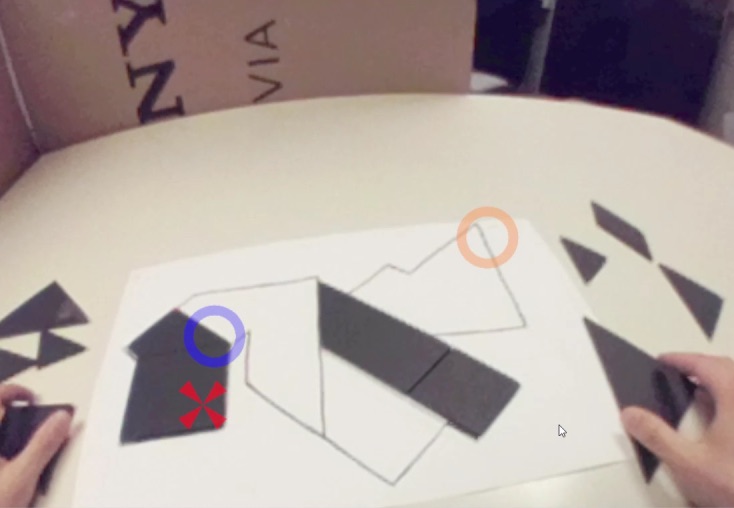

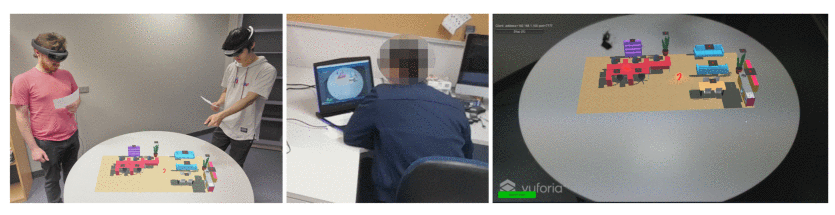

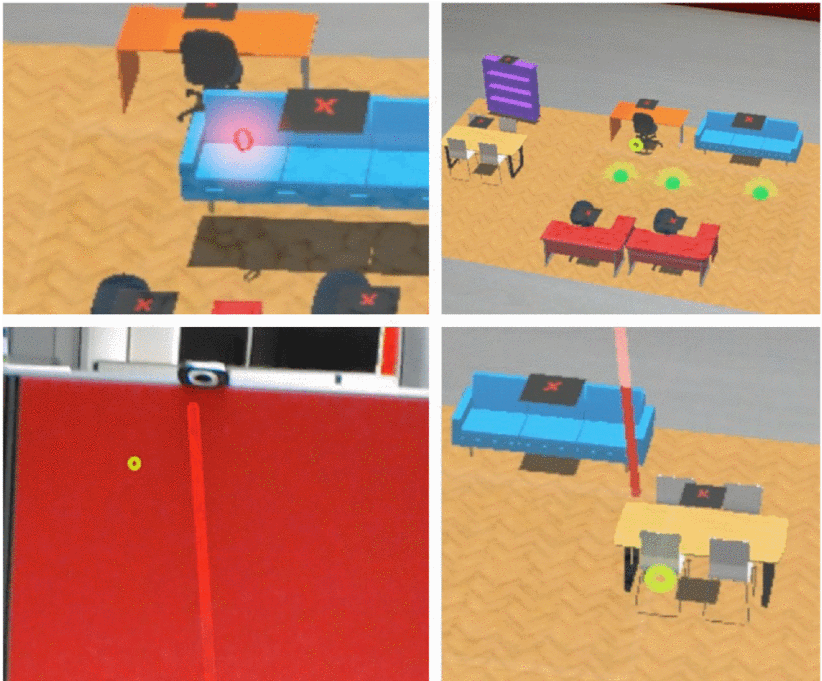

Research has shown that Mixed Presence Groupware (MPG) systems are a valuable collaboration tool. However, research into MPG systems is limited to a handful of tabletop and Virtual Reality (VR) systems, with no exploration of Head-Mounted Display (HMD) based Augmented Reality (AR) solutions. We present a new system with two local users and one remote user using HMD-based AR interfaces. Our system provides tools that allow users to lay out a room with the help of a remote user. The remote user has access to marker and pointer tools to assist in directing the local users. Feedback collected from several groups of users showed that our system is easy to learn, but that its accuracy and consistency could be improved.
